Anthropic admits 40% token bug, Abridge hits Epic, OpenAI Codex trails Gemini

Three vendor pitches today land behind the postmortem, peer review, or competitor product that already measured what they were selling.

Anthropic admits 40% token bug, Abridge hits Epic, OpenAI Codex trails Gemini

TL;DR

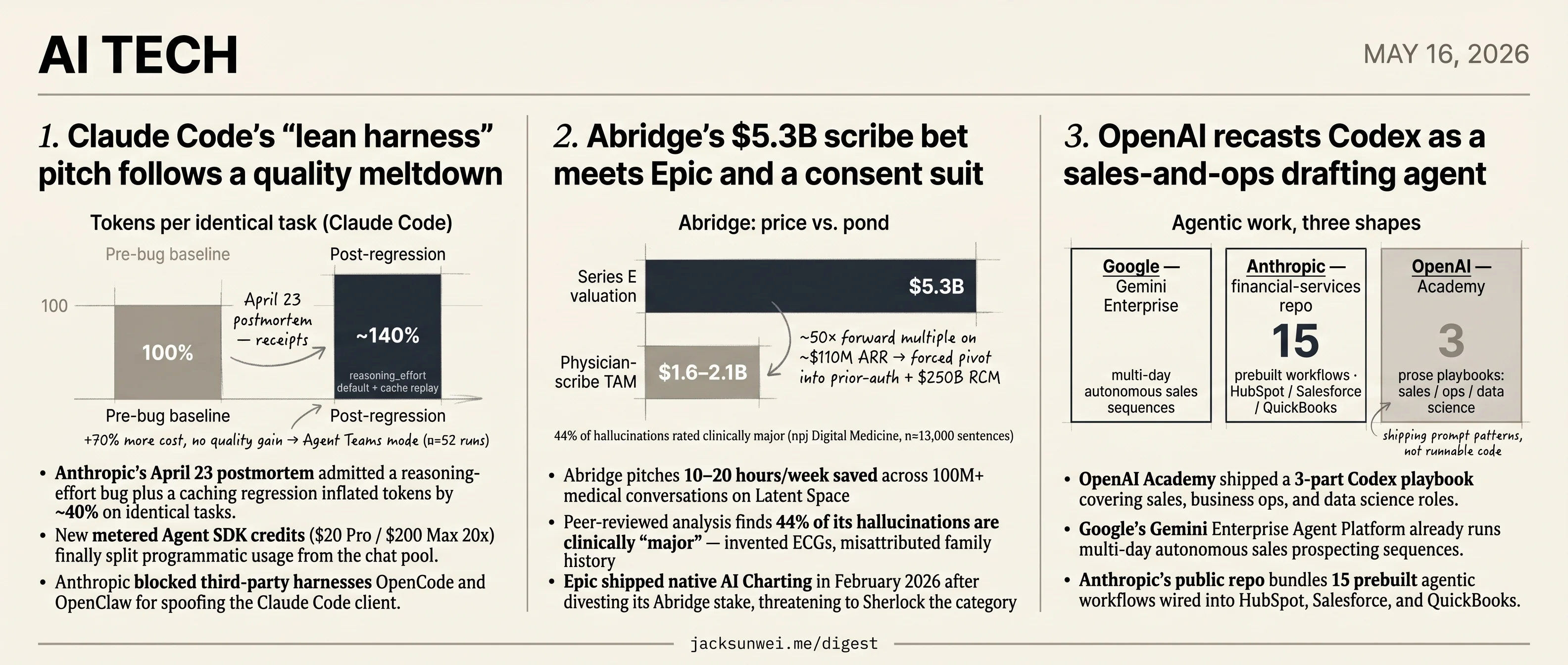

- Anthropic’s postmortem admits a reasoning-effort bug inflated Claude Code tokens ~40% on identical tasks.

- Anthropic blocks third-party harnesses OpenCode and OpenClaw for spoofing the Claude Code client.

- Abridge faces Epic’s native AI Charting and a peer-reviewed 44% major-hallucination rate.

- OpenAI Academy ships a 3-part Codex playbook for sales, ops, and data science roles.

- Datasette plugin caps per-user LLM spend with rolling 24-hour dollar budgets.

Today’s tech stories share a pattern: the vendor’s framing arrives later than the measurement that contradicts it. Anthropic rebrands Claude Code as a lean harness in the same week its own postmortem confesses a reasoning-effort bug plus a caching regression that inflated tokens by ~40% on identical tasks — and a community benchmark finds the new parallel Agent Teams mode costs 70%+ more for no quality gain. Abridge pitches 10–20 hours/week saved at a $5.3B valuation while a peer-reviewed audit finds 44% of its hallucinations are clinically major and Epic ships native AI Charting into the same workflow. OpenAI ships a Codex playbook for sales and ops roles where Gemini Enterprise already runs multi-day autonomous prospecting and Anthropic’s public repo bundles 15 prebuilt agentic workflows.

The round-ups sit alongside as practical counterweights — a Datasette plugin that finally puts a dollar cap on per-user LLM spend, plus the usual Willison shipments — but the frame is the features: marketing decks vs. what’s already on the record.

Claude Code’s “lean harness” pitch follows a quality meltdown

Source: ars-technica-ai · published 2026-05-15

TL;DR

- Anthropic’s April 23 postmortem admitted a reasoning-effort bug plus a caching regression inflated tokens by ~40% on identical tasks.

- New metered Agent SDK credits ($20 Pro / $200 Max 20x) finally split programmatic usage from the chat pool.

- Anthropic blocked third-party harnesses OpenCode and OpenClaw for spoofing the Claude Code client.

- A 52-run community benchmark found parallel “Agent Teams” mode costs 70%+ more with no quality gain.

The interview lands mid-cleanup, not mid-strategy

Cat Wu’s “we have no grand plan” framing for Claude Code reads very differently once you line it up against the last two months of Anthropic’s own disclosures. The April 23 engineering postmortem traced a six-week wave of “Claude got dumber” complaints to two concrete bugs: a hidden reasoning_effort default that silently downgraded the model to medium effort, and a caching regression that re-processed entire conversation histories — inflating token consumption by roughly 40% for identical tasks 1. That is not a philosophical posture about transparency; that is a vendor explaining why customers were paying more for worse output.

The metering changes Wu describes as user-friendly clarity are the operational fix. InfoWorld’s reporting on the new structure makes the split explicit: programmatic usage via the Agent SDK now draws from a dedicated monthly credit pool billed at standard API rates, rather than quietly competing with chat sessions on a Pro or Max subscription 2. That ends what was, in effect, a compute-arbitrage loophole — and it lines up suspiciously well with Anthropic’s parallel crackdown on third-party harnesses like OpenCode and OpenClaw, which VentureBeat reports were fingerprinted and blocked for spoofing the Claude Code client to tap subscription limits 3. “Lean harness” celebrates a minimal toolchain right up until someone else builds one.

The architectural bet is contested on both flanks

The “let the model decide” philosophy isn’t a settled industry view — it’s one side of a live fight.

| Position | Claim | Source |

|---|---|---|

| Anthropic | Role-specialized parallel agents beat single-agent baselines by 80%+ on hard tasks | 4 |

| Cognition (Walden Yan) | Multi-agent systems are fragile; context-sharing failures dominate | 4 |

| Community benchmark (n=52) | Claude Code’s own “Agent Teams” mode: +70% cost, no measurable quality gain; retry loops destroy correct code | 5 |

The community result is the awkward one. It’s not a competitor’s whitepaper — it’s 52 controlled runs of Anthropic’s own parallel feature finding that retries can regenerate entire files and overwrite previously correct sections 5. A “lean harness” that hands the model a hammer it uses to break working code is a design choice, not an absence of one.

Transparency has a security asterisk

The interview’s safety-by-default framing also needs CVE-2025-59536 next to it. The Hacker News documented that malicious repository-level config files could trigger remote code execution and exfiltrate a developer’s Anthropic API keys before Claude Code’s trust prompt ever rendered 6. Giving the model raw filesystem access is the whole product premise; a pre-prompt exfiltration path is exactly the failure mode that posture is supposed to prevent.

What’s actually being announced

Strip the philosophy and the substance of the Wu interview is a metering reset, an ecosystem boundary, and a quality apology repackaged as intentional minimalism. That’s a defensible product story — Anthropic genuinely did publish the postmortem, genuinely did decouple Agent SDK billing, and genuinely is shipping a coherent CLI. But “no grand plan” only sounds like humility if you skipped the last quarter. Read with the receipts, it sounds like a vendor that just spent six weeks being told its plan wasn’t working.

Abridge’s $5.3B scribe bet meets Epic and a consent suit

Source: latent-space · published 2026-05-14

TL;DR

- Abridge pitches 10–20 hours/week saved across 100M+ medical conversations on Latent Space

- Peer-reviewed analysis finds 44% of its hallucinations are clinically “major” — invented ECGs, misattributed family history

- Epic shipped native AI Charting in February 2026 after divesting its Abridge stake, threatening to Sherlock the category

- A $5.3B valuation on ~$110M ARR forces an existential pivot into prior-auth and RCM beyond the $1.6–2.1B scribe TAM

The founder pitch

On Latent Space, Janie Lee and Chai Asawa frame Abridge as the “operating system of healthcare”: 100M+ medical conversations, 250 health systems, 28 languages, and a “constellation of models” that treats the EHR as a filesystem for agents. The hero number is 10–20 hours/week of “pajama time” returned to clinicians. The headline product is prior authorization that runs against state-specific payer PDFs while the patient is still in the room, collapsing weeks of latency to minutes.

The technical story is genuinely interesting — fast/slow model routing, calibrated LLM judges, MD-engineers (“mutants”) sitting inside product, and one-way de-identification for HIPAA. But the episode is the founder narrative. The independent record adds three problems the conversation skips.

The accuracy gap is not cosmetic

A peer-reviewed npj Digital Medicine analysis of ~13,000 sentences logged a 1.47% hallucination rate — but 44% of those errors were “major,” including invented ECGs and mammograms, plus family-history symptoms misattributed to the patient 7. Practitioners on r/medicine are blunter, calling the output “clinical slop” and reporting fabricated exam findings and dropped negatives 8:

The A&P “sounds like a medical student.”

Abridge’s LFD (“Look at the F***ing Data”) and Linked Evidence stack are direct answers to this failure mode. The question is whether the residual tail is shrinking fast enough to outrun the lawsuit pipeline.

Epic is no longer a partner — it’s a competitor

The podcast treats EHR integration as pure tailwind. Business Insider described Abridge–Epic as “the messiest relationship in healthcare AI”: Epic divested its equity stake in 2025 while Abridge engineers were still on Epic’s campus co-developing features 9. In February 2026 Epic launched native AI Charting inside its “Art for Clinicians” suite, pulling context directly from the longitudinal record — HIT Consultant called it a “watershed moment” with explicit Sherlocking risk for ambient vendors 10. “EHR as filesystem for agents” reads differently when the filesystem owner ships a competing app with zero integration tax.

Valuation math demands the pivot

The June 2025 round implies roughly a 50x forward multiple on $100–117M contracted ARR against a physician-scribe TAM independent analysts size at $1.6–2.1B — barely larger than the valuation itself 11. That’s why prior auth and the broader $250B RCM market aren’t a roadmap item, they’re load-bearing.

And the legal overhang is sharper than the episode suggests. Washington v. Sutter Health, filed April 2026, alleges Abridge notes embedded boilerplate stating patients had been informed of and consented to recording — even when no such conversation occurred — implicating CIPA and federal wiretap statutes 12. A “consent hallucination” is the worst possible variant of the accuracy problem, because it fabricates the legal predicate for the entire product existing in the room.

The Abridge thesis can survive any one of these. It probably can’t survive all three compounding into the next funding cycle.

OpenAI recasts Codex as a sales-and-ops drafting agent

Source: openai-blog · published 2026-05-15

TL;DR

- OpenAI Academy shipped a 3-part Codex playbook covering sales, business ops, and data science roles.

- Google’s Gemini Enterprise Agent Platform already runs multi-day autonomous sales prospecting sequences.

- Anthropic’s public repo bundles 15 prebuilt agentic workflows wired into HubSpot, Salesforce, and QuickBooks.

- Codex agents already run OpenAI’s internal data platform — a proof point absent from the new playbooks.

A role-specific pivot, not a product launch

The Academy’s same-day drop of “How sales teams use Codex,” “How business operations teams use Codex,” and “How data science teams use Codex” is a positioning move, not a feature release. Codex began life as a coding agent; these guides reframe it as a drafting agent for initiative briefs, leadership decision packets, prospecting sequences, and analytical readouts. The throughline across all three is the same recipe: dump a dense context window from Slack/Docs/Sheets/CRM, run a “Starter Prompt” that forces the model to separate sourced facts from interpretation, then have a human pressure-test the result.

That’s a deliberate retreat from the autonomous-agent narrative OpenAI pushes elsewhere. Forbes reported in April that Codex agents are “running [OpenAI’s] data platform autonomously” 13 — a stronger internal case than anything in the Academy guides, which conspicuously stay in productivity-accelerator territory.

Catching up to Google and Anthropic

The competitive context explains the hedging. Rivals already shipped role-specific agents with infrastructure attached:

| Vendor | Shape of the offering | Integrations |

|---|---|---|

| Gemini Enterprise Agent Platform — long-running agents, multi-day sales sequences 14 | Agent Sandbox for browser automation | |

| Anthropic | Public financial-services repo — 15 prebuilt agentic workflows 15 | HubSpot, Salesforce, QuickBooks |

| OpenAI | Academy prose playbooks for 3 roles | Workspace, Slack, Gmail plugins (no prebuilt CRM templates) |

Google and Anthropic are shipping runnable code. OpenAI is shipping prompt patterns. For an ops lead deciding what to pilot in Q3, that’s a meaningful gap.

The trust gap nobody addresses

The same connectors that make the playbooks useful expand the attack surface. Researchers disclosed a now-patched Codex code-review vulnerability earlier this year that allowed attackers to steal GitHub credentials 16. None of the three guides discusses connector permissions, agent sandboxing, or audit trails — odd, given that the “Board and Company Progress Updates” use case has Codex reading financial models and drafting investor-facing copy.

flowchart LR

A[Slack / Gmail threads] --> B{Codex workspace agent}

C[Docs / Sheets / Slides] --> B

D[CRM + financial models] --> B

B --> E[Decision packets, board memos,<br/>prospecting sequences]

B -. credential / data<br/>exfiltration risk .-> F((External world))

The other unaddressed critique is epistemic. Practitioner Zack Proser argues Codex output is bounded by input quality: “a mediocre SME providing thin context will produce mediocre results, as AI models primarily pattern-match rather than reasoning from first principles” 17. The playbooks’ “What You Bring” framing quietly assumes the operator already has the synthesis judgment Codex is supposed to provide.

The story under the story

Independent commentary reads this drop as workforce reshaping, not prompt engineering. The “Copywriter Pattern” — non-technical staff shipping product changes without engineers — and the expectation that power users juggle 10+ parallel agentic tasks 18 both point at a quiet redefinition of what a junior AE, ops analyst, or data scientist is for. The Academy doesn’t say that out loud. It doesn’t have to: shipping playbooks for those exact three roles, in the same week, says it.

Further reading

- How sales teams use Codex — openai-blog

- How data science teams use Codex — openai-blog

Round-ups

datasette-llm-limits caps per-user LLM spend in Datasette

Source: simon-willison

A new alpha plugin lets Datasette admins enforce dollar-denominated LLM budgets per user or globally. Configuration uses a rolling-24h window with an amount_usd cap, working alongside datasette-llm and datasette-llm-accountant to track and curb runaway model spending inside multi-tenant deployments.

Ben’s Bites argues every app turns into a dev tool

Source: bens-bites

Agent-era software collapses the line between user and developer, the newsletter argues, as users increasingly configure, prompt, and wire up their own workflows. The takeaway: product teams should treat feedback loops and scripting surfaces as first-class features, not power-user afterthoughts.

Claude-built QR generator handles URLs and WiFi credentials

Source: simon-willison

Simon Willison shipped a browser-based QR code generator built with Claude’s help, supporting plain text, URLs, and WiFi network handoffs with SSID, password, and WPA security selection. Styling options include square format, optional border, size presets, and color choice.

Simon Willison ships inaturalist-clumper 0.1 for blog sightings

Source: simon-willison, simon-willison

The new 0.1 release groups iNaturalist observations into JSON clumps that feed Willison’s blog sightings pipeline, already running in production for several weeks. A companion post showing a Western Gull and Rock Pigeon spotted before PyCon demonstrates the output format in action.

Footnotes

-

Anthropic engineering postmortem (April 23, 2026) — https://www.anthropic.com/engineering/april-23-postmortem

↩A ‘reasoning effort’ bug defaulted models to a lower intelligence mode and a caching regression caused the model to re-process entire conversation histories, leading to ~40% token inflation for identical tasks.

-

↩Anthropic puts Claude agents on a meter across its subscriptions… programmatic usage including the Agent SDK transitions into a dedicated monthly credit system, billed at standard API rates rather than drawing from the general chat pool.

-

VentureBeat — https://venturebeat.com/technology/anthropic-cracks-down-on-unauthorized-claude-usage-by-third-party-harnesses

↩Anthropic cracks down on unauthorized Claude usage by third-party harnesses… blocking tools like OpenCode and OpenClaw that spoofed the Claude Code client to access more favorable subscription limits.

-

CTOL Digital — ‘AI leaders clash on agent architecture’ — https://www.ctol.digital/news/ai-leaders-clash-agent-architecture-cognition-anthropic-strategies/

↩ ↩2Cognition’s Walden Yan published ‘Don’t Build Multi-Agents,’ arguing multi-agent systems create fragile architectures due to poor context sharing… Anthropic countered that parallel role-specialized agents can outperform single-agent baselines by over 80% on complex tasks.

-

r/ClaudeAI — 52 controlled benchmarks — https://www.reddit.com/r/ClaudeAI/comments/1ss7f38/we_ran_52_controlled_benchmarks_on_claude_code/

↩ ↩2Claude Code’s ‘Agent Teams’ feature—which runs parallel sub-agents—was found to increase costs by over 70% without providing measurable gains in code quality; retry loops can actually degrade output by regenerating entire files and destroying previously correct sections.

-

The Hacker News — https://thehackernews.com/2026/02/claude-code-flaws-allow-remote-code.html

↩Claude Code flaws (CVE-2025-59536) allow remote code execution or API key theft via malicious repository-level configuration files… exfiltrating a developer’s Anthropic API keys before showing a trust prompt.

-

npj Digital Medicine study (PMC) — https://pmc.ncbi.nlm.nih.gov/articles/PMC12460601/

↩Analysis of nearly 13,000 sentences identified an overall hallucination rate of 1.47%, but 44% of those errors were ‘major’ — capable of affecting diagnosis or patient management, including invented ECGs/mammograms and misattributed family-history symptoms.

-

Reddit r/medicine / r/biotechmarketers practitioner threads — https://www.reddit.com/r/biotechmarketers/comments/1mm48l2/watch_the_ambient_ai_scribe_wars_abridge_vs/

↩Clinicians describe Abridge output as ‘clinical slop’ — the A&P ‘sounds like a medical student,’ the AI occasionally fabricates exam findings or ignores stated negatives, and time saved on drafting is often lost to intensive proofreading.

-

Business Insider — ‘messiest relationship in healthcare AI’ — https://www.businessinsider.com/inside-messiest-relationship-in-healthcare-ai-abridge-epic-2025-9

↩Epic divested its single-digit stake in Abridge in 2025 as it prepared to launch its own internal AI scribe, after Abridge engineers had spent significant time on Epic’s campus co-developing features.

-

HIT Consultant on Epic AI Charting launch — https://hitconsultant.net/2026/02/05/epic-releases-ai-charting-ambient-ai-market-implications/

↩Epic launched native AI Charting in February 2026 integrated directly into its ‘Art for Clinicians’ suite, pulling context from the longitudinal record — a ‘watershed moment’ that risks Sherlocking the ambient-AI market.

-

AICerts analysis of Abridge’s Series E — https://www.aicerts.ai/news/abridges-clinical-scribe-scale-evidence-and-business-risks/

↩The $5.3B valuation implies a ~50x forward multiple on ~$100–117M contracted ARR — ‘unhinged’ for a documentation tool when the physician-scribe TAM is estimated at only $1.6–2.1B, forcing a pivot into the $250B RCM market to justify the price.

-

Slashdot / Washington v. Sutter Health coverage — https://slashdot.org/story/26/04/13/0330204/californians-sue-over-ai-tool-that-records-doctor-visits

↩Plaintiffs allege Abridge’s AI-generated clinical notes often include boilerplate language stating the patient was informed of and consented to the recording, even in instances where no such conversation occurred — a ‘consent hallucination’ embedded in the medical record.

-

Forbes — Victor Dey — https://www.forbes.com/sites/victordey/2026/04/17/openai-says-codex-agents-are-running-its-data-platform-autonomously/

↩OpenAI says Codex agents are running its data platform autonomously

-

Google Cloud Blog — Gemini Enterprise Agent Platform — https://cloud.google.com/blog/products/ai-machine-learning/introducing-gemini-enterprise-agent-platform

↩Gemini Enterprise Agent Platform… long-running agents capable of autonomous sales prospecting sequences lasting several days

-

Anthropic financial-services GitHub repo — https://github.com/anthropics/financial-services

↩15 pre-built agentic workflows for sales, operations, and finance that integrate directly with HubSpot, Salesforce, and QuickBooks

-

BankInfoSecurity — https://www.bankinfosecurity.com/openais-coding-agent-flaw-exposed-github-passwords-a-31273

↩a now-patched vulnerability that allowed attackers to steal GitHub credentials through Codex’s code review feature

-

Zack Proser — OpenAI Codex Review 2026 — https://zackproser.com/blog/openai-codex-review-2026

↩a ‘mediocre SME’ providing thin context will produce mediocre results, as AI models primarily pattern-match rather than reasoning from first principles

-

Medium — ‘Are Codex and Claude becoming everything platforms for work?’ — https://medium.com/@ai_93276/are-codex-and-claude-becoming-everything-platforms-for-work-6789c47a7984

↩the ‘Copywriter Pattern,’ which allows non-technical staff to ship product changes without engineering intervention… successful users manage 10+ autonomous tasks simultaneously rather than perfecting a single prompt