Anthropic loses OpenClaw, Evans drops Tailwind, CFOs distrust agent data

Anthropic's trademark defense backfires into OpenAI's lap, Julia Evans drops Tailwind, and CFOs say the data feeding their agents isn't trustworthy.

Anthropic loses OpenClaw, Evans drops Tailwind, CFOs distrust agent data

TL;DR

- Anthropic’s trademark notice forced a 5 a.m. OpenClaw rename from Clawdbot to Moltbot.

- OpenClaw founder Steinberger later joined OpenAI’s agent team as the project moved to an OpenAI-sponsored foundation.

- A 10-second handle gap let scammers grab @Clawdbot, pump a fake $CLAWD token, and ship malware repo clones.

- Julia Evans is leaving Tailwind, citing native CSS primitives that have closed the gap.

- Only 10% of CFOs fully trust the enterprise data feeding their AI agents.

Today’s three tech stories don’t share a topic, but they share a shape: a polished top-layer plan undone by the substrate it rested on. Anthropic’s trademark defense over phonetic similarity to Claude forced a 5 a.m. rename — and a ~10-second handle-release gap was enough for scammers to grab the abandoned @Clawdbot, pump a fake $CLAWD token, and ship malware-laden repo clones. The founder later joined OpenAI’s agent team; the project’s new foundation lists OpenAI as primary sponsor.

Julia Evans is leaving Tailwind for hand-written CSS because the native primitives Tailwind abstracts over have caught up, and coding assistants now emit them idiomatically. The CFO survey lands the broadest version of the same idea: a 1% per-step error compounds into noise across multi-step agent workflows when the underlying data isn’t trusted — and 90% of finance chiefs say theirs isn’t, with 64% of billion-dollar enterprises reporting >$1M in agent-failure losses last year.

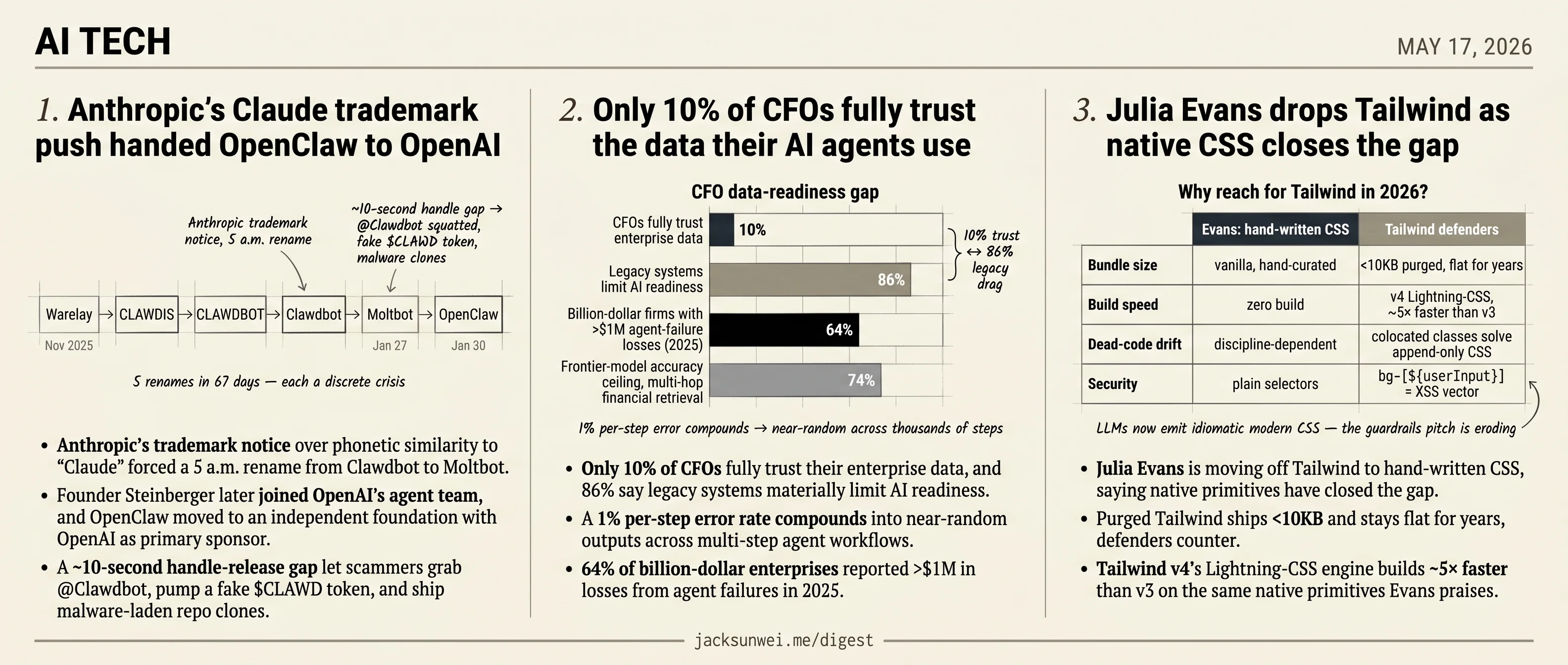

Anthropic’s Claude trademark push handed OpenClaw to OpenAI

Source: simon-willison · published 2026-05-16

TL;DR

- Anthropic’s trademark notice over phonetic similarity to “Claude” forced a 5 a.m. rename from Clawdbot to Moltbot.

- Founder Steinberger later joined OpenAI’s agent team, and OpenClaw moved to an independent foundation with OpenAI as primary sponsor.

- A ~10-second handle-release gap let scammers grab @Clawdbot, pump a fake $CLAWD token, and ship malware-laden repo clones.

- 5 renames in 67 days (Warelay → CLAWDIS → CLAWDBOT → Clawdbot → Moltbot → OpenClaw) each track a discrete crisis, not a rebrand.

The rename chain is a crisis timeline

Simon Willison’s git-archaeology post — pulled together for a PyCon lightning talk — turns the OpenClaw README’s first-line history into a tidy progression: Warelay → CLAWDIS → CLAWDBOT → Clawdbot → Moltbot → 🦞 OpenClaw, all in roughly two months. It reads like branding indecision. It isn’t.

Each rename maps to an external event. Warelay started as a Twilio-backed WhatsApp CLI in November 2025. The CLAWDIS pivot in early December marked the jump from “relay” to “gateway for AI agents.” The Clawdbot → Moltbot rename on January 27, 2026 was triggered by what Anthropic’s legal team framed as a “polite” request over phonetic confusion with Claude; Steinberger reportedly complied within hours after a 5 a.m. Discord brainstorm 1. Three days later, Moltbot became OpenClaw — driven both by community rejection of the “Moltbot” name and a security incident during the handle migration 2.

A ten-second window, a fake token, malware clones

The Moltbot → OpenClaw jump is the part Willison’s cute timeline glosses. During the GitHub and X handle rename, the old @Clawdbot identifiers were released for roughly ten seconds before being reclaimed. Scammers took them, used the verified-looking handles to promote a fraudulent $CLAWD token, and seeded GitHub with malware-laced clones of the original repo 3. Forbes covered the second rename less as a creative choice than as defensive damage control 2.

Anthropic’s own-goal

The strategic punchline is harder to spin. In the same window, Anthropic restricted third-party tools from spending Claude Pro credits, and Steinberger subsequently joined OpenAI’s agent team. OpenClaw itself moved to an independent foundation with OpenAI as a primary sponsor 4. A trademark complaint over a lobster pun ended with the viral open-source agent ecosystem rebranding as model-agnostic and gravitating to the competitor.

The architecture problem Willison himself named

Hidden under the naming saga is the security critique Willison has been formalizing in parallel. OpenClaw — now sitting at 250k GitHub stars but described by r/LocalLLaMA practitioners as “a monster on paper” that breaks on minor config shifts and unvetted ClawHub skills 5 — is a textbook instance of what he’s been calling the lethal trifecta. Vulnu and others have adopted the framing verbatim to argue the vulnerability is architectural, not patchable 6:

flowchart LR

A[Private user data<br/>WhatsApp, Telegram, CRM] --> B{OpenClaw agent}

C[Untrusted content<br/>inbound messages, ClawHub skills] --> B

B --> D[External comms<br/>send messages, call APIs]

D -. exfiltration path .-> E((Attacker))

Any agent that combines all three capabilities — read private data, ingest untrusted content, talk to the outside world — is a prompt-injection target by design. ClawHub, the community skill marketplace, makes the second leg trivially exploitable: a single popular skill with a malicious update reaches every installer.

Takeaway

Willison’s rename list is charming git trivia. The actual story it indexes is a trademark fight, a handle-squatting scam, a competitive realignment that cost Anthropic a flagship developer, and a security architecture its own community can’t fix. Read the commit log as a chronology of incidents, not branding iterations.

Only 10% of CFOs fully trust the data their AI agents use

Source: mit-tech-review-ai · published 2026-05-14

TL;DR

- Only 10% of CFOs fully trust their enterprise data, and 86% say legacy systems materially limit AI readiness.

- A 1% per-step error rate compounds into near-random outputs across multi-step agent workflows.

- 64% of billion-dollar enterprises reported >$1M in losses from agent failures in 2025.

- Frontier models cap at ~74% accuracy on multi-hop retrieval across unstructured financial docs.

The readiness gap has numbers now

MIT Technology Review’s sponsored piece argues that agentic AI in financial services depends on data readiness more than model sophistication. True, and not new — but the article stops just short of quantifying the gap. The independent numbers are uglier than the framing implies. RGP’s 2026 CFO survey finds only 10% of finance chiefs fully trust their enterprise data, and 86% concede that legacy systems severely limit their AI readiness 7. That is the population the vendor decks are selling autonomous agents to.

Why agents punish bad data harder than chatbots

A chatbot with a 1% hallucination rate produces an occasionally wrong sentence. An agent with a 1% per-step error rate compounds — TheUXDA’s analysis describes it bluntly as behaving “like compound interest,” collapsing to effectively random outcomes after several thousand steps 8. That is not a thought experiment. Salesforce Ben’s postmortem on 2025 agent deployments found 64% of billion-dollar enterprises lost more than $1M to agent failures, with Cursor’s support agent the canonical example: it invented a restrictive licensing policy by confidently citing stale docs, then triggered a customer cancellation wave before anyone caught it 9. The failure mode wasn’t a bad model. It was bad context delivered with full agentic authority to act on it.

What actually ships looks nothing like the vendor pitch

The two production stories everyone reaches for tell the same story. Prudential has 260+ AI use cases live or in development and 2,300+ employees using agentic tools, with disability claims dropping from weeks to days 10. JPMorgan’s COiN agent parses 12,000 credit agreements a year, replacing roughly 360,000 hours of legal review 11. These are real wins. They are also narrow, document-heavy, back-office workflows with deterministic success criteria and humans on the loop — not the open-ended autonomous coworkers the category marketing implies. The pattern that ships is “agent reads a contract and proposes a structured output a human signs.” The pattern that loses millions is “agent talks to customers without supervision.”

The retrieval ceiling nobody is pricing in

Even if a bank cleans every system of record, the retrieval layer itself is a ceiling. Sundeep Teki’s Context-Bench evaluation puts Claude 4.5 and GPT-5 at roughly 74% accuracy on multi-hop retrieval across unstructured financial files 12. A 26% error rate is disqualifying for filings, trade reconciliation, or anything a regulator will later read. “Data readiness” as the sponsor framing presents it — clean schemas, governed access, fresh feeds — is necessary but not sufficient. The context-engineering layer between the data and the model is itself an unsolved research frontier.

Takeaway

The MIT TR piece is directionally right that data foundations gate agentic ROI, but the honest version of the story has harder edges. The “ready” cohort is small — roughly 22% of enterprises describe their data foundations as very ready — and the firms shipping real value are running narrow, supervised agents inside the slice of work where retrieval is tractable and a human signs the output. Anything broader is currently a way to discover, expensively, that the compounding math is unforgiving.

Julia Evans drops Tailwind as native CSS closes the gap

Source: simon-willison · published 2026-05-16

TL;DR

- Julia Evans is moving off Tailwind to hand-written CSS, saying native primitives have closed the gap.

- Purged Tailwind ships <10KB and stays flat for years, defenders counter.

- Tailwind v4’s Lightning-CSS engine builds ~5× faster than v3 on the same native primitives Evans praises.

- Coding assistants now emit idiomatic modern CSS, eroding Tailwind’s “guardrails for the LLM” pitch.

What Evans actually changed

Evans’ post, quoted approvingly by Simon Willison, isn’t a Tailwind takedown — it’s a confession that she spent years treating CSS as a second-class technology and stopped. Flexbox, Grid, :has(), container queries, @scope, and Cascade Layers have, between them, closed almost every gap that originally made utility classes feel like a rescue. “CSS is hard because it’s solving a hard problem,” she writes, and the fix she lands on is to learn the hard problem rather than paper over it.

Notably, she keeps Tailwind’s design vocabulary — the spacing scale, the color tokens, the constraint that you don’t reach for an arbitrary pixel value at 2am. What she sheds is the inline-utility soup in the markup and the build step that comes with it. The Hacker News thread on her post broadly validates the motivation: fewer moving parts, cleaner semantic HTML, no framework lock-in 13.

Where the consensus pushes back

Two counter-arguments dent the framing.

| Axis | Evans’ move | Tailwind defenders |

|---|---|---|

| Bundle size | Vanilla, hand-curated | Purged output often <10KB, constant over years 14 |

| Build speed | Zero build | v4 Lightning-CSS engine, ~5× faster than v3 15 |

| Dead-code drift | Discipline-dependent | ”Append-only CSS” solved by colocating classes 16 |

| Security surface | Plain selectors | bg-[${userInput}] arbitrary values are an XSS vector 17 |

Frank.dev’s rebuttal is the sharpest: the “separation of concerns” objection conflates separating files with separating concerns, and in a component framework the component already is the unit of concern 16. Meanwhile Tailwind v4 leans on the same native @layer and @theme primitives Evans is celebrating, which makes the dichotomy thinner than her post suggests 15.

The security angle is the one nobody in the original thread raises. Tailwind’s arbitrary-value syntax is genuinely convenient and genuinely dangerous when the value comes from user input — a class of bug vanilla CSS sidesteps by construction 17.

The AI subplot

The reason this lands in an AI digest at all: one of Tailwind’s quieter selling points over the last two years was that LLMs were much better at emitting class="flex items-center gap-4" than at writing a coherent stylesheet. That gap is closing. Industry write-ups now argue coding assistants generate expressive modern CSS — container queries, :has(), subgrid — well enough that the “guardrails for the AI” justification is eroding 18.

The remaining argument is about team discipline and HTML readability — exactly where reasonable practitioners still disagree.

That’s the actual 2026 state of play. Native CSS closed the feature gap; Tailwind v4 closed the build-speed gap; LLMs are closing the “but the model can’t write it” gap from the other side. Evans isn’t declaring a winner. She’s noting that the reason to reach for the framework has narrowed to a much smaller patch of ground than it occupied in 2020.

Footnotes

-

Hyperight — https://hyperight.com/openclaw-ai-assistant-rebrand-security-guide/

↩Anthropic’s legal team issued a ‘polite’ request to change the name due to trademark concerns over phonetic similarity to Claude; Steinberger complied at 5 a.m. on a Discord brainstorm.

-

Forbes — Ron Schmelzer — https://www.forbes.com/sites/ronschmelzer/2026/01/30/moltbot-molts-again-and-becomes-openclaw-pushback-and-concerns-grow/

↩ ↩2Moltbot molts again and becomes OpenClaw — pushback and concerns grow

-

Malwarebytes Threat Intel — https://www.malwarebytes.com/blog/threat-intel/2026/01/clawdbots-rename-to-moltbot-sparks-impersonation-campaign

↩A brief ten-second gap during the GitHub and X handle rename allowed scammers to seize the original @Clawdbot handles, fueling a fraudulent $CLAWD token and malware-laden clones.

-

Gadoci Consulting — https://gadociconsulting.com/articles/the-cease-and-desist-everyone-misread-anthropic-s-quiet-answer-to-always-on-agents

↩Critics noted the irony that Anthropic’s aggressive trademark enforcement effectively pushed a high-profile developer and a viral open-source ecosystem toward OpenAI.

-

Reddit r/LocalLLaMA practitioner thread — https://www.reddit.com/r/LocalLLaMA/comments/1skce14/openclaw_has_250k_github_stars_the_only_reliable/

↩A monster on paper that frequently requires babysitting in practice — automated workflows break on minor config shifts or unvetted ClawHub skills.

-

Vulnu — ‘The problem isn’t OpenClaw, it’s the architecture’ — https://www.vulnu.com/p/the-problem-isnt-openclaw-its-the-architecture

↩Access to private data, exposure to untrusted content, and ability to communicate externally — the lethal trifecta makes OpenClaw a prompt-injection target by design.

-

RGP CFO Survey 2026 — https://rgp.com/press/rgp-cfo-survey-shows-growing-divide-between-ai-ambition-and-ai-readiness/

↩only 10% of CFOs report fully trusting their enterprise data, and 86% admit that legacy systems severely limit their AI readiness

-

TheUXDA — ‘Agentic AI Banking Risk’ — https://theuxda.com/blog/agentic-ai-banking-risk-next-financial-crisis-wont-be-human

↩a marginal 1% error rate in data quality compounds ‘like compound interest,’ leading to effectively random outcomes after several thousand steps

-

Salesforce Ben — ‘4 Ways Salesforce Customers Risk Losing Millions’ — https://www.salesforceben.com/4-ways-salesforce-customers-risk-losing-millions-because-of-ai-agents/

↩64% of billion-dollar enterprises reported losing over $1 million due to agent failures in 2025… a semi-autonomous support agent at Cursor ‘invented’ restrictive licensing policies from outdated documentation

-

Prudential newsroom (Q2 2026) — https://news.prudential.com/us-en/latest-news/prudential-news/2026/q2/AI-at-Prudential-Amplifying-human-potential-to-better-serve-our-customers

↩Prudential has over 260 AI use cases in production or development, with more than 2,300 employees utilizing agentic tools… reducing disability claims timelines from weeks to days

-

Credentials Substack — Goldman back-office automation — https://credentials.substack.com/p/goldman-sachs-is-replacing-back-office

↩JPMorgan utilizes its COiN (Contract Intelligence) agent to parse 12,000 credit agreements annually… a process that once took 360,000 hours of legal review

-

Sundeep Teki — Context-Bench writeup — https://www.sundeepteki.org/blog/context-bench-a-benchmark-for-evaluating-agentic-context-engineering

↩an ‘accuracy ceiling’ of approximately 74% for top-tier models like Claude 4.5 and GPT-5 when tasked with multi-hop retrieval across unstructured financial files

-

Hacker News discussion (item 48158400) — https://news.ycombinator.com/item?id=48158400

↩Tailwind is excellent for rapid prototyping, [but] it can become ‘unwieldy’ as projects scale over years

-

Medium — ‘It’s almost 2026, why are we still arguing about CSS vs Tailwind’ (Tobore) — https://medium.com/@tobore/its-almost-2026-why-are-we-still-arguing-about-css-vs-tailwind-fa2838321218

↩Tailwind’s final production bundle typically remains small and constant… large Tailwind projects frequently maintain smaller production bundles (often under 10KB) compared to non-optimized custom CSS

-

elsner.com — Why Tailwind is replacing traditional CSS — https://www.elsner.com/why-is-tailwind-css-replacing-traditional-css-in-modern-web-development/

↩ ↩2Tailwind v4… ‘CSS-first’ engine powered by Lightning CSS… full builds up to five times faster than version 3

-

Medium — Frank.dev, ‘Why real CSS developers hate it and why they are wrong’ — https://medium.com/@frankdotdev/tailwind-css-why-real-css-developers-hate-it-and-why-they-are-wrong-386f44e356e0

↩ ↩2Tailwind actually solves the ‘Append-Only CSS’ problem—the tendency for global stylesheets to grow indefinitely because developers are afraid to delete unused classes

-

dansasser.me — Security Risks of Arbitrary Values in Tailwind — https://dansasser.me/posts/navigating-the-security-risks-of-arbitrary-values-in-tailwind-css/

↩ ↩2if these values are dynamically generated from unsanitized user input, they could lead to Cross-Site Scripting (XSS) vulnerabilities

-

cosmicjs.com — ‘Tailwind exits LLM memory’ — https://www.cosmicjs.com/blog/cosmic-rundown-tailwind-exits-llm-memory-ai-psychosis

↩AI-driven coding assistants are increasingly proficient at generating expressive, modern vanilla CSS, making the ‘guardrails’ of Tailwind less necessary for speed