Bun ports to Rust, Granite tops sub-100M MTEB, Transformers ships async batching

Three unrelated infrastructure shipments today: Bun's LLM-driven Rust port, IBM Granite R2's open-licensed embedding lead, and Transformers' async batching catch-up.

Bun ports to Rust, Granite tops sub-100M MTEB, Transformers ships async batching

TL;DR

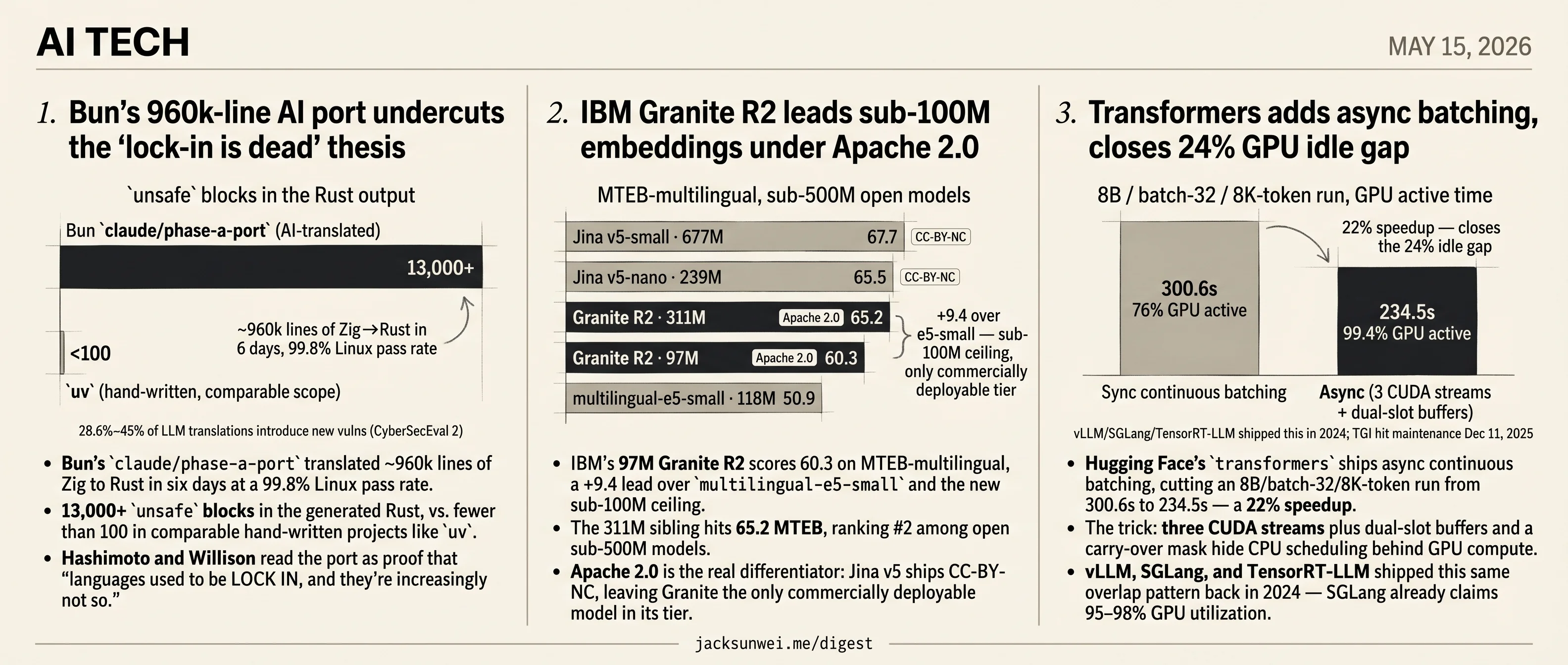

- Bun Codex-ports 960k Zig lines to Rust at 99.8% Linux pass with 13,000+ unsafe blocks.

- IBM Granite R2 scores 60.3 MTEB at 97M params under Apache 2.0, beating Jina v5’s CC-BY-NC.

- Transformers async batching cuts an 8B/batch-32 run 22%, copying vLLM’s 2024 overlap pattern.

- Datasette ships a Codex-built rate-limit plugin capping abusive crawlers at 60 reqs/60s.

- Boris Mann mocks ‘11 AI agents’ as a meaningless brag, like counting open browser tabs.

Today’s three tech stories don’t share a topic — a JavaScript runtime, an embedding model, and a serving library — but they share a measurement habit. Each release picks the baseline that makes the headline number land. Bun’s claude/phase-a-port posts a 99.8% Linux pass rate against the labor cost of a hand-rewrite, leaving the 13,000+ unsafe blocks the LLM emitted as the next maintainer’s problem. IBM’s Granite R2 leads MTEB at sub-100M, but the comparison that actually matters is the license: Apache 2.0 against Jina v5’s CC-BY-NC. Hugging Face’s transformers lands a 22% throughput win by adopting an overlap pattern vLLM and SGLang shipped back in 2024.

The round-ups echo the day’s preoccupation with the unit of work: a Codex-built Datasette plugin shipped in an afternoon, and two pointed jabs — from Boris Mann and Mo Bitar — at the AI-agent-counting school of marketing.

Bun’s 960k-line AI port undercuts the ‘lock-in is dead’ thesis

Source: simon-willison · published 2026-05-14

TL;DR

- Bun’s

claude/phase-a-porttranslated ~960k lines of Zig to Rust in six days at a 99.8% Linux pass rate. - 13,000+

unsafeblocks in the generated Rust, vs. fewer than 100 in comparable hand-written projects likeuv. - Hashimoto and Willison read the port as proof that “languages used to be LOCK IN, and they’re increasingly not so.”

- 28.6%–45% of LLM code translations introduce new vulnerabilities in independent benchmarks, mostly in input validation.

The thesis

Two posts dropped together this week and they’re being read as one argument. Hashimoto’s line — “Programming languages used to be LOCK IN, and they’re increasingly not so” — was provoked by Bun’s AI-driven port from Zig to Rust. Willison’s companion post adds a second data point: a mid-sized company that used coding agents to rewrite legendary native iOS and Android apps to React Native, with the explicit fallback that “if it turned out to be the wrong decision, they could just port back to native.”

The framing is that agents have collapsed the activation energy for language and framework migrations. Choices that used to dictate a decade of hiring and roadmap are now reversible bets. Block’s public write-up of an agent-driven Base Web → Fluent UI migration “without a flag day” suggests the same playbook is already running inside large engineering orgs, not just startup blog posts 1.

What the Bun case actually shows

The Bun port is the cluster’s load-bearing evidence, and it’s messier than the headline. Techzine reports the Zig→Rust move wasn’t purely a technical preference: Zig’s core team had rejected Bun’s parallelized semantic-analysis patches under a strict no-AI-code policy, and that governance friction is what made Rust’s ecosystem suddenly attractive 2. The “languages are fungible” thesis quietly imports a corollary — communities are also fungible, and you may exercise the option for social reasons, not economic ones.

The output is also structurally suspicious. Reviewers counted 13,000+ unsafe blocks in the generated Rust against fewer than 100 in mature Rust projects of similar scope, prompting “vibecoded” charges 3. The port passes tests; whether it is maintainable Rust is a separate question that won’t be answered for months.

Where the skeptics land

Practitioner pushback is sharper than either Willison or Hashimoto acknowledges. One widely-quoted Hacker News comment frames the risk as an

ontological difference between using code that is no longer understood by humans and deploying code that was never understood by any human in the first place 4

— with reviewer fatigue causing engineers to LGTM agent output they cannot audit. Academic benchmarks back the worry: method-level translation pass rates above 80% collapse once class-level cross-method dependencies are required, and passing translations frequently run orders of magnitude slower than the source 5. The security picture is worse: CyberSecEval 2 results find 28.6%–45% of LLM-translated code introduces new vulnerabilities, particularly in input-validation paths 6. Syntactic equivalence is not security equivalence.

The honest version of the claim

Initiation cost of a migration has genuinely fallen — that part of the Hashimoto/Willison thesis holds. Total cost has not. The Bun PR is the canonical specimen: shipped in six days, passes the suite, carries structural debt that may take longer to repay than the migration saved 34. Treat “lock-in is dead” as a directional shift in switching economics, not a settled fact about whether you should exercise the option this quarter.

Further reading

- Quoting Mitchell Hashimoto — simon-willison

IBM Granite R2 leads sub-100M embeddings under Apache 2.0

Source: huggingface-blog · published 2026-05-14

TL;DR

- IBM’s 97M Granite R2 scores 60.3 on MTEB-multilingual, a +9.4 lead over

multilingual-e5-smalland the new sub-100M ceiling. - The 311M sibling hits 65.2 MTEB, ranking #2 among open sub-500M models.

- Apache 2.0 is the real differentiator: Jina v5 ships CC-BY-NC, leaving Granite the only commercially deployable model in its tier.

- A known llama.cpp GGUF bug silently breaks multilingual text — the headline use case — by dropping

strip_accentspreprocessing.

What’s actually new

The R2 family swaps R1’s XLM-RoBERTa base for a ModernBERT encoder, which is what unlocks the 32K context window (a 64× jump from 512) via Rotary Position Embeddings and Flash Attention 2. The gain on long-context retrieval is the most concrete win in the release: the 311M model jumps +34.0 points on LongEmbed over R1, the 97M model +31.3. That’s the kind of delta you get from an architecture change, not from training tricks.

The other notable engineering choice is tokenization. The 311M ships with the Gemma 3 tokenizer (262K) and the 97M with a pruned GPT-OSS tokenizer (180K) — both chosen to keep the 32K window useful in scripts like Thai or Hindi where older multilingual tokenizers blow up token counts. Matryoshka training on the 311M lets you truncate 768-dim vectors to 256 dims for a 3× storage reduction at a 0.5-point quality cost, or 128 dims while keeping ~97% of retrieval quality.

Where it sits on the leaderboard

PremAI’s 2026 roundup puts the 311M at 65.2 MTEB-multilingual, behind Jina v5-small (67.7, 677M) and v5-nano (65.5, 239M) 7. Granite wins parameter efficiency, not absolute quality. Above that tier, Qwen3-Embedding-8B still dominates MTEB-v2 above 70.5, and BGE-M3 keeps the unique trick of producing dense, sparse, and ColBERT vectors in one pass — hybrid-search functionality Granite doesn’t match 8. On the Massive Legal Embedding Benchmark, the English R2 model placed #18 overall but did beat larger BGE-M3 and E5-Large, suggesting the data-curation story has real teeth in domain retrieval 9.

| Model | Params | MTEB-multi | License |

|---|---|---|---|

| Qwen3-Embedding-8B | 8B | 70.5+ | Apache 2.0 |

| Jina v5-small | 677M | 67.7 | CC-BY-NC-4.0 |

| Jina v5-nano | 239M | 65.5 | CC-BY-NC-4.0 |

| Granite R2 311M | 311M | 65.2 | Apache 2.0 |

| Granite R2 97M | 97M | 60.3 | Apache 2.0 |

| multilingual-e5-small | 118M | 50.9 | MIT |

The license is doing more work than the score. Jina v5’s CC-BY-NC-4.0 rules it out for most commercial RAG; Apache 2.0 makes Granite the default choice in that lane 7.

Deployment gotchas the post skips

The blog touts llama.cpp/GGUF support, but a known issue documents that GGUF conversion of ModernBERT-family models drops the strip_accents preprocessing step, mapping non-ASCII characters to [UNK] and silently breaking embeddings for exactly the multilingual workloads R2 is sold for 10. The same thread notes corrupted CUDA output at full 32K context and Ollama defaulting num_ctx to 4,096 — long documents get truncated unless you override it explicitly 10.

The model-merging pipeline IBM markets as a feature also has a recent scar: the companion R2 English reranker shipped with a defective merged checkpoint that returned scores near 1.0 for clearly irrelevant documents. IBM patched it after researchers traced the bug to the merged-model component 11. Worth pinning a known-good revision.

Takeaway

Granite R2 is a credible Apache-2.0 workhorse for latency-bound, compliance-sensitive RAG — not a SOTA embedder. If you need the absolute top of MTEB and can stomach the license or VRAM, Qwen3-8B and Jina v5 are still ahead. If you need a permissively licensed, sub-100M, 32K-context multilingual model that drops into Sentence-Transformers without instruction prefixes, this is now the obvious pick — once you’ve verified your tokenizer path actually works.

Transformers adds async batching, closes 24% GPU idle gap

Source: huggingface-blog · published 2026-05-14

TL;DR

- Hugging Face’s

transformersships async continuous batching, cutting an 8B/batch-32/8K-token run from 300.6s to 234.5s — a 22% speedup. - The trick: three CUDA streams plus dual-slot buffers and a carry-over mask hide CPU scheduling behind GPU compute.

- vLLM, SGLang, and TensorRT-LLM shipped this same overlap pattern back in 2024 — SGLang already claims 95–98% GPU utilization.

- TGI hit maintenance mode on Dec 11, 2025, so

transformersitself can no longer afford to be the slow path.

What actually changed

Standard continuous batching is stop-and-wait: the CPU samples tokens, updates the KV cache, and schedules the next step while the GPU sits idle, then the GPU runs the forward pass while the CPU sits idle. Profiling the synchronous path on an 8B model with batch 32 and 8K tokens shows the GPU idle for 24% of wall-clock time.

The new ContinuousBatchingAsyncIOs class kills that bubble with three coordinated pieces: separate CUDA streams for H2D transfer, compute, and D2H transfer, ordered with CUDA events; dual-slot input tensors so batch N+1 can be staged while batch N is still on the GPU; and a carry-over mask that lets the CPU prepare N+1 with placeholder zeros, then patches in N’s just-generated tokens via a small kernel captured inside the CUDA graph. Result: GPU active 99.4% of the time, total runtime 234.5s instead of 300.6s.

Prior art: this is transformers catching up

Framed honestly, this is table-stakes scheduling arriving in the PyTorch library two years late. vLLM’s own September 2024 retrospective measured early versions spending only 38% of H100 wall-clock on actual compute, with 29% lost to the scheduler and 33% to the HTTP server — the same bubble HF is now closing 12. SGLang’s Zero-Overhead Batch Scheduler ships an OverlapThread that “prepares the metadata for the next batch while the GPU executes the current forward pass,” pushing utilization to 95–98% 13. NVIDIA’s TensorRT-LLM Overlap Scheduler pipelines forward pass n+1 against CPU sampling of n and pays the exact same one-token latency tax that HF’s carry-over mask incurs 14.

The technique isn’t novel. The fact that it now lives in transformers itself is.

Why now: TGI is dead

Hugging Face placed Text Generation Inference into maintenance mode on December 11, 2025, and now explicitly routes production users to vLLM or SGLang 15. Read in that context, ContinuousBatchingAsyncIOs looks like part of a Transformers v5 strategy to fold what used to be TGI-only tricks directly into the core library, so the default path stops being embarrassing.

The post itself flags RL as the leading use case. That framing makes sense: rollout, reference-logprob inference, and actor updates are tightly coupled, which plausibly makes RL trainers the constituency least willing to bolt on a separate inference server. Independent async-GRPO benchmarks show 42% gains over veRL and 11× over standard TRL when those phases are overlapped 16 — the kind of headroom HF presumably wants reachable from transformers natively, though that strategic read is mine, not theirs.

Caveats the post glosses

Two things to flag. Dual-slot buffering doubles the I/O tensor footprint and pins CUDA-graph memory pools — fine on an H200, painful on a 24 GB consumer card. And the 22% headline assumes a CPU-bound regime; for memory-bandwidth-bound decode (small batches, large models, no MoE), the GPU is already stalled waiting on HBM reads and CPU/GPU overlap recovers very little 17. The 8B / batch-32 / 8K benchmark is close to the best case.

A solid engineering win — but the news is the strategic posture, not the kernel trick.

Round-ups

Codex vibe-codes Datasette rate-limit plugin to block crawlers

Source: simon-willison

Datasette.io was getting hammered by misbehaving crawlers, so Simon Willison had Codex (GPT-5.5 xhigh) build datasette-ip-rate-limit 0.1a0. The plugin reads a configurable header like Fly-Client-IP and blocks abusive IPs per-path — production rules cap demo databases at 60 requests per 60 seconds.

Boris Mann: ‘11 AI agents’ is a meaningless brag

Source: simon-willison

Counting agents tells you nothing about the work, Boris Mann argues on Bluesky. Saying you have 11 AI agents, he writes, conveys about as much as saying you have 11 spreadsheets or 11 browser tabs open — a jab at agent-count marketing.

Mo Bitar’s satirical ‘Ralph Loop’ pitch skewers AI hype careerism

Source: simon-willison

Want a promotion? Mo Bitar’s TikTok bit advises walking into the CEO’s office, asking for $18,000 in API credits to demo a ‘Ralph Loop,’ and tagging coworkers you’ve ‘automated’ in Slack. The joke lands because nobody, he notes, actually knows what they’re doing.

Footnotes

-

Block Engineering — Base Web → Fluent UI migration — https://engineering.block.xyz/blog/from-base-web-to-fluent-ui-without-a-flag-day

↩Block describes an agent-driven UI framework migration completed ‘without a flag day,’ showing the same playbook Simon’s RN anecdote points to is already running inside large engineering orgs — not just startups.

-

Techzine — ‘Bun takes a surprising step from Zig to Rust’ — https://www.techzine.eu/news/devops/141364/bun-takes-a-surprising-step-from-zig-to-rust/

↩The pivot followed the Zig core team’s rejection of Bun’s parallelized semantic-analysis contributions under a strict ‘no AI code’ policy for the compiler — a governance conflict, not just a technical preference, drove the language switch.

-

byteiota.com — Bun Zig→Rust rewrite analysis — https://byteiota.com/bun-zig-to-rust-rewrite-ai-agents-port-300k-lines/

↩ ↩2Bun’s claude/phase-a-port branch translated roughly 960,000 lines of Zig to Rust in about six days, hitting a 99.8% pass rate on Linux x64 — but reviewers counted over 13,000

unsafeblocks compared to fewer than 100 in mature Rust projects like uv, prompting critics to label the output ‘vibecoded’. -

Hacker News discussion (item 44740693) — https://news.ycombinator.com/item?id=44740693

↩ ↩2Skeptics describe an ‘ontological difference between using code that is no longer understood by humans and deploying code that was never understood by any human in the first place,’ warning of reviewer fatigue when engineers ‘LGTM’ agent output they cannot fully audit.

-

arXiv 2508.11468 — class-level code translation benchmark — https://arxiv.org/html/2508.11468v1

↩Method-level benchmarks like HumanEval show >80% pass@k, but class-level benchmarks (ClassEval-T, TRACY) reveal sharp drops once cross-method dependencies are required, and translations that pass functional tests are often orders of magnitude slower than the source.

-

Infosecurity Europe — LLM cybersecurity benchmarks summary — https://www.infosecurityeurope.com/en-gb/blog/future-thinking/top-8-llm-benchmarks-for-cybersecurity-practices.html

↩Studies using CyberSecEval 2 indicate 28.6%–45% of LLM-based code translations introduce new vulnerabilities, particularly in input-validation paths — syntactic equivalence is not security equivalence.

-

PremAI blog — ‘Best Embedding Models for RAG 2026’ — https://blog.premai.io/best-embedding-models-for-rag-2026-ranked-by-mteb-score-cost-and-self-hosting/

↩ ↩2Jina Embeddings v5-small (677M) achieves a state-of-the-art score of 67.7 on the Multilingual MTEB… IBM’s Granite Multilingual R2 (May 2026) focuses on extreme efficiency; its 311M-parameter version scores 65.2.

-

Atal Upadhyay — ‘Embedding Models for RAG: Top 5 Ranked’ — https://atalupadhyay.wordpress.com/2026/02/13/embedding-models-for-rag-top-5-models-ranked-benchmarked/

↩Qwen3-Embedding-8B currently leads major multilingual leaderboards, including MTEB-v2, with scores frequently exceeding 70.5… BGE-M3 remains the only ‘all-in-one’ workhorse that natively produces dense, sparse, and ColBERT-style representations in a single pass.

-

AIMultiple — Open-Source Embedding Models review — https://aimultiple.com/open-source-embedding-models

↩Independent testing on the Massive Legal Embedding Benchmark (MLEB) placed the Granite English R2 model at #18 overall, outperforming several larger models such as BGE-M3 and E5 Large in retrieval tasks.

-

llama.cpp GitHub issue #11282 — https://github.com/ggml-org/llama.cpp/issues/11282

↩ ↩2Loss of strip_accents preprocessing during GGUF conversion… causes non-ASCII characters (common in the R2’s 200+ supported languages) to be mapped to the [UNK] token, effectively breaking embeddings for multilingual text.

-

r/LocalLLaMA discussion on production embedding models — https://www.reddit.com/r/LocalLLaMA/comments/1pxtwn2/which_is_the_best_embedding_model_for_production/

↩Users reported that the granite-embedding-reranker-english-r2 model produced scores dangerously close to 1.0 even for clearly non-relevant documents, a behavior IBM researchers later attributed to a faulty ‘merged model’ component.

-

vLLM blog (perf update, Sept 2024) — https://vllm.ai/blog/2024-09-05-perf-update

↩Profiling of early vLLM versions indicated that on a single H100 GPU, only 38% of wall-clock time was spent on actual GPU computation… approximately 33% consumed by the HTTP API server and 29% by the scheduler.

-

turion.ai — vLLM vs SGLang 2026 — https://turion.ai/blog/vllm-vs-sglang-inference-comparison-2026/

↩SGLang’s Zero-Overhead Batch Scheduler… uses an OverlapThread that prepares the metadata for the next batch while the GPU executes the current forward pass, pushing GPU utilization toward 95–98%.

-

NVIDIA TensorRT-LLM architecture docs — https://nvidia.github.io/TensorRT-LLM/architecture/overview.html

↩The Overlap Scheduler launches the GPU forward pass for iteration n+1 immediately after pass n completes, without waiting for the CPU to process the results… it introduces a one-token latency lag in the pipeline as a trade-off for the massive throughput gain.

-

Prem AI — LLM inference servers compared 2026 — https://blog.premai.io/llm-inference-servers-compared-vllm-vs-tgi-vs-sglang-vs-triton-2026/

↩Hugging Face officially placed Text Generation Inference (TGI) into maintenance mode on December 11, 2025… For production workloads, Hugging Face now explicitly recommends migrating to vLLM or SGLang.

-

Hugging Face — async RL training landscape — https://huggingface.co/blog/async-rl-training-landscape

↩Independent benchmarks of the Async-GRPO architecture show efficiency gains of up to 42% over veRL and 11x over standard TRL by overlapping rollout, reference logprob inference, and actor training.

-

Medium — throughput/latency tradeoff in LLM inference — https://medium.com/better-ml/throughput-latency-tradeoff-in-llm-inference-part-iii-4e96fa9753af

↩Since the decode phase of LLMs is often memory-bandwidth bound rather than compute-bound, overlapping compute with communication may yield negligible gains if the GPU is already idling while waiting for weights to stream from VRAM.