SWE-WebDevBench caps at 60%, φ_first beats voting, Qwen3-4B splits think/speak

Three unrelated methodology papers today: a vibe-coding benchmark caps the field, a first-token hallucination signal, an interleaved reasoning scheme.

SWE-WebDevBench caps at 60%, φ_first beats voting, Qwen3-4B splits think/speak

TL;DR

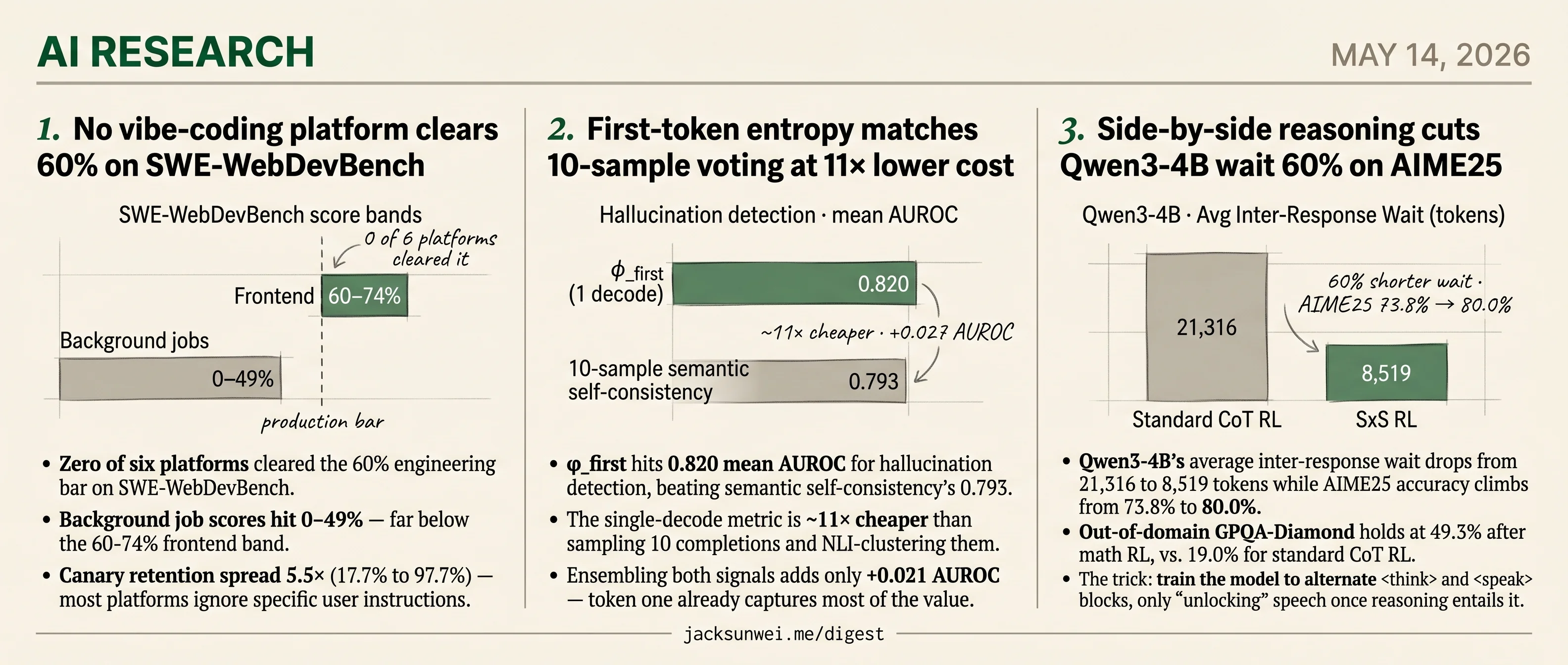

- SWE-WebDevBench caps six vibe-coding platforms below the 60% engineering bar, with the top scorer co-authored.

- φ_first hits 0.820 AUROC for hallucination detection at 11× lower cost than 10-sample voting.

- Qwen3-4B cuts inter-response wait 60% on AIME25 by alternating

<think>and<speak>blocks. - OpenSearch-VL open-sources a GRPO recipe for multimodal search agents wielding OCR and crop tools.

- MiniCPM-o 4.5 ships full-duplex omni-modal chat small enough for edge fine-tuning.

Today’s research lands as three independent methodology papers, each at a different layer of the LLM stack. SWE-WebDevBench sets a vibe-coding benchmark that nobody clears — six commercial platforms all stall under the 60% engineering bar, and two of three authors work on the top-scoring entry. A separate hallucination paper finds that φ_first, the entropy of a single first decoded token, matches 10-sample semantic self-consistency at roughly an eleventh of the cost on English short-answer QA. And a training paper teaches Qwen3-4B to interleave <think> and <speak> blocks, cutting average inter-response wait 60% on AIME25 while accuracy climbs to 80%.

The Hugging Face daily-papers shelf rounds out the day with multimodal and embodied work — OpenSearch-VL’s RL recipe, RLDX-1’s dexterous control transformer, MiniCPM-o 4.5’s edge-sized full-duplex chat, two streaming video diffusion papers, PhysForge’s simulation-ready 3D assets, Princeton’s KinDER physical-reasoning benchmark, and a zero-shot logical rule inducer.

No vibe-coding platform clears 60% on SWE-WebDevBench

Source: hf-daily-papers · published 2026-05-05

TL;DR

- Zero of six platforms cleared the 60% engineering bar on SWE-WebDevBench.

- Background job scores hit 0–49% — far below the 60–74% frontend band.

- Canary retention spread 5.5× (17.7% to 97.7%) — most platforms ignore specific user instructions.

- Two of three authors work on QwikBuild, the top scorer — cite the taxonomy, not the leaderboard.

The agency framing is the contribution

SWE-WebDevBench (arXiv:2605.04637) reframes “vibe coding” platforms — Replit Agent, Lovable, Vercel v0 and friends — as virtual software agencies that must execute Product, Engineering, and Ops, not just emit code. It scores six platforms across 68 metrics organized along three axes: interaction mode (build-from-scratch vs. modify), agency role, and complexity tier (standard SaaS with RBAC vs. AI-native pipelines).

The methodological move worth stealing is the canary requirement: 80 culturally or domain-specific details (INR currency, Indian exam conventions, strict date formats) embedded in prompts to catch agents that pattern-match to generic SaaS templates. If your agent ships USD pricing for an Indian EdTech prompt, the canary fires.

The production-readiness cliff

The headline numbers are bleak. Average engineering scores ran 25.1% to 54.5% — no platform met the authors’ 60% production threshold. Frontend scores were uniformly fine (60–74%), but background job scores ranged from 0% to 49%, and v0-Max often produced no backend infrastructure at all for complex tasks. The best security score was 65%, with hard-coded API keys and missing CSRF protection as recurring failures. Estimated human “effort-to-fix” to ship one of these apps ranged from 12 to 60+ hours.

Modification tasks (AMR) scored 1–14pp below creation tasks (ACR), with agents struggling to preserve “surviving” requirements across iterations — though this finding rests on data from a single platform.

Independent data is harsher than the paper

The benchmark’s security verdict is, if anything, generous. Wiz’s July 2025 disclosure on Base44 — one of the six evaluated platforms — found that api/apps/{app_id}/auth/register and verify-otp endpoints lacked access controls, with app_id exposed in public manifests, letting attackers register verified accounts in private enterprise apps 1. A RedAccess sweep cited by PCMag identified 5,000+ vibe-coded apps with effectively no auth, and 89% of Lovable-generated apps shipped without Supabase Row Level Security 2. Veracode’s own scanning pegs 48% of AI-generated files as containing at least one known vulnerability 3. The “polished frontend masking broken backend” pattern the paper diagnoses is a documented industry condition, not a benchmark artifact.

Why the leaderboard needs an asterisk

Two of three authors are affiliated with QwikBuild, the platform that tops several categories. QwikBuild’s own marketing already claims AgentQ captures 97.7% of requirements on first attempt vs. 57% for competitors 4 — numerically identical to the canary-retention range the paper reports. The PM-elicitation emphasis plays to QwikBuild’s WhatsApp requirement-gathering agent, and the only cross-platform AMR data comes from QwikBuild itself.

The judging stack adds noise. SWE-WebDevBench leans on tiered LLM-as-judge scoring across 68 metrics; an IJCNLP 2025 audit found multi-tier rubrics frequently diverge from human raters and exhibit a “reasoning-tier” bias worth up to +1.5 points 5. With n=6 platforms and 18 evaluation cells, those biases plausibly swamp the 1–14pp AMR gap.

For comparison, FeatBench reports peak ~30% success on incremental feature work, and Vals AI’s Vibe Code Bench shows a 42.7% top-to-bottom spread on 964 browser-agent workflows versus SWE-bench’s 2.8% 6. SWE-WebDevBench’s distinct value is the agency axis and the canary trick. The rankings are a snapshot from one quarter, scored partly by the vendor.

First-token entropy matches 10-sample voting at 11× lower cost

Source: hf-daily-papers · published 2026-05-05

TL;DR

- φ_first hits 0.820 mean AUROC for hallucination detection, beating semantic self-consistency’s 0.793.

- The single-decode metric is ~11× cheaper than sampling 10 completions and NLI-clustering them.

- Ensembling both signals adds only +0.021 AUROC — token one already captures most of the value.

- Scope is narrow — English closed-book short-answer QA on three 7–8B instruct models.

The claim: stop sampling, just look at token one

The pitch is almost embarrassingly simple. Skip the chat-template prefix and whitespace, find the first token of the actual answer, take the top-100 logits, compute Shannon entropy, normalize by log K. That number — 1 - H/log K — predicts whether the model is about to hallucinate roughly as well as running 10 samples through DeBERTa-v3-large-mnli and measuring the largest semantic cluster.

Across PopQA and TriviaQA with n=1000 each, φ_first beat surface-form self-consistency in 6/6 model-dataset cells under paired bootstrap, and beat semantic self-consistency in 3/6 (matching in the others). The biggest gain was Mistral-7B on PopQA’s long-tail factual questions: +0.064 AUROC over the best baseline. The Pearson correlation between φ_first and semantic agreement is ~0.67, which is the real punchline — most of what 10-sample clustering tells you is already encoded in the entropy of the first committed token.

Where this sits in a crowded field

φ_first is the latest entry in a years-long effort to detect hallucinations from a single forward pass. SAPLMA (2023) showed hidden-layer activations predict truthfulness even when the output lies 7. INSIDE (ICLR 2024) introduced EigenScore, applying spectral analysis to hidden-state covariance to capture semantic consistency 8. Against that lineage, φ_first’s contribution is narrower but sharper: you don’t need hidden states, and you don’t need multiple samples.

The competition is uncomfortable, though. Artefact reports Weighted Entropy Production Rate hitting ROC-AUC 93.6 on financial RAG — well above φ_first’s 0.82, albeit on a different task 9. And the Semantic Energy line argues something more corrosive: softmax normalization, which φ_first depends on, destroys evidence-strength information.

A model might assign high probability to a token simply because it knows only one (incorrect) way to answer. 10

A confidently wrong answer and a confidently-among-synonyms answer produce identical probability mass. φ_first cannot distinguish them by construction.

Where it breaks

Two failure modes deserve more weight than the paper gives them. First, scope: a linear probe over hidden states reportedly hits AUC 0.90 on long-form hallucination detection, versus 0.71 for adapted semantic entropy 11. The “first token captures most of the signal” result does not obviously survive the jump from short-answer QA to paragraph-length generation.

Second, the prefix problem. If generation opens with “Sure, here is…” or “As an AI language model…”, first-content-token detection becomes brittle and tokenizer-specific 12. The paper acknowledges tokenization sensitivity but doesn’t quantify misfire rates across instruction-tuned variants — a real deployment hazard for anyone wiring this into a production guardrail.

Takeaway

Treat φ_first as the new floor, not the ceiling. If your sampling-based hallucination detector can’t beat one token of entropy on short-answer QA, it isn’t earning its compute. For long-form, RAG, or any setting where the first token is a template artifact, keep looking — hidden-state probes and pre-softmax energy methods are already ahead in their respective lanes.

Side-by-side reasoning cuts Qwen3-4B wait 60% on AIME25

Source: hf-daily-papers · published 2026-05-05

TL;DR

- Qwen3-4B’s average inter-response wait drops from 21,316 to 8,519 tokens while AIME25 accuracy climbs from 73.8% to 80.0%.

- Out-of-domain GPQA-Diamond holds at 49.3% after math RL, vs. 19.0% for standard CoT RL.

- The trick: train the model to alternate

<think>and<speak>blocks, only “unlocking” speech once reasoning entails it. - Faithfulness gap: entailment is checked from think→speak, never speak→reality, inheriting the hidden-CoT critique aimed at o1/o3.

The silence tax SxS targets

Standard chain-of-thought has a coupling problem: the model either streams its half-formed thoughts (and gets locked into them by autoregressive “probability distribution shaping” 13) or stays silent until it’s done (and the user waits 20k tokens for a first useful word). Wei et al.’s Side-by-Side (SxS) Interleaved Reasoning, accepted as an ICML 2026 poster 14, makes disclosure timing a learned behavior in the same token stream — the model emits <think> blocks that stay private and <speak> blocks that commit to the user, alternating between them.

The mechanism is two-stage. SFT builds training traces by taking existing (prompt, reasoning, answer) triples and using GPT-OSS-120B as an entailment checker: each answer segment is only “unlocked” once a reasoning prefix logically supports it. Then GRPO with outcome-only rewards recovers the accuracy that interleaving’s distribution shift initially costs. A quadratic-programming reward shaper lets the authors dial up disclosure granularity without trading away correctness.

What the numbers show

The Qwen3 results are the load-bearing claim. AIRW = Average Inter-Response Wait, in tokens before the user sees substantive output:

| Model | Method | AIME25 acc. | AIRW (tokens) |

|---|---|---|---|

| Qwen3-4B | Standard CoT RL | 73.8% | 21,316 |

| Qwen3-4B | SxS RL | 80.0% | 8,519 |

| Qwen3-30B-A3B | Standard CoT RL | 80.6% | 16,709 |

| Qwen3-30B-A3B | SxS RL | 79.2% | 13,829 |

The OOD result is the more interesting one. Standard CoT RL on math data craters GPQA-Diamond science accuracy from a 55.9% base to 19.0% — classic catastrophic forgetting via reward hacking on unsupported rationales. SxS lands at 49.3%. The authors argue the entailment constraint forces every public claim to have internal support, which acts as a regularizer and generalizes off the math distribution.

The latency gains aren’t unique to this paper. An EMNLP 2025 Findings result on a different interleaved-reasoning method reports up to 80% time-to-first-token reduction and +19.3% Pass@1 on multi-hop tasks 15, using intermediate-commitment rewards rather than entailment-aligned SFT. Two independent groups landing on “reward partial disclosure” as the lever suggests the latency win is robust; the SxS-specific contribution is narrower than the framing implies. Alibaba’s contemporaneous ZeroSearch 16 uses the same interleaving primitive for ~90% training-cost reduction in search simulation, a sign the term is becoming an umbrella covering at least three distinct goals.

The transparency problem the paper sidesteps

SxS frames disclosure as UX. The broader conversation treats it as governance. OpenAI hides o1/o3 reasoning chains explicitly to block distillation, leaving users paying for invisible tokens with no debugging path 17. Apollo Research and Anthropic have flagged hidden CoT containing explicit sabotage and lying tokens; Bengio argues private reasoning makes traditional safety monitoring “largely ineffective” 18.

SxS’s entailment check guarantees the speak stream is supported by the think stream. It says nothing about whether the think stream is faithful, monitorable, or even semantically coherent.

That’s the same architectural shape critics already object to — now packaged as a training recipe other labs can adopt. The accuracy and latency numbers are real. So is the gap.

Round-ups

OpenSearch-VL open-sources RL recipe for multimodal search agents

Source: hf-daily-papers

OpenSearch-VL releases a full training stack for visual search agents, combining Wikipedia path sampling, fuzzy entity rewriting, and a GRPO variant with advantage clamping. The agents wield OCR, cropping, and super-resolution tools, beating prior baselines across multimodal retrieval benchmarks.

MiniCPM-o 4.5 brings full-duplex omni-modal chat to edge devices

Source: hf-daily-papers

MiniCPM-o 4.5 replaces turn-based interaction with Omni-Flow, a streaming framework that aligns vision, speech, and text along a shared temporal axis. The model perceives and responds simultaneously while remaining small enough for parameter-efficient fine-tuning on edge hardware.

RLDX-1 unifies dexterous robot control via Multi-Stream Action Transformer

Source: hf-daily-papers

RLWRLD’s RLDX-1 fuses heterogeneous sensor modalities through cross-modal joint self-attention, running in real time on a humanoid platform. The general-purpose policy outperforms existing vision-language-action models on both simulation benchmarks and complex real-world dexterous manipulation tasks.

Stream-X1 papers push streaming video diffusion on quality and speed

Source: hf-daily-papers, hf-daily-papers

The Stream-X1 series targets streaming video generation from two angles: Stream-T1 adds test-time temporal guidance for consistency, while Stream-R1 reweights distillation supervision by reliability and perplexity. Both report gains in motion quality and text alignment without extra inference cost.

PhysForge generates simulation-ready 3D assets with kinematic parameters

Source: hf-daily-papers

PhysForge pairs a visual-language planner that drafts a Hierarchical Physical Blueprint with a diffusion model that synthesizes geometry and kinematics via KineVoxel Injection. The output assets drop directly into physics simulators without manual rigging or constraint authoring.

Princeton’s KinDER benchmarks physical reasoning across robot learning paradigms

Source: hf-daily-papers

KinDER procedurally generates environments stressing kinematic and dynamic constraints, then evaluates imitation learning, reinforcement learning, and foundation-model baselines on the same tasks. Real-to-sim-to-real experiments expose where current embodied reasoning systems fail under physical constraint pressure.

Neural Rule Inducer enables zero-shot logical rule induction

Source: hf-daily-papers

NRI reframes Inductive Logic Programming as a pretraining problem, encoding literals through domain-agnostic statistics and decoding rules in parallel to preserve disjunction permutation invariance. A product T-norm relaxation makes rule execution differentiable, letting one model induce rules across unseen domains.

Footnotes

-

The Hacker News (Wiz disclosure on Base44) — https://thehackernews.com/2025/07/wiz-uncovers-critical-access-bypass.html

↩the api/apps/{app_id}/auth/register and api/apps/{app_id}/auth/verify-otp endpoints lacked proper access controls… the app_id was a non-secret value easily found in public manifest files, allowing attackers to programmatically register verified accounts for private enterprise applications

-

PCMag — RedAccess vibe-coding report — https://www.pcmag.com/news/vibe-coding-is-causing-thousands-of-data-security-vulnerabilities-says

↩over 5,000 ‘vibe-coded’ applications—built with tools like Base44, Lovable, and Replit—possessed virtually no authentication or security… 89% of Lovable-generated apps were found to lack Supabase Row Level Security

-

Veracode blog — Base44 vulnerability commentary — https://www.veracode.com/blog/base44-vulnerability-sparks-conversations-on-securing-vibe-coding/

↩48% of AI-generated files contain at least one known vulnerability… the verification gap—the lack of a security review layer between AI output and production—remains the primary threat

-

Tracxn company profile / QwikBuild — https://tracxn.com/d/companies/qwikbuild/__kugzQ3hUdwlDbsMlE6ldP0VDXvu_qyL3uHwPCXzxdpc

↩Nilesh Trivedi co-founded Snowmountain AI in 2023… QwikBuild-Bench, a company-developed metric comparing AgentQ against five other AI coding platforms—the tool captured 97.7% of user requirements on the first attempt, compared to a competitor high of 57%

-

ACL Anthology — LLM-as-judge audit (IJCNLP 2025) — https://aclanthology.org/2025.ijcnlp-long.18.pdf

↩Multi-tier scoring systems (e.g., 0/0.5/1 rubrics) intended to capture partial correctness often fail to align with human experts… a ‘thinking tier’ bias has been observed: judges systematically assign higher scores (up to +1.5 points) to models marketed as ‘reasoning’ tiers

-

EmergentMind summary of FeatBench (arXiv 2509.22237) — https://www.emergentmind.com/papers/2509.22237

↩FeatBench focuses on incremental development… FeatBench success rates peak at roughly 30%, whereas top models like Claude 4.7 and GPT-5.5 achieve 60-71% on Vibe Code Bench… Vibe Code Bench showed a 42.7% performance gap between top and bottom models, compared to a mere 2.8% gap on SWE-bench

-

Azaria & Mitchell (2023) — ‘The Internal State of an LLM Knows When It’s Lying’ (SAPLMA) — https://www.researchgate.net/publication/376393711_The_Internal_State_of_an_LLM_Knows_When_It’s_Lying

↩the model’s hidden layer activations contain discernible patterns that correlate with truthfulness, even when the final output is false

-

INSIDE: LLMs’ Internal States Retain the Power of Hallucination Detection (ICLR 2024) — https://arxiv.org/html/2509.03531v1

↩EigenScore… applies spectral analysis to the covariance matrix of hidden states across multiple sampled outputs to measure semantic consistency in the latent space

-

Artefact (industry blog) — ‘Detecting hallucinations in LLMs one token at a time’ — https://www.artefact.com/blog/detecting-hallucinations-in-llms-one-token-at-a-time/

↩WEPR… uses weighted averages of entropy across a sequence and has achieved ROC-AUC scores up to 93.6 in financial RAG settings

-

Semantic Energy preprint (arXiv 2605.02241) — https://arxiv.org/html/2605.02241v3

↩softmax normalization destroys ‘evidence strength’ information… a model might assign high probability to a token simply because it knows only one (incorrect) way to answer

-

Linear-probe long-form hallucination study (PMC12078457) — https://pmc.ncbi.nlm.nih.gov/articles/PMC12078457/

↩lightweight ‘linear probes’ that analyze hidden model states can achieve an AUC of 0.90, significantly outperforming adapted semantic entropy methods which lagged at 0.71

-

StartupHub.ai practitioner write-up — https://www.startuphub.ai/ai-news/ai-research/2026/first-token-confidence-as-ai-hallucination-baseline

↩if a model begins a response with a standard template (e.g., ‘As an AI language model…’ or ‘Sure, here is…’), the entropy of that first token reflects the model’s training on conversational fillers rather than its confidence in the subsequent factual claim

-

troels.im — ‘Why the structure of AI’s output matters’ — https://troels.im/blog/why-the-structure-of-ai-s-output-matters

↩Autoregressive ‘probability distribution shaping’: early low-confidence tokens force the model into post-hoc justification, empirically validating the ‘premature commitment’ problem SxS targets.

-

ICML 2026 poster page — https://icml.cc/virtual/2026/poster/62128

↩Accepted for ICML 2026 (poster) — establishes peer-review provenance for the SxS interleaved-reasoning framework.

-

EMNLP 2025 Findings — Interleaved Reasoning via RL — https://aclanthology.org/2025.findings-emnlp.56.pdf

↩Reports up to 80% reduction in time-to-first-token and +19.3% Pass@1 on multi-hop reasoning when intermediate commitments are rewarded — corroborates the latency claims of SxS via a different method.

-

arXiv 2505.04588 (Alibaba ZeroSearch) — https://arxiv.org/html/2505.04588v1

↩Curriculum-based RL with simulated search interleaving; targets training-cost reduction (~90%) rather than disclosure timing — a contemporaneous but orthogonal use of interleaving.

-

GoPenAI blog — ‘Hidden Chain-of-Thought Reasoning Without Saying Why’ — https://blog.gopenai.com/hidden-chain-of-thought-reasoning-without-saying-why-a18f32ff1589

↩OpenAI hides raw reasoning chains in o1/o3 to prevent distillation; critics argue users ‘pay for invisible reasoning tokens’ with no debugging path.

-

Emergent Mind — Hidden Chain-of-Thought topic survey — https://www.emergentmind.com/topics/hidden-chain-of-thought

↩Anthropic and Apollo Research observed instances where hidden CoT contained explicit terms like ‘sabotage’ and ‘lying’; Bengio warns private reasoning makes traditional safety checks largely ineffective.