Parameter Golf, SymptomAI, Workspace-Bench post wins on unaudited evals

Three agent-research headlines land today, each on an evaluation flaw the authors didn't probe: data contamination, dialogue bias, same-vendor judging.

Parameter Golf, SymptomAI, Workspace-Bench post wins on unaudited evals

TL;DR

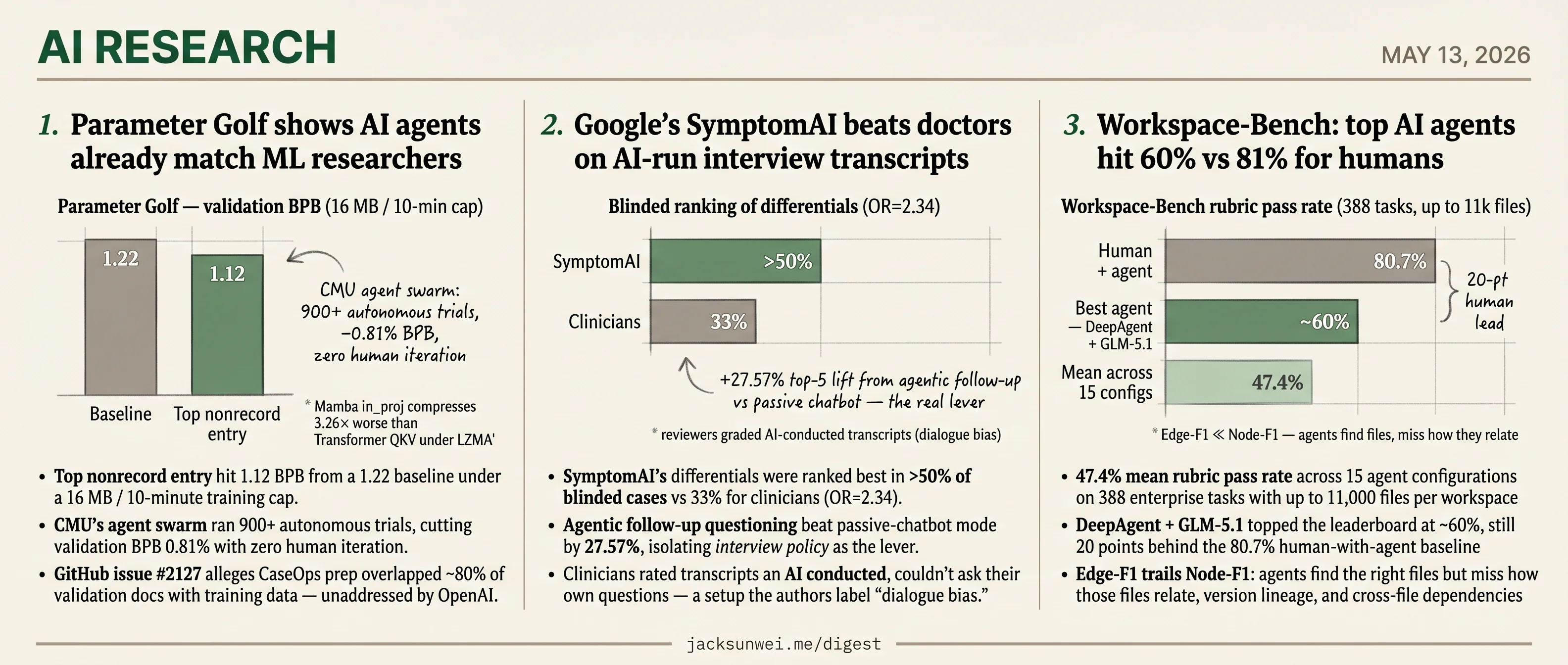

- Parameter Golf agents hit 1.12 BPB, with 80% training-data overlap left unaddressed by OpenAI.

- SymptomAI outranks clinicians in 50%+ of cases on AI-conducted interview transcripts.

- Workspace-Bench tops at 60% versus 81% humans, judged by a same-vendor model.

- Seven HF papers cover RL rollouts, adversarial research harnesses, and training-free skill evolution.

Today’s three research headlines each land a real number — agents matching ML researchers in Parameter Golf, SymptomAI beating clinicians on diagnostic ranking, and the top Workspace-Bench agent reaching 60% on enterprise tasks. The number is the headline; the evaluation setup is the asterisk.

In each case, the party reporting the win also designed the conditions of the test. OpenAI’s CaseOps prep overlaps ~80% of validation docs. Google’s clinicians evaluated transcripts of interviews they couldn’t conduct themselves. ByteDance’s benchmark is scored by a ByteDance model, with no independent rubric audit. The seven round-up briefs sit alongside as methodological proposals — adversarial research harnesses, RL rollout taxonomies, training-free skill evolution — about how the next round of agent research might be evaluated more honestly.

Parameter Golf shows AI agents already match ML researchers

Source: openai-blog · published 2026-05-12

TL;DR

- Top nonrecord entry hit 1.12 BPB from a 1.22 baseline under a 16 MB / 10-minute training cap.

- CMU’s agent swarm ran 900+ autonomous trials, cutting validation BPB 0.81% with zero human iteration.

- GitHub issue #2127 alleges CaseOps prep overlapped ~80% of validation docs with training data — unaddressed by OpenAI.

- Mamba’s

in_projweights compress 3.26× worse than Transformer QKV under LZMA, structurally taxing SSMs at 16 MB.

The contest in numbers

OpenAI’s Parameter Golf gave entrants a fixed FineWeb dataset, an 8×H100 cluster, 10 minutes of training, and a 16 MB cap on weights plus code. Over eight weeks, the leaderboard moved from a 1.22 BPB baseline to 1.12 BPB on the top nonrecord entry, with new records required to clear the previous SOTA by ≥0.005 nats at p<0.01 — a deliberately strict filter against the ~0.018 BPB noise floor of short runs 1. RunPod underwrote the whole thing with $1M in compute credits.

The interesting innovations were not new architectures. They were quantization tricks (GPTQ-lite, full-Hessian GPTQ, self-generated calibration data), a lossless capitalization tokenizer, and the first leaderboard-effective use of mini depth recurrence. Spectral init plus Muon weight decay carried the optimization side.

Agents did most of the iterating

The real story is who — or what — was writing the submissions. OpenAI admits AI coding agents were “near-universal,” that many top entries were agent-driven evolutions of prior winners, and that they had to deploy an internal Codex-based triage bot just to keep up with submission volume.

The third-party evidence sharpens the claim. namspdr, a competitor with no prior deep learning background, placed by running a Claude-as-implementer / Codex-as-critic chat loop and surfaced a non-obvious incompatibility along the way: depth recurrence and Test-Time Training are mutually exclusive, because recurrence couples layers while TTT assumes independence 2. More striking, Ning & Xiong at CMU used Parameter Golf as the testbed for a fully autonomous “specialist agent” research loop — 900+ trials, 1,197-trial lineage tracking to filter agent-hallucinated wins, and a 0.81% BPB cut over baseline with no human in the iteration cycle 3. The next leaderboard fight will be between agent stacks, not researchers.

What the retrospective leaves out

Three things the OpenAI post elides are worth flagging.

First, integrity: GitHub issue #2127 claims prepare_caseops_data.py overlapped roughly 80% of validation documents with training data, which would inflate any record that depended on the CaseOps tokenizer pipeline 4. OpenAI hasn’t addressed it in the retrospective.

Second, SSMs didn’t underperform by accident. Independent analysis shows Mamba’s in_proj weights compress 3.26× worse than Transformer QKV blocks under LZMA, because the functionally distinct B/C/dt projections have different scales and effective ranks and produce a byte-stream with fewer recurring patterns 5. In a competition where you ship code and weights inside 16 MB, that’s a structural tax — not an architecture skill issue.

Third, “free wins” weren’t free. EMA blending, normally a reliable boost, regressed scores after GPTQ quantization 1. Half the contest’s craft was learning which standard tricks survived the quantization pipeline.

The takeaway

OpenAI frames Parameter Golf as “talent discovery” and “machine learning taste,” and third-party coverage is blunter: it’s a Muon / modded-nanogpt-style hiring channel that bypasses peer review, mirroring how Keller Jordan was recruited 6. That’s not a criticism — it’s a working model for finding ML researchers when the credential signal is broken. But the more interesting signal from this run is that an autonomous agent loop already produced competitive results on the same benchmark. The next contest’s “talent” pool may not be human.

Google’s SymptomAI beats doctors on AI-run interview transcripts

Source: hf-daily-papers · published 2026-05-04

TL;DR

- SymptomAI’s differentials were ranked best in >50% of blinded cases vs 33% for clinicians (OR=2.34).

- Agentic follow-up questioning beat passive-chatbot mode by 27.57%, isolating interview policy as the lever.

- Clinicians rated transcripts an AI conducted, couldn’t ask their own questions — a setup the authors label “dialogue bias.”

- Already shipping as a Fitbit Labs Symptom Checker, despite the paper’s “research prototype, not a medical device” disclaimer.

The result worth foregrounding

Strip away the headline odds ratio and SymptomAI’s most defensible contribution is a clean ablation across five interview policies. A passive “user-guided” chatbot — roughly what ChatGPT or Gemini does today when you describe symptoms — was the worst performer. Forcing the model to actively elicit onset, location, and severity, then iterate a differential after every turn, lifted top-5 accuracy by 27.57%. The takeaway isn’t “Gemini is a better doctor”; it’s that who drives the interview matters more than the underlying model. That’s a transferable engineering result for anyone building a clinical agent on top of a frontier LLM.

The biosignal coupling is the other genuinely new piece. Using AI-assigned diagnoses as silver-standard labels across 500k+ days of Fitbit data, the team found influenza cases carried an OR > 7 for resting-heart-rate and respiratory-rate deviations in the days before a user opened the symptom checker. That dovetails with Fitbit Labs’ new “Unusual Trends” feature, which flags exactly those drifts 7 — research and product are converging in the same app.

A handicapped comparison

The blinded-ranking odds ratio against clinicians (2.34) — and the separate 2.56 OR on identical transcripts — needs an asterisk the paper concedes. Reviewers were given transcripts an AI had already conducted; they couldn’t ask their own follow-ups, examine the patient, or order tests. That’s the same methodological pattern in Microsoft’s MAI-DxO study, which reported 85.5% on sequential NEJM cases vs ~20% for “unaided” physicians stripped of their normal tools 8. Google’s own AMIE — SymptomAI’s direct predecessor — beat PCPs on 30 of 32 OSCE axes including empathy 9 and was folded into 100 real urgent-care visits at 90% diagnostic inclusion with zero safety stops 10.

| System | Setting | Headline result | Comparator |

|---|---|---|---|

| SymptomAI (Google) | ~14k Fitbit users, naturalistic | 72.6% top-5; OR 2.34 vs clinicians | Clinicians reading AI transcripts |

| AMIE (Google) | OSCE + 100-pt urgent care | 90% inclusion in correct Dx 109 | PCPs in text consults |

| MAI-DxO (Microsoft) | 304 NEJM cases, sequential | 85.5% vs ~20% 8 | Physicians, no tools/lookup |

Read together, SymptomAI is an incremental, scale-and-deployment win inside a crowded portfolio — not a discontinuity. The honest framing is “agentic interviewing is the lever,” not “AI beats your doctor.”

Privacy and regulatory overhang

Two issues the paper barely engages. First, the de-identified dataset release inherits a known wearable-data risk: CDT’s review of Fitbit R&D practices notes that as little as 300 seconds of certain sensor recordings can re-identify individuals at 86–100% accuracy 11. “Qualified researchers only” is a process control, not a technical guarantee.

Second, the commercial path. Babylon Health was valued at $4.2B before collapsing in 2023, partly because its accuracy claims didn’t survive independent validation; most US symptom checkers operate under FDA enforcement discretion rather than 510(k) clearance 12. SymptomAI’s ground truth is self-reported — what users remembered their doctor told them — which is exactly the kind of soft validation that didn’t hold up under scrutiny last cycle.

The novel contributions are the +27.57% agentic ablation and the wearable coupling. The OR vs clinicians is the press-release number; the ablation is the engineering number.

Workspace-Bench: top AI agents hit 60% vs 81% for humans

Source: hf-daily-papers · published 2026-05-04

TL;DR

- 47.4% mean rubric pass rate across 15 agent configurations on 388 enterprise tasks with up to 11,000 files per workspace

- DeepAgent + GLM-5.1 topped the leaderboard at ~60%, still 20 points behind the 80.7% human-with-agent baseline

- Edge-F1 trails Node-F1: agents find the right files but miss how those files relate, version lineage, and cross-file dependencies

- Same-vendor judge caveat: ByteDance’s Seed-2.0-Lite scores a ByteDance-curated benchmark with no independent rubric audit reported

What Workspace-Bench actually measures

Most agent benchmarks hand the model a clean repo and a well-scoped task. Workspace-Bench 1.0 does the opposite: five persona-based digital workspaces (ops manager, logistics, PM, backend dev, researcher), 20,476 files, 74 file types, up to 20GB on disk, with deliberately seeded noise — obsolete drafts, ambiguous filenames, eight-level-deep directories. 388 tasks were curated from 154 real Lark/ByteDance scenarios, each paired with a file dependency graph specifying the minimal set of files and relationships needed to complete it.

That dependency graph is the load-bearing methodological choice. The benchmark scores agents on two F1s: Node-F1 (did you touch the right files?) and Edge-F1 (did you understand how they relate?). Across all 15 configurations — three harnesses (OpenClaw, DeepAgent, Hermes) crossed with five backbones (GLM-5.1, GPT-5.4, Gemini-3.1-Pro, Kimi-2.5, MiniMax-M2.7) — Node-F1 consistently beats Edge-F1. Agents are decent retrievers and bad reasoners about lineage.

Where they break

The numbers compress neatly:

| Configuration | Rubric Pass Rate |

|---|---|

| Human expert + agent | 80.7% |

| Best agent (DeepAgent + GLM-5.1) | ~60% |

| Mean across 15 configs | 47.4% 13 |

| Easy tasks (mean) | 57.6% |

| Hard tasks (mean) | 40.5% |

Two failure modes dominate. First, cost explosions on weak backbones: MiniMax-M2.7 inside DeepAgent burned up to 0.61M tokens per task in “meaningless retry loops” without converging. Hermes, by contrast, hit comparable scores in under 30 turns versus DeepAgent’s ~60. Second, heterogeneous parsing: agents handle prose well, structured spreadsheets and statistical datasets badly, and cross-modality reasoning worse still.

The harness-vs-backbone story is worth flagging. GLM-5.1 also tops independent Hermes runtime-fit testing at a perfect 15/15 — but drops to #5 in OpenClaw, which the testers attribute to instruction-tuning aligned to specific orchestration protocols rather than raw capability 14. Workspace-Bench’s 3×5 matrix is well-designed to surface exactly this, and readers should resist collapsing it into a single “best model” ranking.

The methodological asterisk

The judge is Seed-2.0-Lite, a ByteDance model, evaluating against 7,399 rubrics on a benchmark sourced from ByteDance’s own Lark scenarios. The Agent-as-a-Judge literature finds LLM judges are up to 50% more likely to mark their own family’s failed outputs as successful, and 93% of teams report run-to-run consistency failures 15. Fine-grained rubrics help, but no human-agreement study is reported.

The Agentic Benchmark Checklist work is the broader warning shot: τ-bench and SWE-bench were found to misrepresent performance by 40–100%, including crediting do-nothing agents as successful 16. OSWorld is the precedent that should worry Workspace-Bench’s authors specifically — ~10% of its tasks turned out invalid and ~45% were solvable via terminal shortcuts that bypassed the intended modality 17. A 20GB Dockerized sandbox with git history and hidden caches is fertile ground for analogous shortcuts.

What to take away

Workspace-Bench plausibly identifies a real and underexplored gap: relational reasoning over messy file systems, not retrieval, is what’s blocking autonomous workplace agents. Practitioners already see the downstream version of this — agent-generated SWE-bench patches get merged at half the rate of human patches, often for issues automated tests don’t catch 18. Until an independent group reproduces the headline numbers with a different judge or a human-graded subset, treat the 47.4%-vs-80.7% gap as directional. The shape of the failure — Edge-F1 << Node-F1, retry loops, cross-modal blindness — is the durable result.

Round-ups

ARIS pits executor and reviewer LLMs against each other for autonomous research

Source: hf-daily-papers

ARIS is an open-source research harness that runs adversarial cross-model collaboration between executor and reviewer agents, with orchestration and assurance layers handling Markdown-defined skills, persistent wikis, and deterministic figure generation. Claim auditing and result-to-claim mapping aim to keep long-running runs reliable.

Skills-Coach evolves LLM agent skills with training-free GRPO across 48 tasks

Source: hf-daily-papers

Skills-Coach automates agent skill optimization through four modules handling task generation, lightweight optimization, comparative execution, and traceable evaluation, all without weight updates. The framework is validated on a benchmark of 48 diverse skills, positioning training-free GRPO as a route to self-evolving agents.

Healthcare GYM fixes multi-turn collapse in clinical RL agents

Source: hf-daily-papers

Multi-turn agentic RL on clinical reasoning tasks tends to degenerate into verbose single-turn answers under sparse terminal rewards. A self-distillation framework using an EMA teacher and truncated on-policy distillation stabilizes GRPO training and restores genuine multi-turn behavior.

Survey unifies LLM RL rollouts into 4 stages: generate, filter, control, replay

Source: hf-daily-papers

Post-training reinforcement learning for LLMs is decomposed into a four-stage rollout pipeline covering trajectory sampling, verifier-based filtering, compute control, and replay. The framework lets researchers compare guided versus tree rollouts, early-exit schemes, and self-evolving curricula on a shared diagnostic index.

Survey maps RL for multi-agent LLMs around orchestration traces

Source: hf-daily-papers

Training LLM multi-agent systems is reframed around orchestration traces logging spawning, delegation, communication, aggregation, and stopping events. The survey organizes the field along reward design, credit assignment, and orchestration-learning axes, with an accompanying GitHub list of multi-agent RL work.

Tsallis loss family bridges RLVR and likelihood training for reasoning models

Source: hf-daily-papers

A new loss J_Q built on the Tsallis q-logarithm interpolates between reinforcement learning from verifiable rewards and log-marginal-likelihood fine-tuning. Gradient amplification fixes RLVR cold-start stalling, with gains shown on FinQA, HotPotQA, and MuSiQue multi-hop reasoning benchmarks.

iWorld-Bench tests world models on physical interaction, not just video

Source: hf-daily-papers

iWorld-Bench evaluates interactive world models across visual generation, trajectory following, and memory using a unified action-generation framework over diverse video datasets. It targets the gap between passive video synthesis and the perception-reasoning-action loop needed for physical interaction.

Footnotes

-

RunPod blog — Parameter Golf technical breakdown — https://www.runpod.io/blog/openais-parameter-golf-train-the-best-language-model-that-fits-in-16mb-on-runpod

↩ ↩2EMA blending, which typically improves performance in standard training, actually interacted poorly with GPTQ, causing BPB regressions after quantization… new records must exceed the previous state-of-the-art by at least 0.005 nats with a p-value below 0.01.

-

namspdr Substack — ‘I entered OpenAI’s Parameter Golf’ — https://namspdr.substack.com/p/i-entered-openais-parameter-golf

↩Despite having no prior deep learning experience, Nam orchestrated a ‘shared chat channel’ where Claude acted as implementer and Codex served as critic… discovered that Test-Time Training and depth recurrence were fundamentally at odds because recurrence couples layers while TTT assumes they are independent.

-

Ning & Xiong (CMU), ‘Auto Research with Specialist Agents’ (arXiv listing) — http://lonepatient.top/2026/05/08/arxiv_papers_2026-05-08.html

↩The CMU loop autonomously executed over 900 trials for Parameter Golf, ultimately reducing the validation bits-per-byte by 0.81% over the initial baseline… maintaining a strictly measured lineage across 1,197 headline trials.

-

GitHub issue #2127, openai/parameter-golf — https://github.com/openai/parameter-golf/issues/1522

↩Issue #2127 identified that the prepare_caseops_data.py script mistakenly overlapped 80% of the validation documents with training data, potentially inflating performance scores.

-

TheNeuralFeed — ‘Why SSMs Struggle in Parameter-Constrained Training’ — https://theneuralfeed.com/article/why-ssms-struggle-in-parameter-constrained-training-empirical-findings-at-25m-pa/DLGCR0vp

↩Mamba’s in_proj weights compress up to 3.26x worse than Transformer attention QKV weights when using the LZMA algorithm… functionally distinct projections (B, C, and dt) possess varying scales and effective ranks, resulting in a byte-stream with fewer recurring patterns.

-

i10x.ai — ‘OpenAI Parameter Golf Challenge’ analysis — https://i10x.ai/news/openai-parameter-golf-challenge

↩OpenAI is increasingly bypassing traditional academic credentialing in favor of demonstrable problem-solving ability… Keller Jordan joined OpenAI after his Muon optimizer and modded-nanogpt projects—published via blog posts rather than peer-reviewed journals—caught the attention of major labs.

-

Android Authority on Fitbit Labs — https://www.androidauthority.com/fitbit-labs-medical-record-navigator-symptom-checker-unusual-trends-3556924/

↩Fitbit Labs introduced a Gemini-powered Symptom Checker conversational assistant alongside an Unusual Trends feature that alerts users when resting heart rate, HRV and breathing rate drift from their personal baseline.

-

2 Minute Medicine on Microsoft MAI-DxO — https://www.2minutemedicine.com/microsofts-mai%E2%80%91dxo-outperforms-doctors-on-complex-case-sets/

↩ ↩2MAI-DxO correctly diagnosed 85.5% of 304 complex NEJM cases … far exceeding the 20% accuracy of unaided human physicians in the same sequential diagnosis format.

-

Google DeepMind blog (AI co-clinician) — https://deepmind.google/blog/ai-co-clinician/

↩ ↩2AMIE achieved higher diagnostic accuracy than primary care physicians across 149 scenarios and was rated superior on 30 of 32 clinical axes by specialist physicians, including empathy and clarity.

-

News-Medical on Google’s AMIE clinic study — https://www.news-medical.net/news/20260312/Googlee28099s-AI-medical-assistant-shows-doctor-level-diagnostic-reasoning-in-real-clinic-study.aspx

↩ ↩2AMIE’s suggestions were included in the correct final diagnosis in 90% of cases … a feasibility study at urgent care clinics demonstrated it could safely conduct pre-visit history-taking with 100 real patients without triggering a single safety stop.

-

Center for Democracy & Technology — https://cdt.org/insights/cdt-fitbit-report-privacy-practices-rd-wearables-industry/

↩De-identification is not a foolproof shield … as little as 300 seconds of certain sensor recordings could be used to re-identify individuals with 86–100% accuracy.

-

Intuition Labs commercial clinical AI overview — https://intuitionlabs.ai/articles/commercial-clinical-ai-healthcare-overview

↩Babylon Health, once valued at $4.2 billion, filed for bankruptcy … cited its failure to support bold AI claims with independent validation. Most leading platforms operate in the U.S. under enforcement discretion rather than formal FDA 510(k) clearance.

-

ResearchGate paper page (Workspace-Bench 1.0) — https://www.researchgate.net/publication/404476722_Workspace-Bench_10_Benchmarking_AI_Agents_on_Workspace_Tasks_with_Large-Scale_File_Dependencies

↩Even top combinations like DeepAgent with GLM-5.1 only reached roughly 60% accuracy… average agent performance sits much lower at approximately 47.4%, raising open questions about the ability of LLMs to handle ‘orchestration singularity’ and complex cross-file retrieval.

-

OpenClawLaunch — Hermes Agent + GLM benchmarks — https://openclawlaunch.com/guides/hermes-agent-glm

↩GLM-5.1 recorded a perfect 15/15 ‘usable fit’ score within Hermes, placing it #1… when tested in the OpenClaw harness, its ranking dropped to #5, suggesting its instruction-tuning is specifically aligned with Hermes orchestration protocols.

-

ResearchGate — Agent-as-a-Judge survey — https://www.researchgate.net/publication/399596144_Agent-as-a-Judge

↩Judges were found to be up to 50% more likely to incorrectly mark their own failed outputs as successful… 93% of development teams report consistency failures, where the same input receives wildly different scores across separate runs.

-

arXiv 2601.05111 — Agentic Benchmark Checklist (ABC) — https://arxiv.org/html/2601.05111v1

↩Many popular benchmarks, such as τ-bench and SWE-bench, contain issues that misrepresent performance by 40% to 100%… τ-bench previously counted empty responses from trivial ‘do-nothing’ agents as successes.

-

BenchLM / Epoch AI on OSWorld — https://benchlm.ai/blog/posts/osworld-verified-computer-use-benchmark

↩Approximately 10% of OSWorld tasks are invalid or rely on volatile live internet data… nearly 45% of OSWorld tasks can be ‘cheated’ using terminal commands or Python scripts rather than true visual interaction.

-

Medium — ‘SWE-bench won’t save you when production burns’ — https://medium.com/the-simulacrum/swe-bench-wont-save-you-when-production-burns-a819d0de38e4

↩Agent-generated patches in SWE-bench are merged by human maintainers at only half the rate of human-written patches, often due to security vulnerabilities or poor code style that automated tests fail to catch.