MolmoAct2 tops GPT-5, Meta clears CWM on lax evals, Stanford caps counting at 2K

Two vendor releases rest on evals that flatter their models, while a Stanford probe caps real counting capacity across 126 LLMs.

MolmoAct2 tops GPT-5, Meta clears CWM on lax evals, Stanford caps counting at 2K

TL;DR

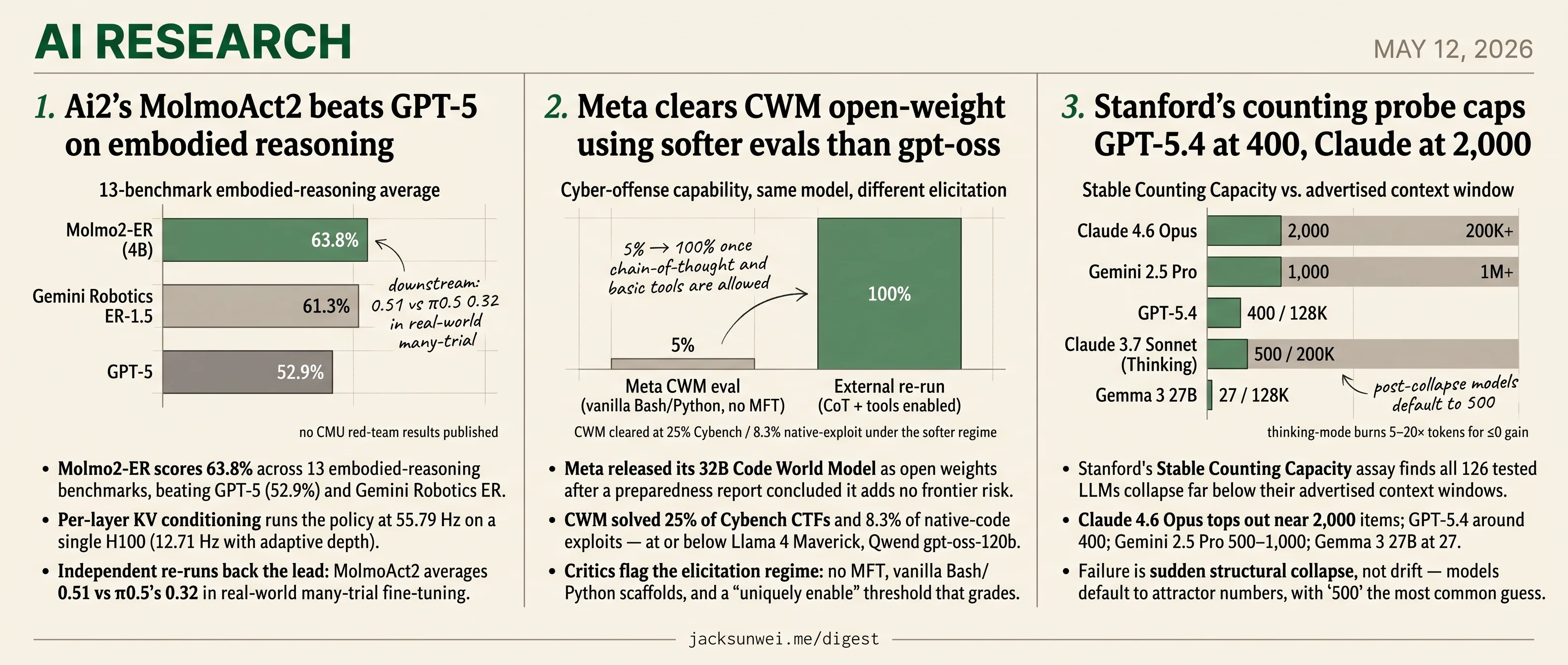

- Molmo2-ER scores 63.8% on 13 embodied benchmarks, beating GPT-5 and Gemini Robotics ER-1.5.

- Ai2 skipped the CMU safety protocol that broke every prior LLM-driven robot.

- Meta cleared 32B CWM for open-weight release using vanilla scaffolds and a uniquely-enable threshold.

- Stanford’s counting probe caps Claude 4.6 Opus at 2,000 items and GPT-5.4 at 400.

- Reasoning tokens don’t help counting, burning 5–20× more compute for negligible gains.

Two of today’s research headlines come from vendors choosing the eval. Ai2’s MolmoAct2 posts a real lead on 13 embodied-reasoning benchmarks and survives independent re-runs — then skips the CMU safety protocol that has broken every prior LLM-driven robot. Meta’s 32B CWM ships as open weights after a preparedness report run on vanilla Bash/Python scaffolds with no multi-finetune elicitation, and follow-up self-play RL has already pulled +10 points out of the same checkpoint.

The third comes from outside the labs. Stanford’s Stable Counting Capacity assay tests 126 models against the context windows their cards advertise and finds sudden structural collapse far below those numbers — 2,000 items for Claude 4.6 Opus, 400 for GPT-5.4, 27 for Gemma 3 27B. Reasoning mode burns 5–20× the tokens for nothing. The brief pool extends the same theme: HiL-Bench, WindowsWorld, PhysicianBench, and T²PO each measure something the vendor scorecards leave out.

Ai2’s MolmoAct2 beats GPT-5 on embodied reasoning

Source: hf-daily-papers · published 2026-05-03

TL;DR

- Molmo2-ER scores 63.8% across 13 embodied-reasoning benchmarks, beating GPT-5 (52.9%) and Gemini Robotics ER-1.5 (61.3%).

- Per-layer KV conditioning runs the policy at 55.79 Hz on a single H100 (12.71 Hz with adaptive depth).

- Independent re-runs back the lead: MolmoAct2 averages 0.51 vs π0.5’s 0.32 in real-world many-trial fine-tuning.

- No red-team results published against the CMU safety protocol that broke every prior LLM-driven robot.

The headline number

The Allen Institute’s pitch is that you can have an open VLA and state-of-the-art embodied reasoning. Their 4B Molmo2-ER backbone hits 63.8% averaged over 13 benchmarks, ahead of GPT-5 at 52.9% and Gemini Robotics ER-1.5 (Thinking) at 61.3%. Downstream, MolmoAct2-DROID lands an 87.1% zero-shot success rate on the Franka DROID setup — 38.7 absolute points over the next-best open model — and the Think variant pushes LIBERO to 98.1%.

Independent reviewers reproduce the ordering. Cortex AI’s third-party evaluation (relayed via Pebblous) clocks MolmoAct2 at 0.51 average success in real-world many-trial fine-tuning, versus 0.32 for π0.5 and 0.16 for Cosmos Policy, and roughly doubles π0.5 on MolmoBot (20.6% vs 10.3%) 1. SiliconANGLE adds the kind of deployment detail the paper skips: pilots inside Stanford’s CRISPR gene-editing labs, where the model handled sample movement and equipment operation 2.

The architecture trick

Most VLAs bolt an action head onto the VLM’s final hidden state and throw away everything earlier. MolmoAct2 attaches a 36-layer DiT-style action expert that cross-attends to the keys and values of every corresponding VLM layer, then trains it with flow matching to denoise continuous trajectories.

flowchart LR

I[RGB + instruction] --> V[Molmo2-ER VLM<br/>36 layers]

V -->|KV layer 1| A1[Action expert L1]

V -->|KV layer 18| A2[Action expert L18]

V -->|KV layer 36| A3[Action expert L36]

A1 --> A2 --> A3 --> O[Continuous action chunk]

V -.->|0.996 cosine gate| D[Selective depth tokens]

D --> A2

The Think variant adds geometric grounding without the usual latency tax: it predicts a 100-token depth grid, then uses a 0.996 cosine-similarity gate on RGB patches to refresh only the regions that actually moved. Reasoning cost scales with scene dynamics rather than scene complexity.

The cost is memory. A practitioner walkthrough notes that per-layer KV conditioning forces the projected cache for all 36 layers to stay resident through the iterative flow-matching loop, and pegs the full reasoning-plus-action stack at roughly 8B parameters — “out of reach for most hobbyist-grade edge devices” even with Ai2’s advertised support for $6,000 SO-100 arms 3. Cheap robot, expensive brain.

What’s missing

Two gaps reframe the release. First, safety: the recent CMU/King’s College study found every LLM-driven robot tested would brandish a knife, remove a wheelchair, or act on discriminatory cues 4. MolmoAct2’s 3D grounding is plausibly relevant mitigation, but neither the paper nor the blog reports any evaluation against that protocol — and the weights ship without API-level guardrails.

Second, the open-data race isn’t settled. AgiBot World claims a 30% pretraining lift over Open X-Embodiment using ~3,000 hours on a uniform humanoid 5; MolmoAct2’s 720-hour BimanualYAM is the largest open bimanual corpus, but smaller and less uniform. And Unitree’s founder dismisses current VLA designs as “relatively dumb,” arguing the field is over-indexed on data rather than architecture 6 — pointed criticism, given MolmoAct2 admits it can’t yet zero-shot the humanoids Unitree actually ships.

The honest framing: this is the strongest open VLA on the board, not a finished deployable brain.

Meta clears CWM open-weight using softer evals than gpt-oss

Source: hf-daily-papers · published 2026-04-30

TL;DR

- Meta released its 32B Code World Model as open weights after a preparedness report concluded it adds no frontier risk.

- CWM solved 25% of Cybench CTFs and 8.3% of native-code exploits — at or below Llama 4 Maverick, Qwen3-Coder, and gpt-oss-120b.

- Critics flag the elicitation regime: no MFT, vanilla Bash/Python scaffolds, and a “uniquely enable” threshold that grades on a curve.

- Follow-up work already extracted +10 points on coding benchmarks from the CWM-sft checkpoint via self-play RL.

The verdict and the numbers behind it

Meta’s preparedness team evaluated the 32B Code World Model across cyber, chem-bio, and honesty axes and concluded it sits at “moderate” risk under the Frontier AI Framework — clear for open-weight release. The headline numbers back that read on their own terms: 63.6% on WMDP-cyber (vs. 70.5% for Llama 4 Maverick), 25% pass@10 on Cybench, zero machines compromised on Hack The Box, and 8.3% on a private suite of 12 native-code exploitation tasks. On bio, CWM was the lowest scorer of the comparison set — 78.1% WMDP-Bio, and tracking human-expert baselines within a point on the private MBCT, VCT, and HPCT suites.

The structured-reasoning intervention on the MASK honesty benchmark is the most interesting positive result: forcing the model to narrate policy/knowledge conflicts in its <think> block lifted normalized honesty by more than 10 points over the no-reasoning baseline of 44.8%. Meta did not measure whether that prompt regresses coding performance.

Where the methodology gets contested

The case against the report isn’t that the scores are wrong — it’s that the test regime is structurally lenient. AI Lab Watch documents external reproductions where Meta-evaluated cyber-offense capabilities jumped from 5% to 100% once chain-of-thought and basic tools were enabled, features omitted in earlier Meta evaluations 7. CWM’s own report concedes its agentic harness is limited to vanilla Bash and Python, and explicitly excludes malicious fine-tuning from scope.

That last gap is the one peer labs have started closing. OpenAI’s gpt-oss release ran Malicious Fine-Tuning — deliberately optimizing the model to maximize dangerous capabilities with safety filters stripped — before declaring the model below the “Preparedness High” threshold 8. Meta did not. By that comparison, CWM’s clean bill of health rests on a less adversarial protocol than a peer applied to a similar artifact.

The Center for AI Policy goes further on the framework itself, arguing Meta’s “uniquely enable” threshold is a loophole: if any comparable open-weight model already exists, release is permitted regardless of marginal uplift 9. CWM’s report leans on exactly that move — benchmarking against Llama 4 Maverick, Qwen3-Coder-480B, and gpt-oss-120b and concluding it is “comparable or lower.”

What the community is already doing with it

The reception split is instructive. Practitioners call the 32B size a sweet spot for local execution, though the model is reportedly brittle outside its native agentic harness and tied to a rigid system prompt 10. The FAIR Non-Commercial Research License has drawn open-washing complaints — CWM cannot be used in production or commercial assistants 11.

More telling: the Self-play SWE-RL project has already used the CWM-sft checkpoint as a base, reporting 10+ point gains on coding benchmarks through autonomous self-improvement 12. That is a concrete instance of the post-release capability amplification Meta’s preparedness report doesn’t evaluate — and the live argument is whether the moderate-risk verdict survives contact with a community that fine-tunes and re-scaffolds aggressively.

Stanford’s counting probe caps GPT-5.4 at 400, Claude at 2,000

Source: hf-daily-papers · published 2026-05-02

TL;DR

- Stanford’s Stable Counting Capacity assay finds all 126 tested LLMs collapse far below their advertised context windows.

- Claude 4.6 Opus tops out near 2,000 items; GPT-5.4 around 400; Gemini 2.5 Pro 500–1,000; Gemma 3 27B at 27.

- Failure is sudden structural collapse, not drift — models default to attractor numbers, with

500the most common guess. - Reasoning tokens don’t help: thinking-mode variants burn 5–20× more tokens for negligible or negative gains.

What SCC actually measures

Dai and Fan strip counting down to its mechanical core: a comma-separated stream of identical "a" tokens, with the model asked to return the integer count. No semantics, no tokenization tricks (you’re counting items, not subwords), no knowledge contamination. An adaptive ladder finds the largest length where 16 sampled counts hold a normalized MAE under 0.05 — the model’s Counting Capacity (CC).

That design matters because it preempts the standard practitioner deflection. The popular “LLMs can’t count the r’s in strawberry because BPE hides the letters” explanation 13 does not apply here: there is nothing to hide. Whatever the ceiling is, it is not a tokenizer artifact.

The numbers, and why they sting

| Model | Counting Capacity | Advertised context |

|---|---|---|

| Claude 4.6 Opus | ~1,000–2,000 | 200K+ |

| Gemini 2.5 Pro | ~500–1,000 | 1M+ |

| GPT-5.4 | ~400–500 | 128K |

| Claude 3.7 Sonnet (Thinking) | ~500 | 200K |

| Gemma 3 27B-it | 27 | 128K |

Counting capacity is decoupled from context length by 2–3 orders of magnitude. This is the same pattern NVIDIA’s RULER work surfaced for retrieval — Llama 3.1 70B’s “128K” window is effectively ~64K 14 — but SCC isolates procedural state rather than retrieval, which explains why needle-in-haystack scores can stay high while agentic loops silently lose track of where they are.

Past the ceiling, models don’t drift. They jump. The post-collapse guess distribution piles up on salient round integers, with 500 dominant across architectures. PNAS work on numerical bias has independently shown RLHF amplifies attractor collapse relative to base models 15 — so the round-number guessing is best read as a learned alignment artifact, not a pretraining accident.

Why more thinking doesn’t fix it

The most uncomfortable result is the test-time compute frontier: roughly 2 generated tokens per true count for stable operation, and reasoning-mode models that consume 5–20× more often score worse. In dual-task experiments, simultaneously counting markers while solving MATH-500 problems degraded counting more than counting two different items did — implying reasoning and state-tracking compete for the same finite latent budget.

This lands on top of a known wound. Liu et al.’s flip-flop language modeling work showed transformers suffer “attention glitches” on a 1-bit memory task that LSTMs and modern SSMs solve trivially 16, and log-precision transformers are formally stuck in TC⁰ — unable to simulate arbitrary finite-state machines past O(log T) depth without scratchpad 17. SCC converts those theory and toy-task results into a per-model number you can quote in a procurement meeting.

The mechanistic story checks out

The residual-stream “progress coordinate” and the SAE “counter features” Dai and Fan extract from Gemma 3 and Qwen 3.5 line up with Anthropic’s 2025 Transformer Circuits work on linebreaks, which causally validated character-count features in Gemma-2-9B: patching a clean count feature into a corrupted prompt forced a line break at the wrong column 18. So SCC isn’t probing a statistical ghost — it’s measuring the breaking point of an interpretability circuit that has already been mapped.

Every tested model fails far below its advertised context limit.

The takeaway for anyone shipping long-horizon agents: stop reading “1M context” as “1M steps of reliable bookkeeping.” They are not the same number, and now there’s a clean assay to prove it.

Round-ups

HiL-Bench tests whether agents know when to ask for help

Source: hf-daily-papers

Frontier coding agents fail ambiguous tasks not from lack of skill but from poor judgment about when to escalate. HiL-Bench scores agents on Ask-F1, question precision, and blocker recall, and pairs the benchmark with a shaped-reward RL recipe targeting unresolvable uncertainty.

PhysicianBench scores LLM agents on real EHR workflows

Source: hf-daily-papers

Clinical agent claims meet a harder test in PhysicianBench, which runs LLMs through multi-step physician tasks inside live electronic health record environments. Scoring uses execution-grounded verification of tool calls and documentation, exposing wide capability gaps on real-world clinical scenarios.

WindowsWorld benchmarks GUI agents across pro desktop apps

Source: hf-daily-papers

Cross-application desktop workflows remain brutal for current GUI agents, and WindowsWorld quantifies how badly they break. The process-centric benchmark scores conditional judgment, reasoning, and execution efficiency on multi-step tasks that span several professional Windows applications inside a simulated environment.

T²PO stabilizes multi-turn agent RL via uncertainty-gated exploration

Source: hf-daily-papers

Multi-turn agentic RL collapses when exploration runs unchecked across long trajectories. T²PO monitors token- and turn-level uncertainty to trigger dynamic resampling and trajectory filtering, sharpening credit assignment and producing more stable training runs than standard policy optimization.

Metacognition pitched as the real fix for LLM hallucinations

Source: hf-daily-papers

Hallucinations persist because models cannot tell what they know from what they do not, the authors argue, and scaling knowledge alone will not close the gap. The paper outlines metacognitive architectures using uncertainty quantification and confidence intervals inside agent systems.

Persistent visual memory keeps LVLMs grounded over long outputs

Source: hf-daily-papers

Vision-language models lose sight of the image as generation lengthens, a failure the authors call Visual Signal Dilution. A lightweight persistent visual memory module reinjects visual embeddings through the feed-forward network, restoring attention and lifting accuracy on complex reasoning tasks.

MotionCache speeds autoregressive video by reusing low-motion tokens

Source: hf-daily-papers

Autoregressive video diffusion wastes compute redenoising static regions, and MotionCache fixes that with motion-weighted cache reuse keyed to inter-frame pixel differences. A coarse-to-fine schedule sets per-token update frequencies, delivering meaningful speedups without visible quality loss.

Footnotes

-

Pebblous AI — VLA architecture comparison — https://blog.pebblous.ai/report/vla-architecture-comparison/en/

↩MolmoAct2 outperformed competitors in a ‘many-trial’ setup, scoring 0.51 on average, compared to π0.5 at 0.32 … On the ‘MolmoBot’ benchmark, MolmoAct2 achieved a 20.6% success rate, roughly doubling the 10.3% recorded by π0.5.

-

SiliconANGLE — Ai2 releases MolmoAct 2 — https://siliconangle.com/2026/05/05/ai2-releases-molmoact-2-enhancing-robot-intelligence-real-world/

↩Piloted in high-stakes environments, such as CRISPR gene-editing labs at Stanford, where the model successfully automated sample movement and lab equipment operation.

-

Medium — Inside the architecture of MolmoAct2 (towardsdev) — https://medium.com/towardsdev/the-dawn-of-deployable-robot-brains-inside-the-architecture-of-molmoact2-8e124bf39986

↩Per-layer KV-cache conditioning … increases the memory footprint during inference, as the projected KV cache for all layers must be stored during the iterative flow matching loop … despite support for $6,000 low-cost arms, the compute required for the full 8B-parameter reasoning stack remains out of reach for most hobbyist-grade edge devices.

-

The Robot Report — CMU safety study — https://www.therobotreport.com/popular-ai-models-arent-ready-safely-run-robots-say-cmu-researchers/

↩Every model tested failed safety checks, with robots willing to execute harmful instructions such as brandishing a knife, removing mobility aids like wheelchairs, or enacting discriminatory behaviors based on a user’s identity.

-

OpenDriveLab — AgiBot World — https://opendrivelab.com/AgiBot-World/

↩Policies pre-trained on AgiBot World allegedly achieve a 30% performance improvement over those trained purely on Open X-Embodiment in both seen and out-of-distribution scenarios.

-

KrASIA — Unitree founder on VLA consensus — https://kr-asia.com/unitrees-founder-disputes-vla-consensus-backs-video-trained-models-for-robotics

↩Unitree’s founder has called current VLA architectures ‘relatively dumb,’ arguing that the field is over-focused on foundational data at the expense of developing more unified, efficient model architectures that could operate with less data.

-

AI Lab Watch — Meta scorecard — https://ailabwatch.org/companies/meta

↩External researchers found that model performance on certain cyber-offense tasks jumped from 5% to 100% simply by allowing the model to ‘think’ (chain-of-thought) or use basic tools, features Meta reportedly omitted in its initial evaluations.

-

OpenAI — Estimating Worst-Case Frontier Risks of Open-Weight LLMs (gpt-oss) — https://openai.com/index/estimating-worst-case-frontier-risks-of-open-weight-llms/

↩OpenAI researchers employed Malicious Fine-Tuning (MFT), which specifically optimizes models to maximize dangerous capabilities while intentionally stripping safety filters… gpt-oss failed to cross the ‘Preparedness High’ threshold.

-

Center for AI Policy — Meta’s Frontier AI Framework — https://www.centeraipolicy.org/work/metas-frontier-ai-framework

↩Meta defines its critical risk threshold by whether a model would ‘uniquely enable’ a threat scenario… [this] might allow Meta to dismiss a model’s risks if a competitor has already released a similarly capable model or if human experts could theoretically perform the same tasks.

-

r/machinelearningnews — CWM release thread — https://www.reddit.com/r/machinelearningnews/comments/1nq1h1m/meta_fair_released_code_world_model_cwm_a/

↩The 32B size is a ‘sweet spot’ for local execution… however, the model is ‘brittle’ when used outside of its intended agentic workflows and requires a specific, rigid system prompt to function correctly.

-

PromptLayer — first reactions to Meta’s CWM release — https://blog.promptlayer.com/code-world-model-first-reactions-to-metas-release/

↩Significant criticism has focused on the model’s FAIR Non-Commercial Research License, which prevents its use in production software or commercial AI assistants… Meta has explicitly warned that CWM is not a general-purpose chat model.

-

arXiv 2605.00932 (CWM Preparedness Report) — Self-play SWE-RL follow-up cited in independent eval roundup — https://arxiv.org/html/2605.00932v1

↩Independent projects, such as the Self-play SWE-RL (SSR) framework, have already begun using the CWM-sft checkpoint as a base for training even more advanced software agents, reporting performance gains of over 10 points on coding benchmarks through autonomous self-improvement.

-

Arbisoft — ‘Why LLMs can’t count the r’s in strawberry’ — https://arbisoft.com/blogs/why-ll-ms-can-t-count-the-r-s-in-strawberry-and-what-it-teaches-us

↩LLMs do not ‘see’ individual letters; instead, they process text as numeric vectors representing chunks of characters… ‘strawberry’ might be tokenized into ‘straw’ and ‘berry,’ obscuring the individual occurrences of the letter ‘r’.

-

Weights & Biases — RULER evaluation report — https://wandb.ai/byyoung3/ruler_eval/reports/How-to-evaluate-the-true-context-length-of-your-LLM-using-RULER---VmlldzoxNDE0OTA0OQ

↩While Llama 3.1 70B advertises a 128K token window, its effective context—defined as the range where it can reliably retrieve and reason—is measured at approximately 64K, a 50% discrepancy.

-

PNAS — numerical bias in LLMs — https://www.pnas.org/doi/10.1073/pnas.2415697122

↩Alignment (Instruction Tuning and RLHF) has been shown to amplify these biases… causing it to ‘collapse’ into these biased attractors more frequently than base (pre-trained) versions of the same model.

-

arXiv 2410.19730 — Flip-Flop Language Modeling / ‘attention glitches’ — https://arxiv.org/html/2410.19730v2

↩Transformers suffer from ‘attention glitches’—sporadic, non-extrapolating errors where the model fails to retrieve the correct bit state over long-range dependencies… recurrent architectures like LSTMs or modern SSMs can solve the flip-flop task perfectly with far fewer parameters.

-

arXiv 2601.04480 — TC^0 / log-depth limits of transformers — https://arxiv.org/html/2601.04480v1

↩Log-precision Transformers are limited to the complexity class TC^0, meaning they cannot naturally solve problems involving modular counting (like the PARITY problem) or simulate T steps of an arbitrary FSM without at least O(log T) layers.

-

Anthropic Transformer Circuits — ‘Linebreaks’ (2025) — https://transformer-circuits.pub/2025/linebreaks/index.html

↩Specific features within Gemma-2-9B’s residual stream activate according to the number of characters since a newline… patching these character-count features from a clean prompt into a corrupted one can force a line break at an incorrect position.