Agibot's LWD beats SFT, Odysseus tops GPT-5.4 5×, TreeFlow reframes boosting

Three research wins credit the training scaffolding — a flywheel, a CNN critic, and a tree-boosting reframe — over the model itself.

Agibot’s LWD beats SFT, Odysseus tops GPT-5.4 5×, TreeFlow reframes boosting

TL;DR

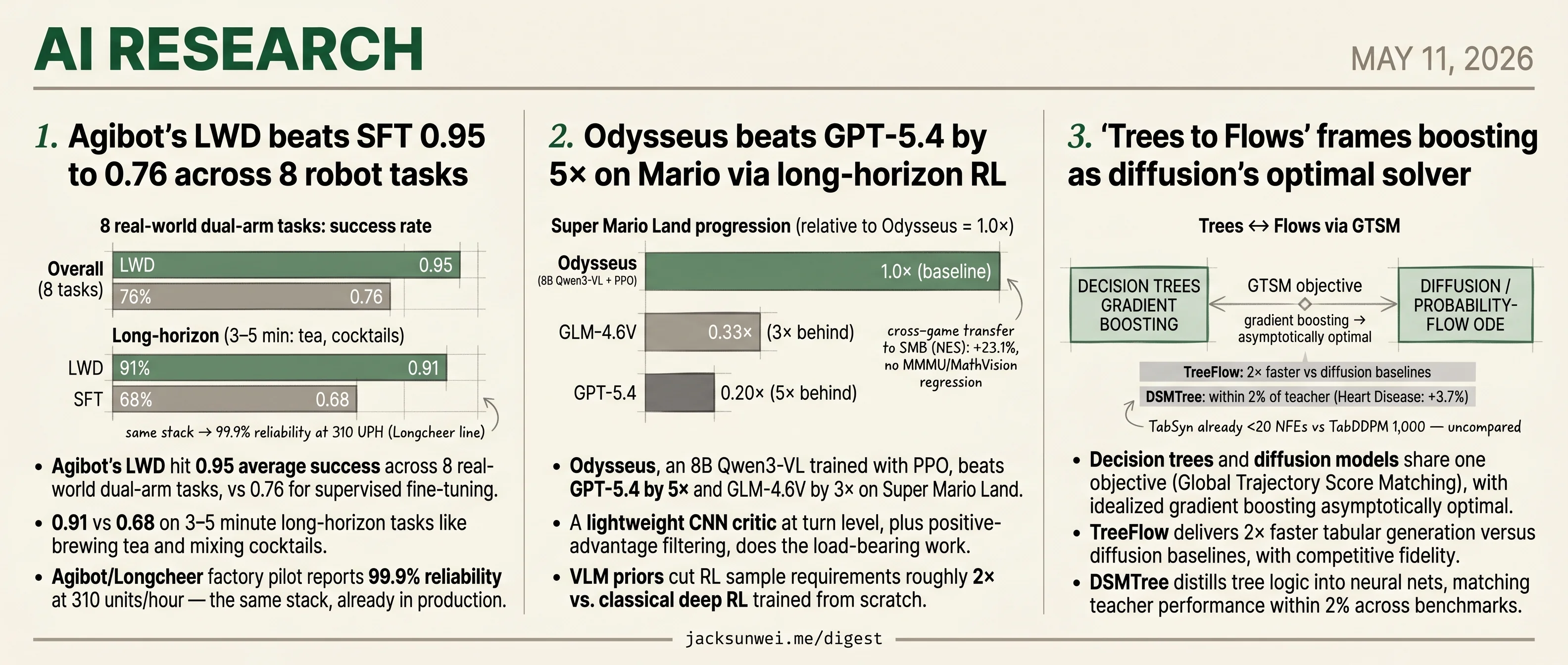

- Agibot’s LWD posts 0.95 success on 8 dual-arm tasks, beating SFT at 0.76.

- Odysseus beats GPT-5.4 by 5× on Super Mario Land using a turn-level CNN critic.

- TreeFlow ships 2× faster tabular generation by reframing boosting as diffusion’s optimal solver.

- Each headline credits the training scaffolding, not the underlying model.

Three research releases land today, and in each one the headline number traces back to something other than the model itself. Agibot’s LWD posts 0.95 success on dual-arm tasks, but the work is done by a Quality-Aware Memory flywheel that critics warn may refine known skills rather than discover new ones. Odysseus beats GPT-5.4 by 5× on Mario, but the load-bearing piece is a turn-level CNN critic plus positive-advantage filtering — the 8B Qwen3-VL is mostly along for the ride. TreeFlow ships 2× faster tabular generation, but the contribution is a theoretical reframing — gradient boosting as diffusion’s asymptotically optimal solver — not a new architecture.

It’s a useful frame for the round-ups too: sigmoid attention for single-cell transformers, online self-calibration for VLMs, MASCing’s routing-mask steering for MoE safety. The model is increasingly the substrate; what gets reported is what you wrap around it.

Agibot’s LWD beats SFT 0.95 to 0.76 across 8 robot tasks

Source: hf-daily-papers · published 2026-04-30

TL;DR

- Agibot’s LWD hit 0.95 average success across 8 real-world dual-arm tasks, vs 0.76 for supervised fine-tuning.

- 0.91 vs 0.68 on 3–5 minute long-horizon tasks like brewing tea and mixing cocktails.

- Agibot/Longcheer factory pilot reports 99.9% reliability at 310 units/hour — the same stack, already in production.

- Critics flag a Support-Binding Dilemma in QAM — the flywheel may refine known skills rather than discover new ones.

The flywheel, not the algorithm, is the news

LWD (arXiv:2605.00416) is Agibot’s pitch that generalist Vision-Language-Action policies should keep learning after shipping. Sixteen Agibot G1 dual-arm robots stream episodes — successes, failures, and human interventions — into a distributed buffer; a JAX learner trains a PaliGemma-2B actor with a 300M-parameter action expert; the new weights go back to the fleet. Offline pre-training on 652.5 hours of demonstrations, historical rollouts, and “play data” gives the policy a starting point; online RL pushes it past what any expert demonstrated.

The numbers earn the framing. Against the SFT baseline of 0.76, LWD reaches 0.95 averaged over eight tasks spanning grocery restocking and long-horizon manipulation. RECAP — Physical Intelligence’s filtered-BC approach used in π0.5/π0.6 — lands at 0.85, and HG-DAgger at the same 0.85. The gap widens on long-horizon work, where compounding errors normally crush imitation policies.

Two algorithmic bets

LWD rests on two design choices, both aimed at the messiness of fleet data.

DIVL (Distributional Implicit Value Learning) keeps a full categorical distribution over multi-step returns instead of a scalar critic, so rare successes don’t get averaged away by frequent failures of the same state-action pair. An entropy-adaptive τ schedule tunes optimism per state. The ablations defend both choices: replacing DIVL with scalar expectile regression costs ~16.7% on long-horizon online tasks, and the adaptive τ beats a constant baseline 0.88 to 0.84 1.

QAM (Q-learning via Adjoint Matching) sidesteps the usual pain of doing PPO on flow/diffusion policies — log-probs are expensive, ODE backprop is unstable — by turning the critic’s action-space gradient ∇ₐQ into a local regression target for the actor’s vector field. Stable, cheap, and it actually trains.

The dissent worth taking seriously

QAM’s stability isn’t free. A published critique argues it suffers a Support-Binding Dilemma: because it is multiplicatively regularized against the empirical behavior prior, it shows popularity bias (suppressing rare-but-optimal actions) and a “zero-support trap” where gradients vanish off-manifold 2. The practical read: improvements are bounded by what the fleet has already done.

That reframes the flywheel uncomfortably. RECAP gets characterized similarly — “filtered behavioral cloning” that converges to the best behavior in the buffer rather than a globally optimal policy 3. LWD’s distributional critic gives it more reach than RECAP, but if QAM can’t escape the support of past behavior, the system refines known skills rather than inventing new ones. RLinf’s reproductions also note HG-DAgger hits 0.94–0.98 on semantically precise tasks because human corrections beat sparse rewards 4 — so LWD’s edge is real but task-shaped, strongest on long-horizon stitching.

The industrial tell

The strongest evidence isn’t in the paper. Agibot’s joint deployment with Longcheer Technology puts G2 robots on a consumer-electronics precision manufacturing line at 310 UPH, ~19s cycle time, 140+ hours of continuous operation, integrated in 36 hours 5, and The Robot Report describes robots acquiring new skills “in tens of minutes” with 99.9% reliability on active production lines 6. That converts a 0.95 lab metric into factory-floor evidence — and reframes “16 robots” as the small end of what’s actually shipping.

The open question is whether the next order of magnitude of fleet data buys genuinely new behaviors, or just faster convergence to old ones.

Odysseus beats GPT-5.4 by 5× on Mario via long-horizon RL

Source: hf-daily-papers · published 2026-04-30

TL;DR

- Odysseus, an 8B Qwen3-VL trained with PPO, beats GPT-5.4 by 5× and GLM-4.6V by 3× on Super Mario Land.

- A lightweight CNN critic at turn level, plus positive-advantage filtering, does the load-bearing work.

- VLM priors cut RL sample requirements roughly 2× vs. classical deep RL trained from scratch.

- Cross-game transfer to Super Mario Bros. (NES) gave 23.1% average improvement with no catastrophic forgetting on MMMU or MathVision.

The recipe, not the score, is the contribution

The interesting thing about Odysseus isn’t that it crushes GPT-5.4 at Mario — frontier VLMs are uniformly weak at long-horizon games, with DeepSeek-R1’s recent BALROG SOTA topping out at 34.9% progression 7. It’s that the authors found a stack that makes RL training of an 8B vision-language model stable past 100 turns, on a single discrete action space, without burning a second VLM as a critic.

Three ingredients do the work. First, a NatureCNN value head assigns credit at turn granularity instead of per-token, decoupling RL bookkeeping from text generation and dropping the memory bill. Second, positive-advantage filtering clips negative advantages to zero, which keeps gradients from blowing up the policy — a known failure mode when PPO meets large pretrained models. Third, an inverse-trajectory-weighted curriculum oversamples harder levels (those where average rollouts die early) so the optimizer doesn’t drown in easy World 1-1 trajectories.

The interaction protocol matters too. Each turn the model emits structured <perception>, <reasoning>, and <answer> blocks; actions apply for 5–15 frames so each turn produces visible state change. A 5,000-frame SFT warmup with GPT-o3-generated chain-of-thought injects domain grounding (the base model can’t reliably identify Mario), then RL takes over.

Calibrating the headline numbers

The 5× over GPT-5.4 reads better than it should in isolation. PokeGym work from the same author group documents that frontier models like GPT-5.4 hit “aware deadlocks” — they recognize they’re stuck but can’t recover 8. That’s exactly the failure mode a turn-level critic plus positive-advantage filter is designed to suppress, so Odysseus is winning on the axis it was engineered for, against baselines never trained for it.

Sample efficiency deserves the same scrutiny. The “2× more efficient than classical deep RL” claim is real, but baseline PPO on Mario routinely needs 1–10M steps just to clear the first world 9. Odysseus’s tens of millions of samples is a meaningful win on top of an already expensive baseline, not a fundamentally cheaper recipe — the VLM prior buys you a head start, not a free lunch.

Positive-advantage filtering improves stability, but it can reduce output diversity, as the model is never explicitly penalized for repeating specific sub-optimal but ‘safe’ patterns. 10

That tradeoff matches the paper’s own observation that the learned policy trends toward conservative exploration. For Mario it’s fine; for genres that reward creative play, less so.

What’s actually generalizable

Lead author Seth Karten previously built PokéChamp, a minimax LLM agent for Pokémon battles that hit human-expert play without RL 11 — Odysseus is the visual long-horizon installment of a longer “games as VLM benchmarks” thesis. The reusable artifact here is the VeRL/HybridFlow + lightweight CNN critic + positive-advantage filter stack. VeRL already shows 1.5–20× throughput over DeepSpeed-Chat, though community chatter suggests newer frameworks like Verlog may pull ahead past ~400-turn horizons 12, a regime Odysseus hasn’t been tested in. That’s the next ceiling worth watching, not the next Mario score.

’Trees to Flows’ frames boosting as diffusion’s optimal solver

Source: hf-daily-papers · published 2026-04-30

TL;DR

- Decision trees and diffusion models share one objective (Global Trajectory Score Matching), with idealized gradient boosting asymptotically optimal.

- TreeFlow delivers 2× faster tabular generation versus diffusion baselines, with competitive fidelity.

- DSMTree distills tree logic into neural nets, matching teacher performance within 2% across benchmarks.

- TabSyn already cuts TabDDPM’s 1,000 NFEs to <20, a stronger baseline TreeFlow doesn’t centrally compare against.

The correspondence

Ramachandran and Sra’s claim is that hierarchical decision trees and continuous diffusion processes are two views of the same object. In an appropriate limit, trees define probability-flow ODEs; conversely, diffusions induce tree-like hierarchical clusterings of the data manifold 13. The glue is Global Trajectory Score Matching (GTSM), an objective both model classes minimize — and for which gradient boosting, in an idealized form, is asymptotically optimal.

That framing matters because score-based diffusion and gradient boosting have lived in entirely separate corners of ML literature. If the equivalence holds, decades of boosting theory (convergence rates, regularization, feature importance) become available to reason about diffusion training, and vice versa.

The hybrid is not new; the bridge is

The paper isn’t the first to splice trees into diffusion. Han & Zhou’s Diffusion Boosted Trees already parameterized each denoising timestep with a single decision tree, framing the entire diffusion process as boosting 14. Jolicoeur-Martineau’s Forest-Diffusion used XGBoost to estimate scores and beat GPU-trained TabDDPM at AISTATS 2024. What’s new here is the theoretical correspondence — claiming the hybrid was always implicit in the math — rather than another engineering recipe.

That’s also why the headline benchmark numbers deserve scrutiny.

The benchmarks have moved

TreeFlow’s “2× speedup on tabular data” is anchored against diffusion baselines, but the tabular-diffusion frontier has accelerated. TabSyn (ICLR 2024) reduces TabDDPM’s column-wise distribution error by up to 86% and pairwise-correlation error substantially, while needing fewer than 20 function evaluations versus TabDDPM’s 1,000 15. Worse for any tabular generation paper: plain SMOTE interpolation still matches or beats TabDDPM on machine-learning utility for simpler datasets 16. A 2× speedup over the wrong baseline isn’t a 2× speedup over the field.

The theoretical claim has live dissent

“Gradient boosting is asymptotically optimal for GTSM” is a strong statement, and it lands in an unsettled debate about why score-based models work at all. A competing 2024 view recasts diffusion as flow-matching to a Wasserstein Gradient Flow velocity field, noting that trained networks routinely fail to learn truly conservative score fields yet still generate well because they minimize transport cost 17.

neural networks often fail to learn ‘conservative’ vector fields (true scores), yet still succeed because they effectively minimize transport costs between distributions

Under that lens, the boosting analogy captures part of the optimization geometry but not all of it, and the “idealized” qualifiers in the optimality proof become load-bearing.

The distillation result is the durable one

The cleanest practical contribution is DSMTree, which transfers a trained tree’s hierarchical decision logic into a neural network. It matches teacher performance within 2% across benchmarks, and on the Heart Disease dataset the distilled network actually exceeded the original tree by 3.7% 18. That solves a real problem — getting tree-style inductive bias into differentiable, end-to-end pipelines — where Frosst & Hinton’s soft decision trees plateaued years ago.

Treat the paper as an elegant unification with one solid empirical hook, not a tabular-generation SOTA. The bridge is what’s worth reading.

Round-ups

Web2BigTable runs bi-level multi-agent search across the open web

Source: hf-daily-papers

Web2BigTable splits internet-scale extraction between a coordinator and worker agents, decomposing tasks for parallel execution and looping through a run-verify-reflect cycle. Shared external memory lets the agents tackle both broad sweeps and deep lookups without losing context across iterations.

Sigmoid attention speeds single-cell foundation model training

Source: hf-daily-papers

Swapping softmax for sigmoid attention in single-cell transformers yields better cell-type separation, faster convergence, and steadier gradients thanks to bounded derivatives and a diagonal Jacobian. FlashSigmoid and TritonSigmoid kernels keep throughput on par with FlashAttention-2.

Online self-calibration cuts hallucinations in vision-language models

Source: hf-daily-papers

The framework closes the generative-discriminative gap in VLMs by running Monte Carlo tree search to mine preference pairs, then applying direct preference optimization with a dual-granularity reward. Self-supervision happens online, so models keep tightening calibration without fresh human labels.

1D semantic tokenizer enables end-to-end autoregressive image generation

Source: hf-daily-papers

Jointly optimizing the tokenizer and autoregressive generator with a 1D semantic code, rather than freezing a separate VQ stage, posts state-of-the-art FID on ImageNet 256x256. Shared training aligns reconstruction and generation objectives that previously fought each other.

Themis-RM scores code generations across languages and criteria

Source: hf-daily-papers

Themis-RM is a multilingual code reward model trained on a large preference dataset spanning functional correctness, style, and other dimensions. Parameter-efficient fine-tuning enables cross-lingual transfer, letting one reward stack score generations flexibly along chosen criteria.

MASCing reconfigures MoE safety behavior without retraining

Source: hf-daily-papers

MASCing learns steering masks over routing logits using an LSTM surrogate, letting operators toggle expert circuits tied to behaviors like jailbreak defense or adult-content blocking. Switching safety profiles needs no weight updates, only a swap of the steering matrix.

Stable-GFlowNet stabilizes diverse red-teaming attacks on LLMs

Source: hf-daily-papers

Stable-GFN drops the brittle partition-function estimate in GFlowNet training for pairwise contrastive trajectory balance, then adds robust masking and a fluency stabilizer. The result is red-teaming prompts that stay coherent and varied instead of collapsing into gibberish or single modes.

Footnotes

-

Moonlight review of LWD — https://www.themoonlight.io/de/review/learning-while-deploying-fleet-scale-reinforcement-learning-for-generalist-robot-policies

↩Replacing DIVL with standard scalar expectile regression dropped long-horizon online performance by ~16.7%; the entropy-adaptive τ schedule outperformed a constant τ baseline (0.88 vs 0.84).

-

Moonlight review of Entropy-Regularized Adjoint Matching — https://www.themoonlight.io/en/review/entropy-regularized-adjoint-matching-for-offline-rl

↩QAM is strictly regularized against the empirical behavior distribution… suffers from a ‘Support-Binding Dilemma’ — popularity bias suppresses rare optimal actions and a zero-support trap prevents exploration of novel high-reward actions.

-

Federico Sarrocco blog on π*0.6 / RECAP — https://federicosarrocco.com/blog/pi-star-06-recap

↩RECAP is essentially filtered behavioral cloning that sidesteps policy gradient complexities, but it is limited by its training data; it converges to the best behavior present in the experience buffer rather than discovering a globally optimal policy.

-

RLinf docs (HG-DAgger baseline) — https://rlinf.readthedocs.io/en/latest/rst_source/examples/embodied/hg-dagger.html

↩SOP+HG-DAgger often achieves the highest peak performance (0.94–0.98) on tasks requiring high semantic precision because human corrections provide a cleaner signal than sparse environment rewards.

-

PR Newswire (Agibot/Longcheer joint release) — https://www.prnewswire.com/apac/news-releases/agibot-and-longcheer-technology-achieve-worlds-first-embodied-ai-deployment-in-consumer-electronics-precision-manufacturing-mass-production-line-302742873.html

↩World’s first embodied AI deployment in consumer electronics precision manufacturing mass production line… 310 UPH, ~19s cycle time, 140+ hours continuous operation, integrated within 36 hours.

-

The Robot Report — https://www.therobotreport.com/agibot-deploys-real-world-reinforcement-learning-system/

↩Agibot deploys real-world reinforcement learning system… robots acquired new skills in tens of minutes by learning directly from environmental feedback, achieving 99.9% reliability on active production lines.

-

NVIDIA developer blog on BALROG — https://developer.nvidia.com/blog/benchmarking-agentic-llm-and-vlm-reasoning-for-gaming-with-nvidia-nim/

↩DeepSeek-R1 recently set a new state-of-the-art on the BALROG leaderboard with a 34.9% progression rate… even the most advanced models typically achieve less than 50% accuracy in strategic multi-agent environments

-

PokeGym benchmark (ResearchGate) — https://www.researchgate.net/publication/403683401_PokeGym_A_Visually-Driven_Long-Horizon_Benchmark_for_Vision-Language_Models

↩GPT-5.4 exhibits higher ‘Aware Deadlocks’—recognizing it is stuck but failing to recover

-

ResearchGate: PPO vs DQN comparative study — https://www.researchgate.net/publication/393613412_Comparative_Study_of_Reinforcement_Learning_Performance_Based_on_PPO_and_DQN_Algorithms

↩Baseline PPO models often show only marginal progress at 300,000 timesteps, with meaningful level completion rates appearing only after 1–10 million steps

-

Medium: PPO for LLM alignment — https://medium.com/data-science/proximal-policy-optimization-ppo-the-key-to-llm-alignment-923aa13143d4

↩filtering improves stability, it can reduce output diversity (entropy), as the model is never explicitly penalized for repeating specific sub-optimal but ‘safe’ patterns

-

Seth Karten personal site (lead author) — https://sethkarten.ai/

↩co-founded the PokéAgent Challenge and authored PokéChamp, a minimax language agent for Pokémon battles that achieved human-expert performance without traditional RL training

-

SkyPilot blog on VeRL/HybridFlow — https://blog.skypilot.co/verl-rl-training/

↩HybridFlow has demonstrated throughput improvements between 1.5x and 20x compared to earlier frameworks like DeepSpeed-Chat… some newer frameworks like Verlog may outperform it in ‘extreme’ long-horizon tasks exceeding 400 turns

-

alphaXiv overview — https://www.alphaxiv.org/overview/2605.00414

↩decision trees define continuous probability flow ODEs, while diffusion processes naturally induce tree-like hierarchical clusterings

-

Han & Zhou, ‘Diffusion Boosted Trees’ (arXiv 2311.14922) — https://arxiv.org/abs/2311.14922

↩parameterize each diffusion timestep with a single decision tree, effectively treating the entire diffusion process as a boosting algorithm

-

TabSyn / latent-diffusion tabular survey (arXiv 2502.17119) — https://arxiv.org/html/2502.17119v1

↩TabSyn reduces error rates in column-wise distribution and pair-wise correlation estimation by up to 86% compared to TabDDPM … requiring fewer than 20 NFEs vs 1,000 for TabDDPM

-

ResearchGate: ‘Diffusion Models for Tabular Data: Challenges, Current Progress and Future Directions’ — https://www.researchgate.net/publication/389316416_Diffusion_Models_for_Tabular_Data_Challenges_Current_Progress_and_Future_Directions

↩the interpolation-based method SMOTE remains an unexpectedly strong competitor in terms of machine learning utility, sometimes matching or exceeding TabDDPM on simpler datasets

-

Wasserstein-Gradient-Flow perspective on diffusion (arXiv 2406.01813) — https://arxiv.org/html/2406.01813v1

↩neural networks often fail to learn ‘conservative’ vector fields (true scores), yet still succeed because they effectively minimize transport costs between distributions

-

lonepatient arxiv digest (2026-05-04) — http://lonepatient.top/2026/05/04/arxiv_papers_2026-05-04.html

↩DSMTree successfully bridges the performance gap … matching teacher performance within a 2% margin … On the Heart Disease dataset the distilled neural network actually exceeded the original decision tree by 3.7%