OncoAgent posts 100% on a retrieval proxy, skips the NCCN check

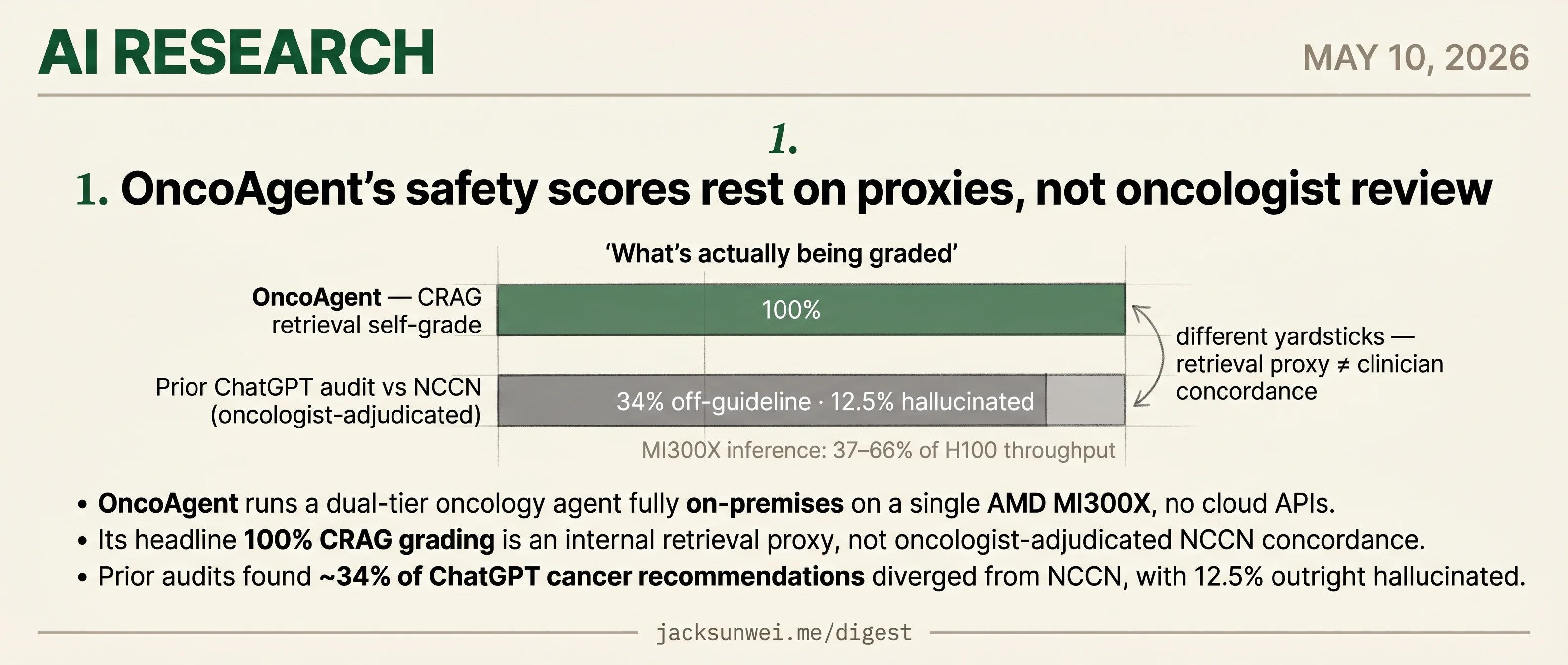

OncoAgent reports 100% on a self-run retrieval proxy while the oncologist-facing concordance check that would matter clinically goes unmeasured.

OncoAgent posts 100% on a retrieval proxy, skips the NCCN check

TL;DR

- OncoAgent runs a dual-tier oncology agent fully on-premises on one AMD MI300X.

- Its 100% CRAG score measures retrieval grading, not oncologist-adjudicated NCCN concordance.

- Prior ChatGPT audits found 34% of cancer recommendations diverged from NCCN, 12.5% hallucinated.

- MI300X throughput lands at 37–66% of H100 in outside benchmarks, leaving bedside latency unvalidated.

Today’s research slate is a single paper, and it’s a worked example of a familiar gap: the metric a clinical-AI team picks to grade itself is rarely the metric a clinician would pick to grade it. OncoAgent ships a dual-tier oncology agent that runs entirely on-premises on one AMD MI300X, which is a real deployment story — no cloud round-trips, no patient data leaving the hospital. The headline number is 100% on CRAG, a retrieval-grading proxy the authors run themselves.

What CRAG doesn’t measure is NCCN concordance — whether the recommendation an oncologist would actually act on matches guideline care. Prior audits of ChatGPT against the same standard found ~34% of cancer recommendations diverged, 12.5% outright hallucinated. And the MI300X inference numbers — 37–66% of H100 throughput in outside benchmarks — mean bedside latency is its own separate validation question the paper doesn’t answer.

OncoAgent’s safety scores rest on proxies, not oncologist review

Source: huggingface-blog · published 2026-05-09

TL;DR

- OncoAgent runs a dual-tier oncology agent fully on-premises on a single AMD MI300X, no cloud APIs.

- Its headline 100% CRAG grading is an internal retrieval proxy, not oncologist-adjudicated NCCN concordance.

- Prior audits found ~34% of ChatGPT cancer recommendations diverged from NCCN, with 12.5% outright hallucinated.

- MI300X inference lands at 37–66% of H100 throughput in practitioner benchmarks — bedside latency needs separate validation.

What the hackathon actually shipped

OncoAgent is a LangGraph-orchestrated, 8-node clinical decision support stack built during the Lablab.ai AMD Developer Hackathon. A complexity router sends simple triage to a 9B Qwen model and Stage IV / multi-mutation cases to a 27B model, with mandatory clinician review on any Tier 2 output or confidence below 0.3. The retrieval side ingests 70+ NCCN and ESMO guidelines, and a Reflexion cascade (formatting, safety, LLM entailment) runs as deterministic code so a prompt can’t talk its way past it. PHI is stripped before any token reaches an LLM, and per-patient thread IDs enforce memory isolation.

flowchart LR

Q[Clinical query] --> R[PHI redaction]

R --> C{Complexity router}

C -->|simple| T1[Qwen 9B - Tier 1]

C -->|complex| T2[Qwen 27B - Tier 2]

T1 --> RAG[CRAG over NCCN/ESMO]

T2 --> RAG

RAG --> S[Reflexion safety cascade]

S --> H{Confidence < 0.3 or Tier 2?}

H -->|yes| HITL[Clinician review]

H -->|no| OUT[Streamed response]

The engineering is genuinely tight. Unsloth + ROCm 6.2 cut VRAM ~60% and doubled training speed; sequence packing got a 266k-sample fine-tune down from a 5-hour estimate to roughly 50 minutes; synthetic data generation hit 6,800 cases/hour, a claimed 56× over API generation.

The “100%” number is doing a lot of work

The problem isn’t the architecture — it’s what’s being measured. “100% document-grading success” is the CRAG retrieval grader saying its own retrievals are relevant. That is not the same as an oncologist saying the resulting treatment plan matches NCCN. The Brigham/JAMA Oncology audit of ChatGPT found about a third of cancer-treatment recommendations diverged from NCCN and 12.5% were hallucinated, including curative regimens for non-curative disease 1. A PMC review found 81.8% of LLM errors in medical oncology had medium-to-high potential for moderate-to-severe harm if a clinician acted on them 2. Grounding in guideline PDFs plus a 0.10 cosine-distance refusal threshold should help, but no clinician concordance benchmark is reported. Stanford’s “centaur evaluation” group has been blunt about this pattern: models scored in isolation systematically over-promise, and trustworthy clinical AI needs joint human-AI task evaluation, not higher headline accuracy 3.

CRAG itself carries clinical baggage. Surveys warn that its decompose-then-recompose “knowledge strip” can drop contraindications and patient-specific nuance, and that CRAG’s canonical web-search fallback is an unverified hallucination vector for hospital use 4. OncoAgent appears to dodge the second risk by hard-refusing (“Information non-conclusive”) below the similarity threshold — a defensible departure from canonical CRAG, but one that trades recall for safety in ways the paper doesn’t quantify.

Hardware wins ≠ bedside wins

The MI300X numbers are plausible for QLoRA, where 192GB HBM3 genuinely changes what fits. But r/LocalLLaMA practitioners benchmarking the same silicon for inference report 37–66% of H100 throughput and silent 4-bit decode NaN bugs requiring pre-release dependency pins 5. Training-time speedups don’t translate cleanly to streaming Gradio latency at the bedside.

What would make this credible

LLMs are prone to providing ‘device-like’ unauthorized advice even when prompted to follow FDA CDS constraints. 6

OncoAgent’s HITL gate and Zero-PHI redaction are aimed squarely at FDA Non-Device CDS criteria, but Kaggle’s OncoMix-AI writeup notes 17% of oncology LLM studies use non-standardized metrics, making cross-comparison nearly impossible 6. The fix is unglamorous: a held-out, oncologist-graded case set with concordance scored against NCCN, published alongside the retrieval metrics. Until that exists, OncoAgent is a well-engineered prototype whose safety story is told entirely by components grading themselves.

Footnotes

-

ScienceDaily on JAMA Oncology study (Brigham & Women’s) — https://www.sciencedaily.com/releases/2023/08/230824111917.htm

↩About one-third of [ChatGPT’s] cancer treatment recommendations included at least one that did not align with NCCN guidelines… 12.5 percent of responses were ‘hallucinated,’ or were not part of any recommended treatment.

-

PMC review of LLMs in oncology decision-making — https://pmc.ncbi.nlm.nih.gov/articles/PMC11499929/

↩81.8% of incorrect answers from LLMs in medical oncology were rated as having a medium to high likelihood of causing moderate to severe harm if acted upon by a clinician or patient.

-

Stanford Digital Economy Lab — ‘Centaur Evaluations’ — https://digitaleconomy.stanford.edu/publication/ai-should-not-be-an-imitation-game-centaur-evaluations/

↩Models evaluated in isolation systematically over-promise: better evaluation, including how humans and AI perform tasks jointly, is the prerequisite for trustworthy clinical AI — not higher headline accuracy.

-

arXiv survey of CRAG for clinical RAG — https://arxiv.org/html/2601.03266v2

↩The decompose-then-recompose ‘knowledge strip’ filter can decontextualize medical facts, omitting contraindications or patient-specific nuance; web-search fallback when local retrieval is graded ‘Incorrect’ introduces an unverified hallucination vector into clinical workflows.

-

r/LocalLLaMA MI300X benchmark thread — https://www.reddit.com/r/LocalLLaMA/comments/1rk5ftz/benchmarked_the_main_gpu_options_for_local_llm/

↩Real-world LLM inference on MI300X often lands at 37–66% of H100 throughput despite the 192GB VRAM advantage, due to ROCm kernel maturity and silent 4-bit decode NaN bugs requiring pre-release dependency builds.

-

Kaggle BigQuery AI Hackathon writeup (OncoMix-AI) — https://www.kaggle.com/competitions/bigquery-ai-hackathon/writeups/oncomix-ai

↩ ↩217% of oncology-specific LLM studies use non-standardized or customized performance measures… LLMs are prone to providing ‘device-like’ unauthorized advice even when prompted to follow FDA CDS constraints.