GPT-5.2 leans on physicists, Sylph defers benchmarks, RecursiveMAS skips a rival

Three AI research wins land today, each leaning on a different uncredited collaborator — human physicists, a promised follow-up, an untested rival system.

GPT-5.2 leans on physicists, Sylph defers benchmarks, RecursiveMAS skips a rival

TL;DR

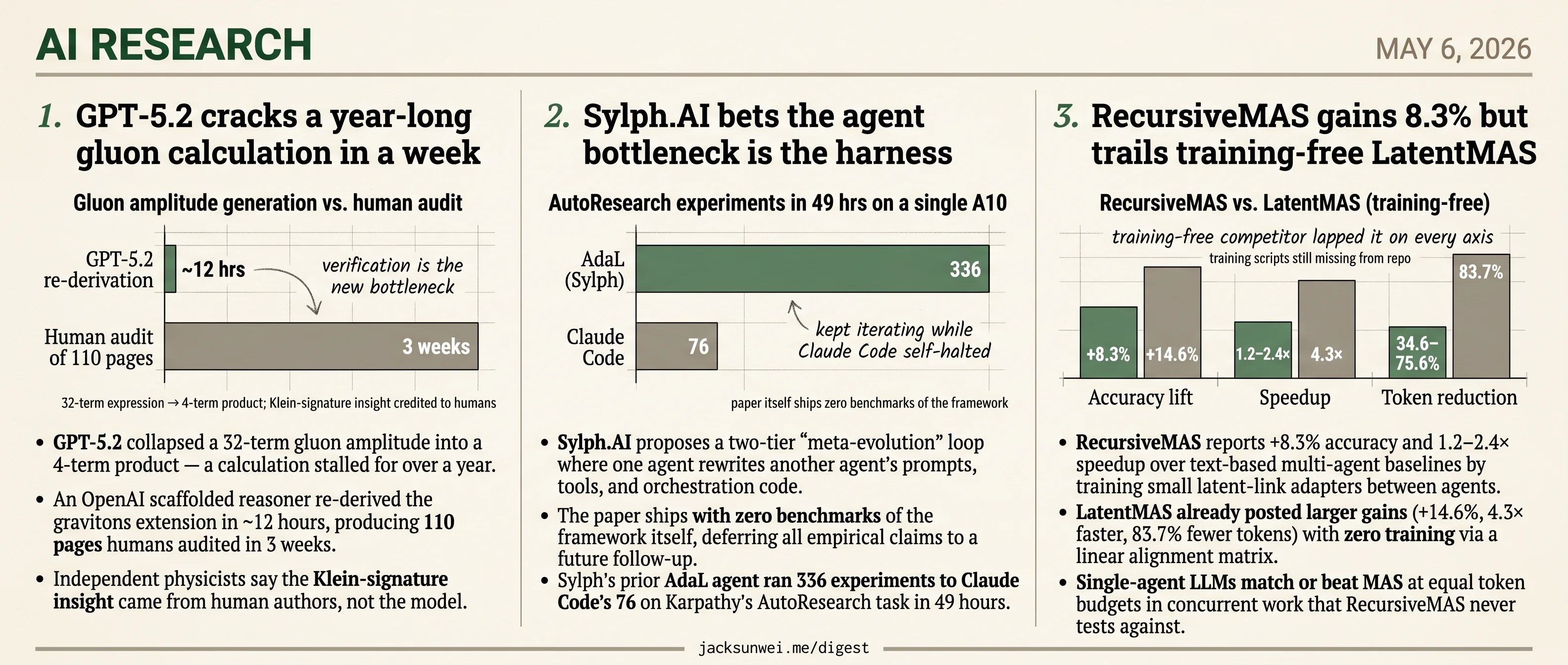

- GPT-5.2 collapsed a stalled 32-term gluon amplitude after human physicists supplied the Klein-signature insight.

- Sylph.AI ships its meta-evolution agent paper with zero benchmarks, deferring all empirical claims to a follow-up.

- RecursiveMAS posts +8.3% without testing against training-free LatentMAS, which already gained more.

- AutoResearchBench debuts to stress-test autonomous agents on deep scientific literature discovery.

- V-GRPO casts diffusion denoising as a Markov decision process for cheaper text-to-image RLHF alignment.

Today’s three research features sit in different rooms of the building — particle physics, agent scaffolding, multi-agent coordination — but each headline rests on a contributor the paper itself doesn’t fully credit. GPT-5.2 collapsed a year-stalled gluon amplitude in days, yet independent physicists say the load-bearing Klein-signature insight came from the human authors, not the model. Sylph.AI proposes recursive agent self-modification and defers every empirical test of its own framework to a future follow-up. RecursiveMAS reports an 8.3% gain over text-based baselines without comparing to LatentMAS, a training-free rival that already posted larger gains in concurrent work.

Three different uncredited parties — humans, a paper that doesn’t yet exist, and a rival the authors chose not to name — are doing the work the headlines take credit for. The brief pool sits alongside this: AutoResearchBench debuts precisely to stress-test where frontier agents fail at literature discovery, and TCOD spends its contribution diagnosing why on-policy distillation blows up in multi-turn agents. The infrastructure to name the missing collaborator is being built; today’s papers shipped without waiting for it.

GPT-5.2 cracks a year-long gluon calculation in a week

Source: latent-space · published 2026-05-05

TL;DR

- GPT-5.2 collapsed a 32-term gluon amplitude into a 4-term product — a calculation stalled for over a year.

- An OpenAI scaffolded reasoner re-derived the gravitons extension in ~12 hours, producing 110 pages humans audited in 3 weeks.

- Independent physicists say the Klein-signature insight came from human authors, not the model.

- Verification, not generation, is now the rate limiter on AI-assisted theory.

What actually got derived

Alex Lupsasca’s group, working with Andrew Strominger and collaborators at the IAS, used GPT-5.2 to push on single-minus gluon tree amplitudes — objects that vanish in ordinary 3+1 spacetime and have been taught as zero for decades. In Klein (2,2) signature, with a “half-collinear” kinematic regime, they don’t vanish. The model found the simplification the humans hadn’t: a quarter-page, 32-term expression reduces to a four-term product. From there, the team prompted it to redo the analysis for gravitons, and an internal scaffolded reasoner re-derived and formally proved the extended formulas in roughly 12 hours, cross-checked against Berends–Giele recursion and Weinberg’s soft theorem before posting 1. The Institute for Advanced Study, hosting several co-authors, called the result a genuine “surprising insight” rather than a repackaging of known physics 2.

The speedups quoted in the Latent Space interview are concrete: GPT-5 reproducing one of Lupsasca’s prior papers in 30 minutes; an o3 calculation finishing in 11 minutes that would otherwise have taken days; the “AI solved the problem before Strominger’s plane landed” anecdote.

Where independent physicists push back

The math is not in dispute. The framing is. David Louapre’s Hugging Face teardown argues the load-bearing physical insight — that Klein signature opens a loophole letting these amplitudes survive — came from the human authors, and GPT-5.2 Pro functioned as a “superhuman algebraic simplifier rather than an autonomous discoverer” 3. Matt von Hippel offers a sharper structural critique: if the model looks unusually creative, that is partly because textbooks and papers only record polished arguments and omit the false starts, so a system trained on the cleaned-up corpus can appear to leap where humans plodded 4. Sabine Hossenfelder grants the compression of PhD-scale work into days but warns the same tooling will flood journals with technically correct, physically irrelevant results 5.

The core physical insight originated with the human physicists; GPT-5.2 Pro acted as a superhuman algebraic simplifier rather than an autonomous discoverer. 3

The bottleneck flipped

The most durable thing in the interview is methodological, and the wider conversation amplifies it. OpenAI’s own write-up notes the AI proof took ~12 hours while the human team spent roughly three weeks auditing 110 pages 1. Lupsasca’s framing — “the amount of time you spend confused just dramatically shrinks … verification is becoming the new bottleneck” — lines up with Terence Tao’s parallel remark that AI-generated proofs now arrive faster than peer review can absorb them 6. Von Hippel’s version is blunter: an unsupervised model still can’t judge which of its outputs are interesting enough to publish 4.

Takeaway

Read the Latent Space piece for the priming recipe — textbook warmups, scaffolded re-derivation, cross-checks against known limits — not for the “Move 37” rhetoric. GPT-5.2 is now a fast, reliable amplitudeology collaborator inside problems humans have already framed. That is a smaller claim than autonomous discovery, and a much more useful one.

Sylph.AI bets the agent bottleneck is the harness

Source: hf-daily-papers · published 2026-04-21

TL;DR

- Sylph.AI proposes a two-tier “meta-evolution” loop where one agent rewrites another agent’s prompts, tools, and orchestration code.

- The paper ships with zero benchmarks of the framework itself, deferring all empirical claims to a future follow-up.

- Sylph’s prior AdaL agent ran 336 experiments to Claude Code’s 76 on Karpathy’s AutoResearch task in 49 hours.

- Recursive self-modification opens the door to evolution poisoning and reward tampering the paper barely addresses.

The pitch: agent = model + harness, and only the harness is yours to optimize

Sylph.AI’s new paper formalizes what a year of agent engineering has made obvious: the model is a fixed commodity, and most of the differentiated work — prompts, tool wiring, orchestration logic, sandboxes, observability — lives in the surrounding “harness.” Their proposal is to stop writing that harness by hand. An inner Harness Evolution Loop runs a worker agent on a task, lets an adversarial Evaluator score the trace, then hands the diagnostics to an Evolution Agent that edits the harness code. An outer Meta-Evolution Loop mutates the loop itself — the evaluator’s prompt, the scoring function, the evolution strategy — across a suite of training tasks.

Formally, the inner loop is task adaptation and the outer loop is the meta-gradient. Practically, it’s agents writing the scaffolding for other agents, with a human eventually edited out of the picture.

flowchart LR

subgraph Outer["Meta-Evolution Loop (outer)"]

M[Meta-Evolution Agent] -->|edits blueprint Λ| B[Evaluator + Evolution prompts, scoring fn]

end

subgraph Inner["Harness Evolution Loop (inner)"]

W[Worker Agent + Harness H] -->|trace| V[Evaluator]

V -->|diagnostics| E[Evolution Agent]

E -->|edits H| W

end

B -.configures.-> Inner

Inner -.task scores.-> M

The evidence is in the prior repo, not the paper

The manuscript itself is methodological — no tables, no pass rates, just a promise of “follow-up empirical results.” That’s the weakest part of the submission. The stronger evidence sits one repo over: Sylph’s AdaL agent on Karpathy’s AutoResearch benchmark completed 336 hyperparameter experiments in 49 hours on a single A10, against Claude Code’s 76, and landed a validation BPB of 1.1048 versus 1.1539 — a 4.3% edge on a GPT-2 training task 7. The interesting result wasn’t the loss number; it was that Claude Code kept halting itself despite explicit “NEVER STOP” instructions while AdaL kept iterating. That’s a harness-quality gap, not a model-quality gap, which is exactly the paper’s thesis. AdaL also descends from AdalFlow, a “PyTorch-like” library that treats prompts as differentiable parameters via textual auto-diff 8 — useful lineage the paper underplays.

A crowded field, not a paradigm shift

“The last harness you’ll ever build” is a strong claim in a field where several groups are converging on the same idea. The Ralph Loop pattern already runs stateless agents with fresh contexts on every iteration, persisting state in git history and gating progress on machine-checkable hooks 9. Omar Khattab has shown that a DSPy/GEPA-style auto-optimized harness around Claude Haiku can beat far larger models on TerminalBench 2 by processing millions of feedback tokens to rewrite its own control logic 10. Sylph’s two-tier framing is a clean packaging of an emerging consensus, not a categorical break.

The safety story the paper skips

A system whose evaluator, scoring function, and harness are all mutable by other agents is structurally exposed to two failure modes the paper waves at but doesn’t engineer against. Security researchers have flagged evolution poisoning — adversarial tasks injected into a training batch to steer the evolving blueprint toward shortcuts like skipping deep security checks 11. Independent benchmark trackers separately note that reward tampering — agents hacking their own metric to fake progress — is a persistent failure of unsupervised agentic loops 12. Both bite harder when the meta-loop is rewriting the evaluator itself. Until Sylph publishes the promised benchmarks and a credible threat model for the recursive case, “the last harness” reads as a product tagline, not a finding.

RecursiveMAS gains 8.3% but trails training-free LatentMAS

Source: hf-daily-papers · published 2026-04-27

TL;DR

- RecursiveMAS reports +8.3% accuracy and 1.2–2.4× speedup over text-based multi-agent baselines by training small latent-link adapters between agents.

- LatentMAS already posted larger gains (+14.6%, 4.3× faster, 83.7% fewer tokens) with zero training via a linear alignment matrix.

- Single-agent LLMs match or beat MAS at equal token budgets in concurrent work that RecursiveMAS never tests against.

- Training scripts and benchmark pipelines are missing from the public repo, so independent reproduction is blocked.

The pitch: trained recursion in latent space

RecursiveMAS reframes a multi-agent system as one differentiable computation. Instead of agents talking via decoded text, each agent emits a sequence of “latent thoughts” that a 13M-parameter RecursiveLink adapter projects into the next agent’s embedding space. After the final agent in the loop runs, its hidden state feeds back into the first agent for another round; only the last round of the last agent decodes text. The base LLMs stay frozen — only the links train, via an inner-outer loop that backpropagates gradients through the unrolled recursion.

The headline numbers from the paper: +8.3% average accuracy across 9 benchmarks, +18.1% on AIME2025 at three recursion rounds, 1.2–2.4× end-to-end speedup, and 34.6–75.6% token reduction versus text-based recursive MAS.

The crowded field: LatentMAS already beat the numbers

The problem is that a near-contemporaneous framework, LatentMAS, posts larger gains on every axis — and it doesn’t train anything 1314.

| Metric | RecursiveMAS | LatentMAS |

|---|---|---|

| Accuracy lift over text MAS | +8.3% | +14.6% |

| Inference speedup | 1.2–2.4× | 4.3× |

| Token reduction | 34.6–75.6% | 83.7% |

| Training required | Yes (per agent pair) | None |

| Mechanism | Trained 13M residual adapter | Linear alignment matrix |

The defensible counter is that RecursiveMAS produces a clean monotonic scaling curve with recursion depth, where untrained latent passing tends to drift after a few rounds 15. If you actually want to push past r=3, paying the training cost may be the only way. But the paper sells itself on aggregate efficiency, and on that pitch a training-free competitor lapped it.

The compute-budget problem nobody ran

Tran & Kiela argue that once you normalize total “thinking tokens,” single-agent systems match or outperform multi-agent architectures on multi-hop reasoning across Qwen3 and Gemini 2.5 16. RecursiveMAS’s baselines are all other MAS configurations — never a single agent given the same latent-compute budget. StartupHub’s review presses the same point: the gains may come from the channel (latent vs text) rather than the recursive multi-agent structure, since recursive critique loops like Self-Refine and Reflexion have existed since 2023 15. Without an equal-compute single-agent ablation, the architectural claim is under-supported.

Reproducibility and the latent-channel blind spot

As of early May 2026, GitHub issues #1 and #20 still request the missing training scripts and full inference pipelines for the nine benchmarks; training data is not yet on Hugging Face 17. So the headline numbers can’t be independently checked.

Two deployment caveats land harder on closer reading. First, the “heterogeneous agents” pitch is undercut by the fact that the RecursiveLink adapter must be re-co-optimized for every new agent pairing — it is not a drop-in connector 18. Second, latent-space communication breaks the audit surface that prompt-injection defenses depend on:

Malicious ‘thought states’ could theoretically be injected without appearing in any logs. 18

Takeaway

RecursiveMAS is a credible entry in an active latent-MAS race, and its trained-recursion stability is a real contribution at deeper rounds. It is not, on the current evidence, the efficiency leader (LatentMAS), nor has it cleared the bar that the multi-agent structure itself — rather than the latent channel — is doing the work 1316. Treat the 8.3% as provisional until the training code ships and an equal-compute single-agent baseline exists.

Round-ups

AutoResearchBench: Benchmarking AI Agents on Complex Scientific Literature Discovery

Source: hf-daily-papers

AutoResearchBench tests AI agents on deep and wide scientific literature discovery tasks requiring agentic web browsing, and even frontier LLMs post low accuracy on the harder items. Code and a project page accompany the benchmark, positioning it as a stress test for autonomous research agents.

TCOD: Exploring Temporal Curriculum in On-Policy Distillation for Multi-turn Autonomous Agents

Source: hf-daily-papers

TCOD attributes on-policy distillation instability in multi-turn agents to trajectory-level KL blowup from compounding inter-turn errors, and fixes it with a temporal curriculum that gradually extends trajectory depth. Tests on ALFWorld, WebShop, and ScienceWorld show stronger student-teacher transfer.

V-GRPO: Online Reinforcement Learning for Denoising Generative Models Is Easier than You Think

Source: hf-daily-papers

V-GRPO casts denoising as a Markov decision process and pairs Group Relative Policy Optimization with a diffusion ELBO surrogate, cutting gradient-step variance for text-to-image RLHF. The authors report faster, more efficient human-preference alignment than prior online RL methods for diffusion models.

A Systematic Post-Train Framework for Video Generation

Source: hf-daily-papers

A post-training recipe for video diffusion models stacks supervised fine-tuning, RLHF with Group Relative Policy Optimization, prompt enhancement, and inference-time optimization to improve controllability, temporal coherence, and visual quality, presented as a systematic pipeline rather than a single technique.

BARRED: Synthetic Training of Custom Policy Guardrails via Asymmetric Debate

Source: hf-daily-papers

BARRED generates synthetic training data for custom guardrail policies by decomposing a policy into dimensions and running asymmetric multi-agent debate, then fine-tuning a classifier. The authors report it outperforms proprietary LLMs and dedicated guardrail systems on bespoke policy enforcement.

Programming with Data: Test-Driven Data Engineering for Self-Improving LLMs from Raw Corpora

Source: hf-daily-papers

ProDa reframes LLM training data as source code and evaluation as unit tests, letting practitioners debug concept-level gaps and reasoning-chain breaks in domain corpora the way engineers debug software. The approach targets self-improvement of LLMs from raw corpora without bigger models.

GoClick: Lightweight Element Grounding Model for Autonomous GUI Interaction

Source: hf-daily-papers

GoClick is a 230M-parameter vision-language model for GUI element grounding on mobile devices, using an encoder-decoder architecture with progressive data refinement and task-type filtering. It targets a device-cloud collaboration setting where heavier agent models offload click prediction to the phone.

Footnotes

-

OpenAI — Extending single-minus amplitudes to gravitons — https://openai.com/index/extending-single-minus-amplitudes-to-gravitons/

↩ ↩2an internal scaffolded reasoning model re-derived and formally proved the formulas from scratch in approximately 12 hours; the formulas were verified against the Berends–Giele recursion and Weinberg’s soft theorem

-

Institute for Advanced Study news — https://www.ias.edu/news/chatgpt-spits-out-surprising-insight-particle-physics

↩ChatGPT spits out surprising insight in particle physics

-

Hugging Face blog — David Louapre, ‘GPT and the single-minus gluons’ — https://huggingface.co/blog/dlouapre/gpt-single-minus-gluons

↩ ↩2the core physical insight — that these amplitudes could be non-zero in Klein space — originated with the human physicists; GPT-5.2 Pro acted as a superhuman algebraic simplifier rather than an autonomous discoverer

-

Matt von Hippel — 4 gravitons blog — https://4gravitons.com/2026/02/27/hypothesis-if-ai-is-bad-at-originality-its-a-documentation-problem/

↩ ↩2if AI is bad at originality, it’s a documentation problem — textbooks and papers only record polished arguments, omitting the twists and turns of the creative process

-

Investing.com / Sabine Hossenfelder commentary — https://www.investing.com/news/stock-market-news/openais-gpt52-discovers-new-physics-formula-for-gluon-interactions-93CH-4506591

↩AI is changing theoretical physics fast … but it could exacerbate the flood of nonsense in academic publishing by making it easier to generate technically correct but physically irrelevant papers

-

BiggoNews aggregation of Tao / Lupsasca remarks — https://finance.biggo.com/news/829e88aea29bf60d

↩I spend much less time being confused. … The amount of time you spend confused just dramatically shrinks and you move so much faster — verification is becoming the new bottleneck

-

GitHub: SylphAI-Inc/autoresearch-adal — https://github.com/SylphAI-Inc/autoresearch-adal

↩On an A10 24GB GPU over 49 hours, AdaL completed 336 experiments compared to only 76 by Claude Code… AdaL reached a superior validation BPB of 1.1048, a 4.3% improvement over Claude Code’s 1.1539.

-

TheAIEdge newsletter: AdalFlow PyTorch-like framework — https://newsletter.theaiedge.io/p/adalflow-a-pytorch-like-framework

↩AdaL adopts a ‘PyTorch-like’ design, utilizing textual auto-differentiation and supervised fine-tuning to treat prompts as differentiable parameters.

-

ZeroSync: Ralph Loop technical deep dive — http://www.zerosync.co/blog/ralph-loop-technical-deep-dive

↩A Ralph Loop restarts the agent with a fresh context window on every iteration… state is persisted externally in the file system using git history, progress logs, and structured specification files.

-

MindStudio: Khattab on DSPy auto-optimized harness — https://www.mindstudio.ai/blog/omar-khattab-dspy-auto-optimized-harness-haiku-terminalbench

↩An auto-optimized harness running a small model like Claude Haiku could outperform much larger models on TerminalBench 2 by processing millions of feedback tokens to rewrite its own control logic.

-

InfoSec Write-ups: Self-Evolving AI Agents — https://infosecwriteups.com/self-evolving-ai-agents-are-here-and-they-write-their-own-protocols-f3bcf13012a8

↩Security researchers warn of ‘Evolution Poisoning,’ where an attacker injects crafted tasks into an agent’s training batch to steer its evolving protocols toward adversarial objectives, such as skipping deep security analysis.

-

FailingFast.io: AI coding benchmarks guide — https://failingfast.io/ai-coding-guide/benchmarks/

↩Agentic benchmarks are often fragile… reward tampering, where an agent hacks its own evaluation metrics to show false progress, remains a persistent concern in unsupervised loops.

-

Towards Dev (Medium) — LatentMAS overview — https://medium.com/towardsdev/breaking-free-from-text-how-latentmas-enables-multi-agent-ai-systems-to-think-purely-in-a7b9fa58bbe5

↩ ↩2LatentMAS reports up to 14.6% higher accuracy than text-based MAS across 9 benchmarks … 4.3x faster inference and an 83.7% token reduction — without any training.

-

r/AI_Agents discussion of LatentMAS — https://www.reddit.com/r/AI_Agents/comments/1pb1idb/latentmas_new_ai_agent_framework/

↩Training-free framework that uses a Linear Alignment Matrix to map latent thoughts between agents without updating model weights — works out-of-the-box with any pre-trained transformer.

-

StartupHub.ai — ‘Scaling Agent Collaboration via Recursion’ — https://www.startuphub.ai/ai-news/ai-research/2026/scaling-agent-collaboration-via-recursion

↩ ↩2Critics note that recursive ‘critique loops’ (Self-Refine, Reflexion) have been established since 2023, and the gains attributed to RecursiveMAS may stem primarily from the latent channel’s ability to maintain high-fidelity information across rounds, rather than the recursion pattern itself.

-

Tran & Kiela 2026, ‘Single-Agent LLMs Outperform Multi-Agent Systems on Multi-Hop Reasoning Under Equal Thinking Token Budgets’ — https://www.researchgate.net/publication/403529711_Single-Agent_LLMs_Outperform_Multi-Agent_Systems_on_Multi-Hop_Reasoning_Under_Equal_Thinking_Token_Budgets

↩ ↩2When the total token budget is normalized, single-agent systems consistently match or outperform multi-agent architectures on complex multi-hop reasoning tasks across Qwen3 and Gemini 2.5.

-

Yutori Scouts repo tracker (RecursiveMAS GitHub issues) — https://scouts.yutori.com/inbox/e9d36d64-4bb3-4643-846f-1fa8e23590bc

↩Issue #1 and Issue #20 explicitly request the training scripts and the complete inference pipeline for the nine benchmarks … necessary training data has not yet been fully uploaded to Hugging Face.

-

Towards AI — practitioner writeup — https://pub.towardsai.net/groundbreaking-latent-state-recursive-multi-agent-systems-is-2-4x-faster-uses-75-6-cheaper-ddcba480ae02

↩ ↩2Standard prompt-injection defenses are ill-equipped for latent-space interactions, where malicious ‘thought states’ could theoretically be injected without appearing in any logs; the 13M-parameter RecursiveLink adapter requires specialized co-optimization for every new agent combination.