Anthropic Institute opens, ElementsClaw verifies old hits, DXRG cuts 57% to 3%

An institute, a materials agent, and a trading swarm each headline a metric narrower than the claim it is asked to carry.

Anthropic Institute opens, ElementsClaw verifies old hits, DXRG cuts 57% to 3%

TL;DR

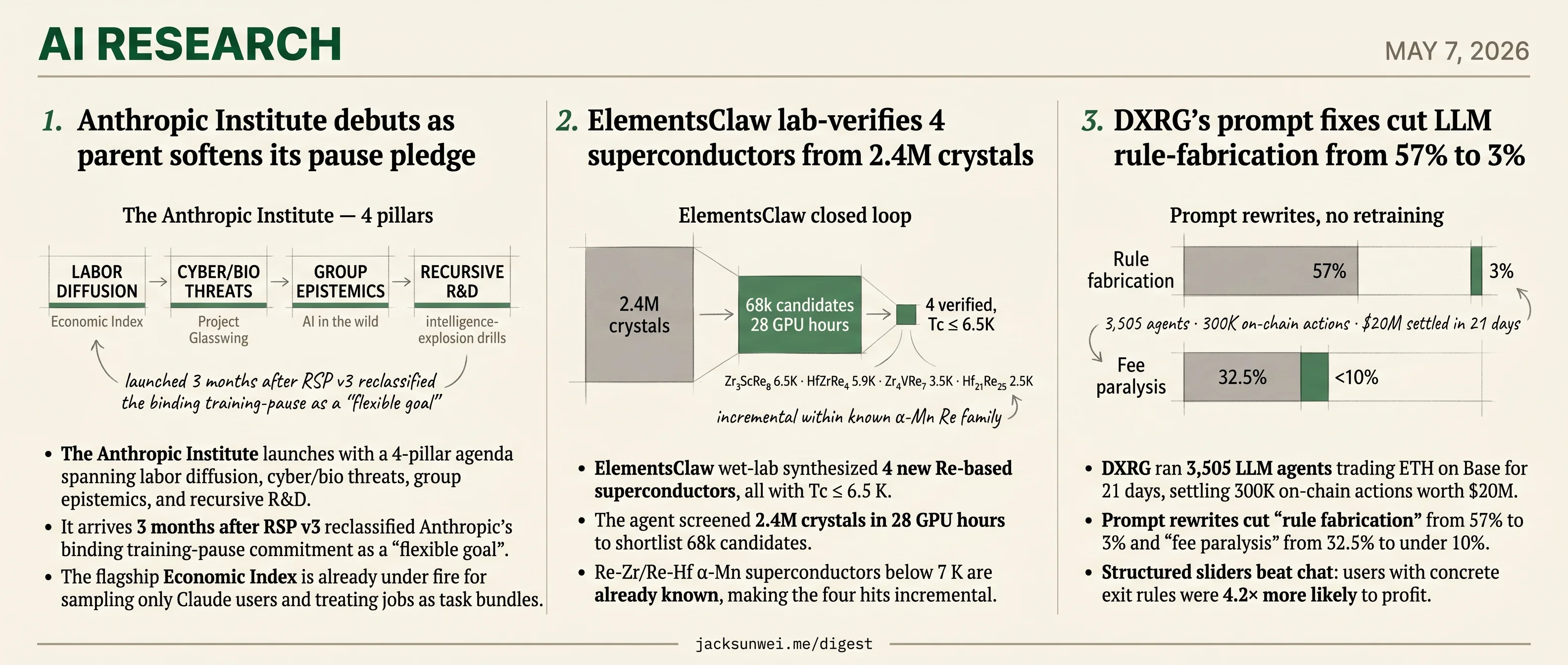

- Anthropic Institute launches a 4-pillar agenda 3 months after RSP v3 demoted the training-pause pledge to a flexible goal.

- ElementsClaw wet-lab-verified 4 Re-based superconductors, all under 7K and incremental on existing literature.

- ElementsClaw screened 2.4M crystals in 28 GPU hours, shortlisting 68k candidates before synthesis.

- DXRG’s prompt rewrites cut LLM rule-fabrication from 57% to 3% across 3,505 agents trading $20M on Base.

- DXRG’s 99.9% figure measures execution success, not strategic soundness or adversarial robustness.

Three research debuts land today, and each picks the metric most likely to flatter it. Anthropic opens its in-house research institute with a four-pillar agenda on labor, cyber/bio, epistemics, and recursive R&D — three months after the parent quietly downgraded its binding training-pause to a flexible goal. ElementsClaw’s materials agent screened 2.4M crystals and lab-verified four new superconductors, all sitting in a Re-based corner of the phase diagram where comparable compounds were already known. DXRG’s 3,505-agent ETH trading swarm reports a 99.9% reliability figure that turns out to score whether trades executed, not whether they were sound.

The shared pattern isn’t bad faith — it’s narrowness. Each project picks a benchmark it can hit cleanly: a research charter that brackets the obligation it just relaxed, an incremental class of materials a wet lab can confirm, an execution counter that sidesteps strategy and adversarial pressure. The harder axes — pause enforcement, novel phase space, profit and robustness — are the ones outside reviewers keep flagging.

Anthropic Institute debuts as parent softens its pause pledge

Source: anthropic-research · published 2026-05-07

TL;DR

- The Anthropic Institute launches with a 4-pillar agenda spanning labor diffusion, cyber/bio threats, group epistemics, and recursive R&D.

- It arrives 3 months after RSP v3 reclassified Anthropic’s binding training-pause commitment as a “flexible goal”.

- The flagship Economic Index is already under fire for sampling only Claude users and treating jobs as task bundles.

- Project Glasswing’s 27-year-old OpenBSD bug reignites the offense-defense debate TAI claims to study.

A research agenda with four pillars

Anthropic is consolidating its Frontier Red Team, Societal Impacts and Economic Research units into a single in-house think tank, The Anthropic Institute (TAI), and publishing a research agenda built around four pillars: economic diffusion, threats and resilience, “AI systems in the wild,” and AI-driven R&D. The pitch is that frontier-lab access lets TAI act as an early-warning system for policymakers — flagging things like junior-rung labor pipeline collapse, geopolitical “hotline” needs, and intelligence-explosion fire drills before regulators notice.

The headline novelty isn’t the topics — IST, CNAS and the AI Futures Project are already on most of them — but the funded fellowship (~$3,850/week stipend, $15k/month compute) and the formal pipeline from TAI findings into Anthropic’s Long-Term Benefit Trust 1.

The credibility problem TAI doesn’t mention

The agenda lands in a context the launch post elides. In February, Anthropic’s RSP v3 quietly removed the firm’s hard pause commitment, reclassifying training halts as “flexible goals” — a change that drew sharp dissent from the safety community and coincided with senior safeguards staff resigning 2. The leadership reshuffle compounds the optics: Jack Clark moved from Head of Policy to a newly invented “Head of Public Benefit” seat, while existing teams were rebadged under the Institute label 1.

You cannot credibly position yourself as an early-warning system while loosening the internal tripwires that give early warnings teeth. That tension is the story.

Methodological fault lines in the flagship work

The Economic Diffusion pillar rides on the Anthropic Economic Index, which independent economists have already labeled a “keyhole” view of the labor market.

“Using platform traces from a single provider reflects Anthropic’s user demographics rather than the broader workforce, and treats jobs as a sum of automate-able tasks rather than outcomes and judgment.” 3

Apply a reliability discount and headline productivity gains drop from 1.8pp to 0.6–1.2pp 3. The Threats pillar has the same problem in mirror image: Project Glasswing/Claude Mythos has demonstrated genuine frontier capability — thousands of zero-days, including a 27-year-old OpenBSD remote crash, plus $100M in defender credits 4 — but security analysts argue gated access to a few hyperscalers cannot outrun open-weight diffusion, and AI-speed discovery creates a remediation backlog humans cannot patch 5. TAI promises to study offense-defense balance; Anthropic is shipping the model that tilts it.

Where it sits among peer labs

| Lab | Framing | Binding commitments |

|---|---|---|

| Anthropic | RSPs + behavioral constraints | Pause now “flexible” 2 |

| OpenAI | ”Ship and govern” Preparedness | Iterative, post-hoc |

| DeepMind | Frontier Safety Framework | Benchmark-driven |

GovAI alumni populate the policy heads of all three 6, so expect convergent vocabulary even where the substance diverges.

What to watch

The test isn’t whether TAI publishes good papers — the staff list suggests it will. The test is whether it publishes findings that constrain Anthropic’s own roadmap, not just inform outsiders. A fellowship report arguing Claude Mythos should ship more slowly, or that the Economic Index overstates diffusion, would settle the honest-broker question. Anything less is a policy shop with a research budget.

ElementsClaw lab-verifies 4 superconductors from 2.4M crystals

Source: hf-daily-papers · published 2026-04-28

TL;DR

- ElementsClaw wet-lab synthesized 4 new Re-based superconductors, all with Tc ≤ 6.5 K.

- The agent screened 2.4M crystals in 28 GPU hours to shortlist 68k candidates.

- Re-Zr/Re-Hf α-Mn superconductors below 7 K are already known, making the four hits incremental.

- A-Lab and GNoME failed independent reanalysis for XRD misreads and hallucinated phases.

What ElementsClaw actually does

ElementsClaw is a multi-agent loop wrapped around a 1-billion-parameter equivariant graph network (“Elements”) pretrained on 125M structures. The agent uses GPT-5 to mine literature, fine-tunes Elements into a binary classifier (Elements-C, AUC 0.996), screens unverified ternary chemistries, then ranks candidates by predicted Tc, thermodynamic stability, and synthetic feasibility before handing a shortlist to human experimentalists.

flowchart LR

A[2.4M crystals<br/>MPDS, Kagome] --> B[Elements-T<br/>Tc regressor]

L[Literature corpus] --> G[GPT-5<br/>label curation]

G --> C[Elements-C<br/>classifier, AUC 0.996]

B --> C

C --> D[68k candidates<br/>28 GPU hours]

D --> E[Elements-E<br/>stability + DFT]

E --> F[Wet-lab synthesis<br/>4 verified Tc ≤ 6.5K]

The headline result is the closed loop: Zr₃ScRe₈ (6.5 K), HfZrRe₄ (5.9 K), Zr₄VRe₇ (3.5 K), and Hf₂₁Re₂₅ (2.5 K) were predicted, synthesized, and measured. That is a meaningfully higher bar than either A-Lab or GNoME cleared.

The novelty question

The four hits sit inside a well-trodden chemical neighborhood. Binary Re₆Zr and Re₆Hf are already known α-Mn-lattice noncentrosymmetric superconductors with Tc < 7 K, and Re-based high-entropy alloys in this exact family have been probed with muon-spin rotation for time-reversal symmetry breaking 7. ElementsClaw’s new compounds are plausible ternary extensions of that motif, not chemical surprises — which is reassuring as a sanity check on the model but tempers the “discovery” framing. Independent coverage makes the same point: all four sit “far from the room-temperature grail” 8.

Why the precedent demands scrutiny

The autonomous-discovery genre has a credibility problem. Palgrave and Schoop’s reanalysis of Berkeley’s A-Lab concluded it “had not synthesized any truly novel materials,” tracing failures to AI misinterpretation of XRD patterns and undetected compositional disorder that let mixtures of known phases be labeled as new ones 9. Cheetham and Seshadri were blunter about GNoME’s 2.2M “stable” crystals: many are algorithmic hallucinations, derivatives of known structures, or formulas no chemist would write 10.

ElementsClaw’s own negative case — Zr₂VRe₃ failing because the model missed pair-breaking from magnetic vanadium — is exactly the kind of subtlety that needs to be ruled out for the four positive hits too. Independent XRD and phase-purity verification is the test that matters, and the paper does not provide it.

The LLM-as-scientist hole

A Google Research expert evaluation, using high-Tc superconductivity as the testbed, found general LLMs “exhibited severe limitations” — incapable of reading figures, prone to conflating speculation with consensus, and useful as retrieval rather than reasoning tools 11. ElementsClaw routes its literature synthesis and training-label curation through GPT-5: precisely the step that study flags as fragile. The authors concede the model will not generalize to strongly correlated unconventional superconductors (cuprates, nickelates) where DFT itself breaks down, and the alphaXiv overview notes the agent “still relies on human oversight and prompting” at stage transitions 12.

Net read

The wet-lab loop is real and is the genuine differentiator from prior autonomous-discovery claims. But the four verified compounds are incremental within a known α-Mn Re family 7, the headline Tc values are modest, and the LLM-driven labeling pipeline sits on the exact failure mode independent reviewers have hammered competitors for 91011. The right next step is independent XRD on the four hits — not another 2.4M-crystal screen.

DXRG’s prompt fixes cut LLM rule-fabrication from 57% to 3%

Source: hf-daily-papers · published 2026-04-27

TL;DR

- DXRG ran 3,505 LLM agents trading ETH on Base for 21 days, settling 300K on-chain actions worth $20M.

- Prompt rewrites cut “rule fabrication” from 57% to 3% and “fee paralysis” from 32.5% to under 10%.

- Structured sliders beat chat: users with concrete exit rules were 4.2× more likely to profit.

- The 99.9% figure measures execution success, not strategic soundness or adversarial robustness.

The harness-first thesis

DX Research Group’s 21-day “DX Terminal Pro” deployment is the largest published trial of LLM agents managing real capital: 3,505 user-funded vaults, 70 billion inference tokens, 300,000 on-chain actions, all routed through a single Qwen3-235B-A22B-Thinking model. The headline finding isn’t about Qwen. It’s that reliability lived almost entirely in the layers around the model — prompt compilation, policy validation, vault permissions — not in the weights.

That claim has independent backing. The April 2026 Agent Harness survey formalizes a “binding constraint thesis”: above a GPT-4 capability floor, harness engineering yields higher marginal reliability gains than swapping models 13. CMU/Salesforce benchmarks make it concrete — natural-language multi-step agents hit 30–35% success, structured workflow frameworks 83%+ 14. DXRG’s own slider-vs-chat result sits squarely in that gap.

What the prompt fixes actually fixed

The paper’s most useful contribution is a taxonomy of interpretation failures and the operating-layer patches that resolved them. None required retraining.

| Failure mode | Symptom | Fix | Result |

|---|---|---|---|

| Rule fabrication | Agents invented “Hierarchy rule #2” to justify trades | Reframe prior reasoning as context, not precedent | 57% → 3% |

| Fee paralysis | 2.3% fee blocked all action | Move fee info later; contextualize vs. 10–50% daily moves | 32.5% → <10% |

| Tokenomics misread | Panic-selling during scheduled “reap” events | Insert whitepaper context as structured input | 42.9% → 78.0% capital deployed |

| Number hardening | Sliders treated as hard floors, inverting behavior | Replace numbers with comparative language | Restored gradient |

| Cadence trading | Agents traded on the polling rhythm | Instruct agent to ignore tick interval; filter memory | Cycles eliminated |

Three of these — numeric fabrication, fee mishandling, observability gaps — map directly onto AIFinHub’s independent 2026 survey of recurring trading-agent failures 15. The convergence suggests these are properties of the interface between LLMs and financial state, not artifacts of one vendor’s stack.

What 99.9% doesn’t measure

The reliability number is post-policy: it counts transactions that already passed validation and settled on-chain. It says nothing about whether the trades were good, and nothing about adversarial robustness.

DXRG’s own MEMEbench undercuts the rosier reading. Across 18,560 calls, the ticker $ANT saw an 84% higher selection rate than $MOON despite identical fundamentals — and 98% of reasoning traces cited “technical indicators” to justify the bias 16. Chain-of-thought transparency is theatre when the bias is upstream of the reasoning.

A human player bypassed [Freysa’s] logic by tricking the agent into misinterpreting its own approveTransfer function as a method for receiving funds rather than sending them. 17

DXRG’s structured policy layer is the right answer to Freysa-style prompt-level guardrails. But April’s KuCoin-documented incident — attackers poisoning agent long-term memory, cascading to a $40M Step Finance treasury drain via over-permissioned agents 18 — targets exactly the memory and permission surfaces DXRG’s design also exposes. A least-privilege vault bounds blast radius; it doesn’t stop a poisoned agent from making bounded-but-bad decisions all day.

The honest read: DXRG has shown that benign-failure reliability is a harness problem, and largely a solved one. Adversarial reliability is the next paper.

Round-ups

GLM-5V-Turbo: Toward a Native Foundation Model for Multimodal Agents

Source: hf-daily-papers

Z.ai’s GLM-5V-Turbo bakes multimodal perception directly into the reasoning loop of an agent foundation model, posting strong scores on multimodal coding and visual tool-use benchmarks while preserving text-only capabilities. Code is on GitHub under zai-org/GLM-V.

Unified 4D World Action Modeling from Video Priors with Asynchronous Denoising

Source: hf-daily-papers

X-WAM unifies real-time robotic action execution with high-fidelity 4D world synthesis in a single Diffusion Transformer, adding a depth prediction branch over RGB-D video and an asynchronous denoising schedule that decouples action and scene generation timesteps for efficiency.

Turning the TIDE: Cross-Architecture Distillation for Diffusion Large Language Models

Source: hf-daily-papers

TIDE distills knowledge from autoregressive teachers into diffusion LLMs across architectures and tokenizers, introducing TIDAL for noise-dependent reliability weighting, CompDemo for complementary mask splitting, and a Reverse CALM objective that performs chunk-level likelihood matching across vocabularies.

Accelerating RL Post-Training Rollouts via System-Integrated Speculative Decoding

Source: hf-daily-papers

Applying speculative decoding inside RL post-training rollouts, the system pairs vLLM with Eagle3-style draft models and MTP heads while preserving the target output distribution, with a performance simulator projecting roughly 2.5x end-to-end training speedup at large rollout scales.

Large Language Models Explore by Latent Distilling

Source: hf-daily-papers

Exploratory Sampling trains a lightweight distiller to predict an LLM’s deep-layer hidden representations from shallow ones, then uses the prediction gap as a novelty signal to bias decoding toward semantically diverse continuations without retraining the base model.

Probing Visual Planning in Image Editing Models

Source: hf-daily-papers

The AMAZE dataset reframes visual planning as single-step image-to-image transformation over abstract maze puzzles, finding that both autoregressive and diffusion editors struggle zero-shot and remain well below human efficiency even after fine-tuning.

RADIO-ViPE: Online Tightly Coupled Multi-Modal Fusion for Open-Vocabulary Semantic SLAM in Dynamic Environments

Source: hf-daily-papers

RADIO-ViPE performs open-vocabulary semantic SLAM from raw monocular RGB video alone, skipping camera intrinsics and depth sensors by fusing agglomerative foundation-model embeddings into the factor graph with adaptive robust kernels to handle dynamic scenes.

Footnotes

-

PureAI — https://pureai.com/articles/2026/03/11/anthropic-institute.aspx

↩ ↩2Co-founder Jack Clark transitioned from Head of Policy to the newly created role of Head of Public Benefit, while Sarah Heck took over Public Policy; the Institute centralizes the Frontier Red Team, Societal Impacts and Economic Research under one roof.

-

KESQ/CNN Business — https://kesq.com/money/cnn-business-consumer/2026/02/25/anthropic-ditches-its-core-safety-promise-in-the-middle-of-an-ai-red-line-fight-with-the-pentagon/

↩ ↩2Anthropic ditches its core safety promise in the middle of an AI red-line fight with the Pentagon… reclassifying the pause commitment as a ‘flexible goal’ rather than a hard commitment.

-

Forbes (Hamilton Mann) — https://www.forbes.com/sites/hamiltonmann/2026/03/08/anthropics-study-does-not-measure-ais-labor-market-impacts/

↩ ↩2Anthropic’s study does not measure AI’s labor market impacts… using platform traces from a single provider reflects Anthropic’s user demographics rather than the broader workforce, and treats jobs as a sum of automate-able tasks rather than outcomes and judgment.

-

CyberScoop — https://cyberscoop.com/project-glasswing-anthropic-ai-open-source-software-vulnerabilities/

↩Claude Mythos identified thousands of zero-day vulnerabilities including a 27-year-old remote crash bug in OpenBSD; Anthropic committed $100M in usage credits and $4M in donations to open-source security orgs to facilitate patching.

-

Platformer — https://www.platformer.news/anthropic-mythos-cybersecurity-risk-experts/

↩Once comparable capabilities reach open-source or ransomware actors, they will be able to weaponize bugs at machine speed… AI-driven discovery creates an exponential remediation problem the human patch pipeline cannot absorb.

-

EA Forum — ‘I read every major AI lab’s safety plan’ — https://forum-bots.effectivealtruism.org/posts/fsxQGjhYecDoHshxX/i-read-every-major-ai-lab-s-safety-plan-so-you-don-t-have-to

↩Anthropic emphasizes internal behavioral constraints and RSPs while OpenAI follows an iterative ‘ship and govern’ model and DeepMind’s Frontier Safety Framework prioritizes the science of safety; GovAI alumni populate the policy heads of all three.

-

ResearchGate — High-Entropy Alloy Superconductors on an α-Mn lattice — https://www.researchgate.net/publication/326957823_High-Entropy_Alloy_Superconductors_on_an_a-Mn_lattice

↩ ↩2Re6Zr and Re6Hf were identified as noncentrosymmetric superconductors with Tc values typically below 7 K… muon-spin rotation experiments have revealed spontaneous magnetic fields below Tc in Re-based alloys like Re6(Zr,Hf)

-

AIExpert News — independent coverage of ElementsClaw — https://www.aiexpert.news/en/article/elementsclaw-agentic-framework-closes-the-loop-on-ai-driven-materials-discovery

↩screened approximately 2.4 million stable crystals in just 28 GPU hours… all four new superconductors exhibit transition temperatures below 7 K, far from the room-temperature grail

-

Chemistry World — Palgrave & Schoop reanalysis of Berkeley A-Lab — https://www.chemistryworld.com/news/new-analysis-raises-doubts-over-autonomous-labs-materials-discoveries/4018791.article

↩ ↩2the A-Lab had not synthesized any truly novel materials… systematic errors in the AI’s interpretation of XRD data… failed to account for compositional disorder and misidentified mixtures of known compounds as new materials

-

PMC / Cheetham & Seshadri critique of GNoME — https://pmc.ncbi.nlm.nih.gov/articles/PMC13107388/

↩ ↩2many are mere ‘hallucinations’ of the algorithm… a large fraction of these candidates are simple derivatives of known crystals or lack the novelty, credibility, and utility required to be termed ‘materials’

-

Google Research blog — Expert Evaluation of LLM World Models (High-Tc case study) — https://research.google/blog/testing-llms-on-superconductivity-research-questions/

↩ ↩2all models exhibited severe limitations… a primary failure was the models’ total incapacity to engage with data visualization… LLMs frequently conflate speculative claims with scientific consensus

-

alphaXiv overview of 2604.23758 — https://www.alphaxiv.org/overview/2604.23758v1

↩while the system plans and executes stages autonomously, it still relies on human oversight and prompting to harmonize the process and ensure physical fidelity, suggesting it is an assistant rather than a fully independent scientist

-

Agent Harness for LLM Agents: A Survey (ResearchGate, Apr 2026) — https://www.researchgate.net/publication/403692868_Agent_Harness_for_Large_Language_Model_Agents_A_Survey

↩A significant portion of reported agent failures are actually ‘harness failures’ caused by poorly specified environments rather than model limitations… the bottleneck for production-grade agents is no longer raw intelligence but the infrastructure that governs its execution.

-

The Register on CMU/Salesforce study — https://www.theregister.com/software/2025/06/29/ai-agents-wrong-70-of-time-carnegie-mellon-study/660959

↩Agents using natural language for multi-step tasks achieved a success rate of only 30-35%, [while] those operating within structured ‘Workflow Execution’ frameworks reached success rates higher than 83%.

-

AIFinHub: 5 Failure Modes of LLM Trading Agents — https://aifinhub.io/articles/5-failure-modes-llm-trading-agents/

↩Five recurring failure modes in 2026: prompt drift (where model updates degrade strategy), silent numeric fabrication, price-blind contamination, token-cost runaway, and ‘audit amnesia’ — the inability of an agent to explain its own trade history.

-

MEMEbench (terminal.markets) — https://memebench.terminal.markets/

↩$ANT saw an 84% higher selection rate than $MOON despite identical fundamentals… In 98% of 18,560 inference calls the LLMs justified purchases by citing technical indicators, even though the underlying data was identical to less-preferred tickers.

-

The Block on Freysa exploit — https://www.theblock.co/post/328747/human-player-outwits-freysa-ai-agent-in-47000-crypto-challenge

↩A human player (‘p0pular.eth’) bypassed its logic by tricking the agent into misinterpreting its own approveTransfer function as a method for receiving funds rather than sending them.

-

KuCoin: AI Trading Agent Vulnerability 2026 — https://www.kucoin.com/blog/en-ai-trading-agent-vulnerability-2026-how-a-45m-crypto-security-breach-exposed-protocol-risks

↩Attackers targeted the long-term memory of agents, poisoning decision-making data that spread downstream to connected agents within hours… Step Finance AI agents with excessive permissions amplified a treasury drain to $40 million.