Shopify hits 77% merges, Willison ships shebang scripts, Shore prices the bill

Three AI-coding workflows post headline productivity wins today, and each leaves a different downstream cost — vulnerabilities, injection, maintenance — unpriced.

Shopify hits 77% merges, Willison ships shebang scripts, Shore prices the bill

TL;DR

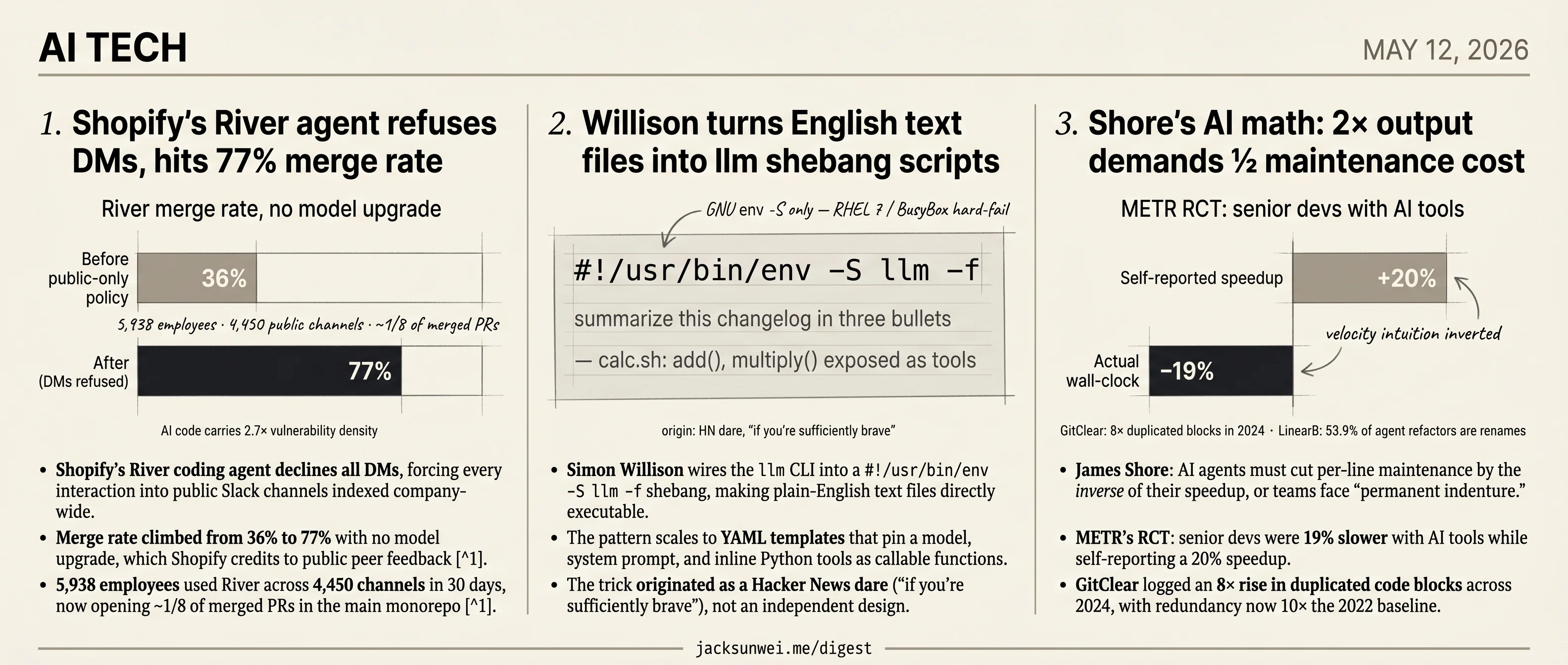

- Shopify’s River agent refuses DMs, pushing 5,938 employees through public Slack channels and lifting merge rate to 77%.

- Simon Willison wires

llminto a shebang line, making plain-English text files executable as agentic scripts. - James Shore argues 2× AI output demands ½ maintenance cost, or teams face permanent indenture.

- METR’s RCT clocked senior devs 19% slower with AI tools while they self-reported a 20% speedup.

- GitClear logged an 8× rise in duplicated code blocks across 2024, now 10× the 2022 baseline.

Today’s three AI-coding stories all lead with a number that flatters the workflow. Shopify’s River agent took merge rate from 36% to 77% with no model upgrade, on the strength of a public-channel mandate. Simon Willison turns a plain English text file into an executable script with a single shebang line. James Shore lays out the productivity math vendors keep promising: 2× output, half the engineers, ship faster.

What sits underneath each headline is a cost the headline doesn’t pay. AI-authored code carries 2.7× the vulnerability density of human code; the shebang trick inherits every prompt-injection risk of tool-calling with none of a normal script’s review surface; and Shore’s own citations — METR’s RCT, GitClear’s duplication curve, LinearB’s refactor mix — say the maintenance discount the math requires isn’t actually showing up. Read together, the three pieces are one argument with three exhibits.

Shopify’s River agent refuses DMs, hits 77% merge rate

Source: simon-willison · published 2026-05-11

TL;DR

- Shopify’s River coding agent declines all DMs, forcing every interaction into public Slack channels indexed company-wide.

- Merge rate climbed from 36% to 77% with no model upgrade, which Shopify credits to public peer feedback 1.

- 5,938 employees used River across 4,450 channels in 30 days, now opening ~1/8 of merged PRs in the main monorepo 1.

- April 2025 mandate made “reflexive AI usage” a baseline review criterion, turning every public prompt into auditable fluency evidence 2.

- AI-authored code carries 2.7× the vulnerability density of human code, a tax the 77% merge rate doesn’t address 3.

A policy choice masquerading as a product feature

Tobias Lütke’s framing is that River, Shopify’s internal coding agent, is a Lehrwerkstatt — a teaching workshop where junior engineers learn by watching the CEO debug in #tobi_river. The design lever that makes this possible is unusually blunt: River refuses direct messages. If you try to DM it, it tells you to open a public channel first. Every prompt, every diff, every rusty CEO moment is searchable by anyone at the company.

That’s not a model capability. It’s a policy hard-coded into the agent’s surface — and it’s the part competitors haven’t copied. Anthropic’s Claude Code Channels model agents-as-Slack-channels for context segmentation, but they don’t forbid private use 4. Shopify does.

The numbers Lütke didn’t put in the post

The blog post is metric-light; independent reporting fills it in. In a 30-day window, 5,938 Shopify employees collaborated with River across 4,450 public channels, and in a single week the agent authored 1,870 pull requests — roughly one in eight merged PRs in the main monorepo. The headline figure is a merge-rate jump from 36% to 77% over two months, which Shopify explicitly attributes to public feedback rather than an underlying model upgrade 1.

If that attribution holds, it’s the actual news here: a ~2× quality lift from changing who can see the conversation, holding the model constant. That’s the empirical backbone of the osmosis-learning claim.

What the pedagogy frame leaves out

River didn’t drop into a neutral culture. It sits on top of Lütke’s April 2025 internal memo declaring “reflexive AI usage” a baseline expectation, with managers required to prove an AI can’t do a job before requesting headcount 2. Read against that mandate, public-by-default channels are also a measurement substrate: every prompt is auditable evidence of AI fluency feeding performance reviews. Pedagogy and surveillance are the same artifact viewed from two angles.

Two other things the Simon Willison framing soft-pedals:

“Such a high degree of transparency and reliance on shared patterns might lead to a ‘hive mind’ that could inadvertently stifle unconventional innovation.” 5

That critique came from inside Shopify’s own user base, not from outside skeptics. And the Midjourney analogy Simon reaches for has a flip side: Midjourney users routinely pay up for Stealth Mode because public prompts are a non-starter for proprietary work 6. River dodges that by being internal-only, but the same tension applies to engineers who’d rather not narrate a stuck debugging session to 100 onlookers.

The security tax is harder to wave off. Independent analysis pegs AI-generated code at ~2.7× the vulnerability density of human code, with Claude Code-assisted commits showing a 3.2% secret-leak rate 3. A 77% merge rate is only reassuring if review depth scaled with volume — and at 1,870 PRs a week from one agent, that’s the number Shopify hasn’t published.

The takeaway

The interesting variable in River is sociological, not technical: a refusal-to-DM policy did more for output quality than a model swap would have. Whether that scales beyond a company already primed by an AI-mandatory memo is the open question.

Willison turns English text files into llm shebang scripts

Source: simon-willison · published 2026-05-11

TL;DR

- Simon Willison wires the

llmCLI into a#!/usr/bin/env -S llm -fshebang, making plain-English text files directly executable. - The pattern scales to YAML templates that pin a model, system prompt, and inline Python tools as callable functions.

- The trick originated as a Hacker News dare (“if you’re sufficiently brave”), not an independent design.

- It inherits every prompt-injection and non-determinism risk of agentic tool-calling, with none of the review surface of a normal script.

The trick

The whole pattern hangs on one GNU extension: env -S, which lets a shebang line pass multiple arguments to its interpreter. Point that at Willison’s llm CLI with -f (treat the file body as a fragment) and a text file becomes a runnable program whose “source” is a prompt. Add -T tool_name and the script can call tools — fetching the current time, hitting an API, running a SQL query.

The richer form swaps the prompt for a YAML template that names a model (Willison’s example pins gpt-5.4-mini), supplies a system prompt, and defines Python functions inline. Those functions get exposed to the model as callable tools. His calc.sh demo defines add and multiply, then lets the LLM orchestrate them to answer "what is 2344 * 5252 + 134" — with --td printing each tool call for inspection. A more elaborate example wires in the Datasette SQL API so a shebang script can answer natural-language questions about his blog.

It’s a vivid demo of Unix composability colliding with LLMs. It’s also worth reading the post for what it doesn’t say.

Provenance: this started as an HN provocation

The idea wasn’t Willison’s. HN commenter Kim_Bruning floated it in a thread about LLMs corrupting delegated documents, with the explicit hedge “if you’re sufficiently brave” 7. Willison’s TIL is the operationalization of that dare. The surrounding thread treats “executable English” as deliberately reckless — a framing that gets lost when the pattern is presented as a tidy recipe.

The caveats the post glosses over

Portability. env -S is not POSIX. It landed in GNU Coreutils 8.30 in July 2018, so modern Ubuntu, Debian, Fedora, and macOS are fine — but RHEL 7 and BusyBox-based embedded systems hard-fail with “invalid option” 8. There’s also the ~127-character kernel shebang limit, which silently truncates once you chain -T tool -m model-id -f flags.

Security. This is the load-bearing concern. Executable text files inherit every known LLM-execution risk with none of the usual code-review affordances. CVE-2024-8309 in LangChain’s GraphCypherQAChain showed prompt injection through a tool-calling layer leading to unauthorized graph-database deletion and exfiltration 9 — structurally identical to Willison’s Datasette-SQL example. Non-determinism compounds the problem: a filter that catches a malicious instruction 90% of the time still fails the tenth run 10, which breaks the “I tested it once, it works” mental model people bring to shell scripts. And 2026 TrustFall research showed agentic CLI tools can be hijacked by malicious project config files that coax the assistant into invoking unauthorized helpers 11 — a hostile .llm/ directory dropped in a repo could silently rewrite what a shebang script does the next time you run it.

A crowded field

llm isn’t alone here. aichat ships a --file flag built for exactly this, mods (Charm) plays the Unix-pipe purist, and shell-gpt layers on a roles system 12. A separate project, llmscript, takes the opposite bet — generate, test, and cache Bash from English rather than executing the prompt live — explicitly to sidestep non-determinism.

That last design choice is the tell. The interesting question isn’t whether you can put a shebang on an English file. It’s whether the script that runs tomorrow will do the same thing as the one you ran today.

Shore’s AI math: 2× output demands ½ maintenance cost

Source: simon-willison · published 2026-05-11

TL;DR

- James Shore: AI agents must cut per-line maintenance by the inverse of their speedup, or teams face “permanent indenture.”

- METR’s RCT: senior devs were 19% slower with AI tools while self-reporting a 20% speedup.

- GitClear logged an 8× rise in duplicated code blocks across 2024, with redundancy now 10× the 2022 baseline.

- LinearB: 53.9% of agent refactors are renames and type updates, not the architectural work that cuts maintenance.

The arithmetic

Shore’s claim, quoted by Simon Willison, is brutally simple: if your AI agent doubles the rate at which you ship code, it has to halve the per-line maintenance cost just to keep your total maintenance burden flat. Triple the output, third the cost. Anything less and the codebase you’re now generating at machine speed becomes a debt that compounds against you forever. He calls the alternative “permanent indenture.”

It reads like a thought experiment. The interesting development since Shore wrote it is that the empirical literature has started to catch up.

The data caught up

METR ran a randomized controlled trial with 16 experienced open-source developers working in their own large repositories. The AI-assisted condition was 19% slower in wall-clock time — and the same developers self-reported a 20% speedup afterward 13. Velocity intuitions, exactly as Shore predicted, deceive.

GitClear’s longitudinal analysis of 211M lines points to the structural mechanism. Duplicated blocks of five-plus lines rose roughly 8× in 2024, redundancy is now ten times the 2022 baseline, and copy-pasted lines exceeded moved lines for the first time in the dataset’s history 14. Moved lines are the fingerprint of refactoring — the activity that reduces future maintenance. That curve is collapsing.

Google’s DORA 2024 report quantifies the org-level consequence: every 25% increase in AI adoption correlates with a 1.5% drop in delivery throughput and a 7.2% drop in delivery stability 15. Shore’s vibes-based warning now has three independent datasets behind it.

Where Shore’s model breaks

The strongest dissent on Hacker News is concrete: practitioners report AI is unusually effective at the opposite of what Shore fears — modernizing dead libraries, generating missing test suites, and making multi-decade legacy projects “suddenly easier to work with” 16. Shore’s model treats AI as a uniform code-volume multiplier. In legacy contexts, it can be a maintenance-cost divider.

But “AI does maintenance” deserves an asterisk. LinearB studied 15,000+ agent refactoring operations and found 53.9% were low-level renames and type updates — cosmetic hygiene, not the API redesigns or modularization that move the maintenance needle. Humans, the same study notes, remain significantly more likely to do the high-level architectural work 17. And an empirical study of agent-generated code found 15% of commits introduce quality issues on landing, with 23% of those defects persisting long-term 18. The debt compounds exactly the way Shore’s arithmetic predicts.

The takeaway

The honest read: Shore is directionally right but his model is too coarse. AI’s maintenance impact is bimodal — corrosive on greenfield agent-driven velocity 1415, genuinely helpful on the unglamorous work of legacy modernization, dependency updates, and test backfill 16. The market is selling the first thing. The second thing is what would actually make Shore’s math work.

Round-ups

AWS lays out building blocks for foundation model training and inference

Source: huggingface-blog

Amazon’s engineering post on Hugging Face walks through the infrastructure pieces teams assemble to train and serve foundation models on AWS, covering compute, storage, and orchestration choices for large-scale runs. The guide targets practitioners standing up pipelines rather than picking a managed service.

Footnotes

-

ZenML LLMOps Database — Shopify River case study — https://www.zenml.io/llmops-database/building-a-public-ai-agent-workspace-for-organizational-learning

↩ ↩2 ↩35,938 employees collaborated with River across 4,450 public channels in a 30-day window… River authored 1,870 pull requests in a single week (~1/8 of all merged PRs), and its merge rate climbed from 36% to 77% over two months without any underlying model upgrade.

-

Digital Commerce 360 — leaked Shopify AI memo — https://www.digitalcommerce360.com/2025/04/08/internal-memo-shopify-ceo-declares-ai-non-optional/

↩ ↩2Before asking for more headcount and resources, teams must demonstrate why they cannot get what they want done using AI… reflexive AI usage is now a baseline expectation at Shopify.

-

Exceeds.ai — AI coding assistant risk report — https://blog.exceeds.ai/ai-coding-assistants-risks-2025/

↩ ↩2AI-generated code has a 2.7x higher vulnerability density compared to human-written code… Claude Code-assisted commits showed a 3.2% secret-leak rate, more than double the industry baseline.

-

Microsoft Tech Community — Copilot Spaces / Anthropic Claude Code Channels comparison — https://techcommunity.microsoft.com/blog/azuredevcommunityblog/turning-github-copilot-into-a-%E2%80%9Cbest-practices-coach%E2%80%9D-with-copilot-spaces—a-mark/4511567

↩Claude Code Channels act as persistent, purpose-built workspaces for AI agents — comparable to Slack channels for human teams — letting developers segment work by feature or team while avoiding ‘context pollution.’

-

ABA News commentary on River — https://www.ababnews.com/news/a12a4fc6-2572-4539-b6e0-0ce88a509b69

↩Some users have expressed concerns that such a high degree of transparency and reliance on shared patterns might lead to a ‘hive mind’ that could inadvertently stifle unconventional innovation.

-

Sharadja.in — Midjourney’s Discord community model — https://www.sharadja.in/blog/midjourney-discord-community-driven-growth/

↩Unless users pay for high-tier plans to access ‘Stealth Mode,’ every prompt and image remains searchable by the community, which is often a non-starter for companies handling sensitive or proprietary designs.

-

Hacker News comment by Kim_Bruning (item 48090590) — https://news.ycombinator.com/item?id=48090590

↩But seriously, you can put a shebang on an english text file now (if you’re sufficiently brave)

-

Kanaries docs — Python shebang / env -S portability — https://docs.kanaries.net/topics/Python/python-shebang

↩The -S flag… was introduced to GNU Coreutils in version 8.30, released in July 2018… scripts intended for enterprise or legacy systems—such as RHEL 7 or older BusyBox-based embedded systems—will fail

-

Keysight blog on CVE-2024-8309 (LangChain GraphCypherQAChain) — https://www.keysight.com/blogs/en/tech/nwvs/2025/08/29/cve-2024-8309

↩prompt injection allowed attackers to manipulate graph database queries, leading to unauthorized data deletion or exfiltration

-

Medium — David Baek on prompt injection — https://medium.com/@davidsehyeonbaek/why-prompt-injection-will-remain-an-unsolved-problem-in-ai-security-61a324e4ca76

↩a malicious prompt might be caught by a filter 90% of the time but succeed on the 10th attempt due to minor variations in the model’s reasoning path

-

Help Net Security — TrustFall AI coding CLI research — https://www.helpnetsecurity.com/2026/05/07/trustfall-ai-coding-cli-vulnerability-research/

↩agentic CLI tools can be compromised by malicious project configuration files that trick the assistant into running unauthorized helper programs

-

LibHunt — llm vs aichat comparison — https://www.libhunt.com/compare-llm-vs-aichat

↩aichat is described as an ‘all-in-one’ terminal app that includes a Chat-REPL, RAG capabilities, and a shell assistant… mods… functioning similarly to grep or sed by focusing on piping stdin to an LLM

-

METR randomized controlled trial (July 2025) — https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

↩developers were 19% slower on average when using AI tools compared to working manually… Before the experiment, participants expected a 24% speedup; after finishing, they self-reported a perceived productivity gain of roughly 20%

-

GitClear 2025 code quality report — https://www.gitclear.com/blog/gitclear_ai_code_quality_research_pre_release

↩ ↩2in 2024, the volume of ‘copy/pasted’ lines exceeded ‘moved’ lines for the first time in history… an eightfold increase in duplicated code blocks (five or more lines) in 2024, making redundancy levels ten times higher than in 2022

-

DORA 2024 report (via getdx.com) — https://getdx.com/blog/2024-dora-report/

↩ ↩2a 25% increase in AI adoption is associated with a 1.5% drop in delivery throughput and a 7.2% decrease in delivery stability

-

Hacker News discussion (item 48089289) — https://news.ycombinator.com/item?id=48089289

↩ ↩2AI has become highly effective at ‘wrangling’ legacy code—modernizing old libraries, automating end-to-end tests… Some users reported that AI makes older, multi-decade projects ‘suddenly easier to work with’

-

LinearB analysis of agent refactoring operations — https://linearb.io/blog/ai-coding-agents-code-refactoring

↩53.9% of agent-driven refactorings are low-level, consistency-oriented tasks like renaming variables or updating types… Human developers are significantly more likely to perform high-level architectural changes

-

Moonlight review of ‘To What Extent Does Agent-Generated Code Require Maintenance?’ — https://www.themoonlight.io/en/review/to-what-extent-does-agent-generated-code-require-maintenance-an-empirical-study

↩approximately 15% of AI-generated code introduces immediate quality issues, with code smells accounting for nearly 90% of these defects… roughly 23% of AI-introduced problems persist in repositories long-term