llm 0.32a2 adopts Responses API, CSP tool gates fetches, Codex repros segfault

Three Simon Willison shipments touch three stack layers today: an OpenAI API switch, a CSP iframe guard, and a Datasette segfault fix.

llm 0.32a2 adopts Responses API, CSP tool gates fetches, Codex repros segfault

TL;DR

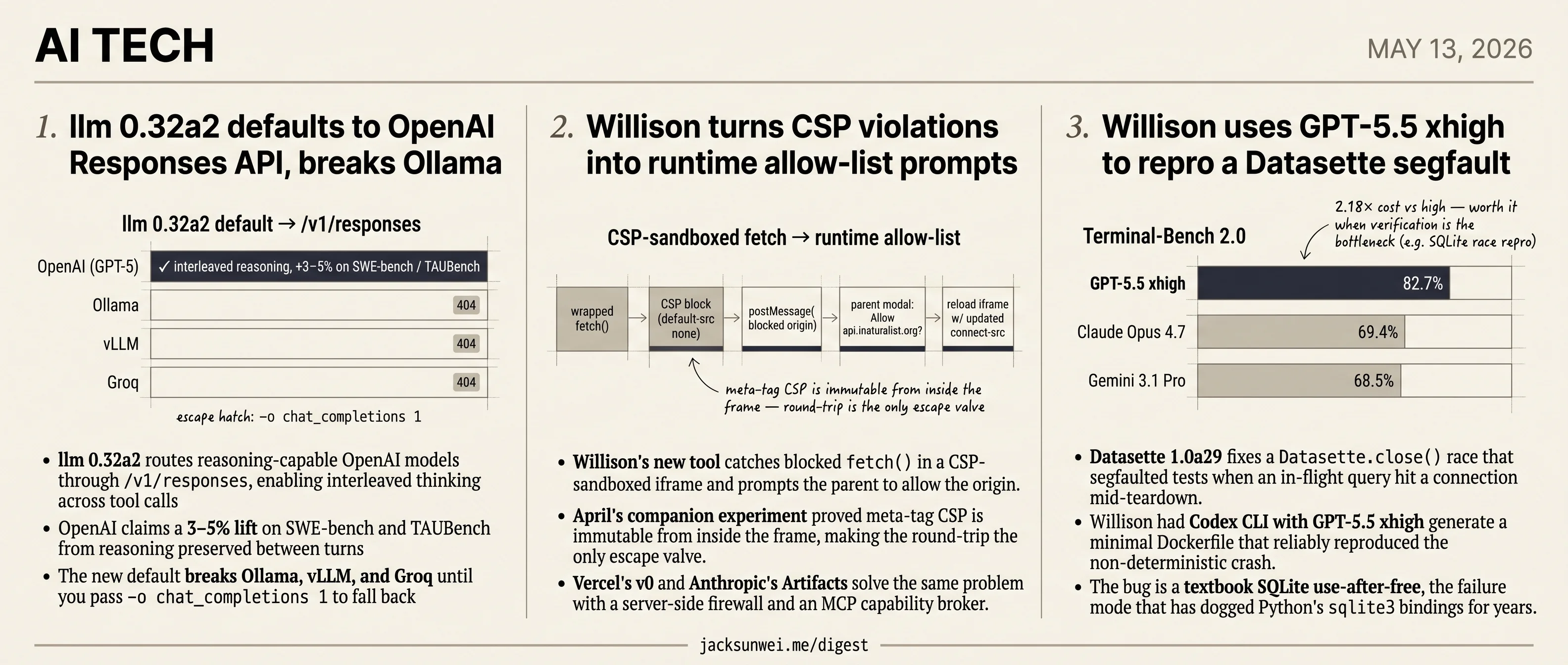

- llm 0.32a2 defaults to OpenAI’s Responses API for 3–5% reasoning gains, breaking Ollama, vLLM, and Groq.

- Willison’s CSP tool routes blocked iframe fetches through parent-window allow prompts, risking reflex-click fatigue at agent scale.

- Codex CLI with GPT-5.5 xhigh reproduced a Datasette segfault rooted in Python’s long-buggy

sqlite3bindings. - Vercel’s v0 and Anthropic’s Artifacts solve the same iframe-isolation problem with server-side firewalls and MCP capability brokers.

Today’s AI tech section is, by accident of timing, an all-Simon Willison day: three small shipments from the same author, each landing at a different layer of the LLM tooling stack. There’s no single thread to force — the connection is the author and the surface area, not a shared verdict.

llm 0.32a2 switches to OpenAI’s /v1/responses endpoint by default for a 3–5% reasoning lift, breaking every non-OpenAI backend until users opt back into chat completions. A CSP-sandboxed iframe pattern lets agent-generated code request fetch() permissions through the parent window — solving a real isolation problem, and inviting an obvious prompt-fatigue one. And Codex CLI with GPT-5.5 xhigh reproduced a non-deterministic Datasette segfault that turned out to be a textbook SQLite use-after-free — fixed in 1.0a29, but emblematic of how long Python’s sqlite3 bindings have carried that failure mode.

llm 0.32a2 defaults to OpenAI Responses API, breaks Ollama

Source: simon-willison · published 2026-05-12

TL;DR

- llm 0.32a2 routes reasoning-capable OpenAI models through

/v1/responses, enabling interleaved thinking across tool calls - OpenAI claims a 3–5% lift on SWE-bench and TAUBench from reasoning preserved between turns

- The new default breaks Ollama, vLLM, and Groq until you pass

-o chat_completions 1to fall back - Critics read the Responses API as deliberate lock-in — stateful, partially encrypted, designed to hide chain-of-thought from rival labs

What the endpoint switch actually buys

Simon Willison’s one-line release note (“Most reasoning-capable OpenAI models now use the /v1/responses endpoint”) understates the change. The migration is the whole point of 0.32a2, and it lands two concrete wins.

First, interleaved reasoning. On Chat Completions, a GPT-5-class model that thinks, calls a tool, and thinks again throws away the first chain on the second turn. The Responses endpoint keeps it. OpenAI’s own number is a 3–5% benchmark lift on SWE-bench and TAUBench attributed to that “preserved reasoning,” along with substantially better prompt-cache utilization on long sessions 1.

Second, visibility. llm now prints the model’s summarized reasoning tokens to stderr in a distinct color (suppress with -R), and logs the otherwise-invisible reasoning token counts to its SQLite history. That last bit is not cosmetic — Willison has noted that reasoning-heavy runs like his pelican-SVG generations can clear a dollar per prompt, and until now those tokens were billed but unobservable from the CLI 2.

What it breaks

The Responses API is not a drop-in replacement for the Chat Completions shape that the rest of the open-source stack standardized on. Ollama, vLLM, Groq, and LiteLLM-style proxies don’t implement it. Point 0.32a2 at a local server with the new default and you get 404s.

The escape hatch ships in the same release: a per-model option -o chat_completions 1 forces the CLI back to the legacy code path 3. It works, but it’s an opt-out, not an opt-in — meaning every existing config targeting a non-OpenAI provider needs editing. For a tool whose pitch is vendor-neutral access to LLMs, defaulting to the OpenAI-only surface is a notable choice.

There are also rough edges on the OpenAI side. Developers on r/OpenAIDev report system instructions silently dropping when previous_response_id is used to chain calls, plus tool-calling regressions versus Chat Completions 4. Alpha-quality plumbing on top of alpha-quality plumbing.

The lock-in critique

The reasoning tokens you see in your terminal are summaries — not the raw chain-of-thought. To actually preserve reasoning across stateless calls (e.g., under Zero Data Retention), OpenAI exposes include=["reasoning.encrypted_content"], an opaque blob the client passes back so o3 and GPT-5 don’t “think from scratch” 5. You hold the state; you can’t read it.

Sean Goedecke is blunter:

The Responses API is designed specifically to hide proprietary ‘thinking styles’ and implementation details from other labs while still providing a stateful experience. 6

That framing puts 0.32a2 in awkward company. Willison has been one of the loudest voices for an “Open Responses” spec that other providers could implement, but the alpha’s default behavior bets the CLI’s primary code path on a surface that is, by design, OpenAI-shaped and partially encrypted.

Net read

Real capability upgrade for GPT-5 users, real migration tax for everyone else. If you run local models through llm, pin 0.31 or learn the new flag before you upgrade.

Willison turns CSP violations into runtime allow-list prompts

Source: simon-willison · published 2026-05-13

TL;DR

- Willison’s new tool catches blocked

fetch()in a CSP-sandboxed iframe and prompts the parent to allow the origin. - April’s companion experiment proved meta-tag CSP is immutable from inside the frame, making the round-trip the only escape valve.

- Vercel’s v0 and Anthropic’s Artifacts solve the same problem with a server-side firewall and an MCP capability broker.

- Prompt fatigue looms: agentic code firing dozens of fetches turns “Allow this origin?” into a reflex click.

How the loop actually closes

The mechanism is small and almost entirely a UX construction. The iframe ships with default-src 'none' plus a narrow allow-list. A wrapped fetch() inside the frame catches the network error the browser throws when CSP blocks an outbound request, then postMessages the blocked URL to the parent. The parent shows a modal — “The sandbox tried to connect to https://api.inaturalist.org. Add this origin to the CSP connect-src allow-list and refresh the page?” — and on approval rewrites the policy and reloads the iframe.

sequenceDiagram

participant S as Sandbox iframe (CSP)

participant P as Parent window

participant U as User

S->>S: wrapped fetch() → CSP block

S->>P: postMessage(blocked origin)

P->>U: "Allow api.inaturalist.org?"

U->>P: approve

P->>S: reload iframe with updated connect-src

S->>S: fetch() succeeds

There is no new browser primitive here. The novelty is that Willison’s own April experiment proved the in-frame script cannot tamper with the policy it’s running under 7, which is what makes the round-trip trustworthy: the sandbox is forced to ask.

Where it sits next to v0 and Artifacts

The same problem — generated code that needs some network access without becoming an exfiltration channel — is being attacked very differently by larger vendors.

| Approach | Isolation | Granularity | Secrets |

|---|---|---|---|

| Willison’s tool | Client-side iframe + CSP | Per-hostname | None brokered |

| Vercel v0 8 | Firecracker microVM + Sandbox Firewall | Allow/deny/user-defined modes | Credential brokering — model never sees keys |

| Claude Artifacts 9 | MCP-brokered service connections | Per-capability (Slack, Calendar) | Connection-scoped tokens |

Willison’s version is the cheapest and the most auditable — it’s a few hundred lines of JS you can read in one sitting — but it offers none of the credential isolation v0 provides, and it asks users to reason about hostnames rather than capabilities.

The gotchas the post glosses over

Two practitioner notes matter for anyone copying the pattern. A sandboxed iframe without allow-same-origin gets a null origin, which is exactly why the manual JS relay is necessary — built-in report-uri paths break against CORS policies that don’t whitelist null 10. The same null origin forces the postMessage back to the parent to use '*' as the target, a documented leak vector for the violation payload itself 11. In Willison’s single-author demo both frames are his, so neither bites; in a multi-tenant embed, both do.

The deeper concern is one the CSP literature has flagged for years: browsers re-prompt only on new permission requests, and broad allow-list entries already in place can be exploited without triggering a fresh warning 12. Pair that with an agent that opens twenty fetches a minute and the “user-mediated” part of “user-mediated allow-list” erodes fast. As a demonstration that meta-tag CSP holds up under hostile in-frame script, the experiment is convincing. As a deployable control for AI-generated code, it stakes out a corner the better-funded alternatives have already moved past.

Willison uses GPT-5.5 xhigh to repro a Datasette segfault

Source: simon-willison · published 2026-05-12

TL;DR

- Datasette 1.0a29 fixes a

Datasette.close()race that segfaulted tests when an in-flight query hit a connection mid-teardown. - Willison had Codex CLI with GPT-5.5 xhigh generate a minimal Dockerfile that reliably reproduced the non-deterministic crash.

- The bug is a textbook SQLite use-after-free, the failure mode that has dogged Python’s

sqlite3bindings for years. - New

TokenRestrictions.abbreviated()helper is a DX win, not a security change — restrictions remain allowlist-only.

A textbook SQLite threading hazard

The headline fix in 1.0a29 closes a race between Datasette.close() and queries still executing on worker threads. A connection got torn down while the C library was mid-dereference on a prepared statement tied to it — the canonical use-after-free pattern documented in long-running Stack Overflow threads on multithreaded SQLite segfaults 13. The mitigations are well-known (sqlite3_close_v2() semantics, per-thread connections, external locks around teardown), and Datasette’s fix essentially codifies them in its lifecycle handling. What’s unusual is that the race survived a mature test suite at all; Willison only introduced it recently when adding automatic per-test connection cleanup.

When xhigh reasoning earns its premium

The more interesting story is how the bug got pinned down. Non-deterministic concurrency bugs are the worst possible target for an LLM agent — you can’t tell if a fix worked from one run, and you can’t tell if a repro is real from one failure. Willison’s move was to have Codex CLI build a minimal Dockerfile that triggered the segfault on demand, turning a flaky heisenbug into a deterministic test case.

That workflow leans hard on GPT-5.5’s agentic-terminal lead: 82.7% on Terminal-Bench 2.0, well ahead of Claude Opus 4.7 (69.4%) and Gemini 3.1 Pro (68.5%) 14. And it specifically uses the xhigh reasoning setting, which independent reviewers have been blunt about:

the ‘xhigh’ setting carries a heavy premium, costing approximately 2.18 times more than ‘high’ reasoning per task 15

In that same review, xhigh actually lost to cheaper configurations on 14 of 20 real-world tasks 15. So why is it the right call here? Because race-condition repro is the niche where verification overhead — running, observing, hypothesizing, re-running — is the entire job. It’s the opposite of one-shot codegen, where xhigh’s extra spend is mostly waste.

The broader caveat still applies. Reliability research has shown an agent with 90% per-step accuracy succeeds on a 10-step debugging chain only 34% of the time 16. A clean Willison anecdote shouldn’t be read as “AI solved my race condition” generally — it’s a data point for when the premium tier is worth reaching for.

The TokenRestrictions helper

The new TokenRestrictions.abbreviated(datasette) utility 17 addresses a quieter papercut. Datasette’s signed-token format encodes permissions as cryptic two-letter keys in an _r dictionary (vi for view-instance, es for execute-sql), and plugin authors had been assembling these by hand. Worth flagging for anyone reading “permissions API” and reaching for the threat model: token restrictions are a strict allowlist layered on top of the actor’s existing permissions and cannot grant new access 18. This is ergonomics, not a new security surface.

Takeaway

Two signals beyond a routine alpha: Datasette joined a well-known class of SQLite-threading bugs, and Willison’s repro workflow is a concrete answer to “when is GPT-5.5 xhigh worth 2.18× the cost?” — when verification is the bottleneck, not generation.

Footnotes

-

The New Stack — Open Responses vs Chat Completion — https://thenewstack.io/open-responses-vs-chat-completion-a-new-era-for-ai-apps/

↩OpenAI claims 3–5% improvement on benchmarks such as SWE-bench and TAUBench when the same models are used via /v1/responses instead of chat/completions, attributed to ‘preserved reasoning’ across turns.

-

Simon Willison’s substack — ‘LLM 0.27 with GPT-5’ — https://simonw.substack.com/p/llm-027-with-gpt-5-and-improved-tool

↩The tool now logs detailed token usage, including ‘invisible’ reasoning tokens, to its internal SQLite database — useful given pelican-SVG generations sometimes cost over a dollar a piece.

-

newreleases.io (0.32a2 changelog) — https://newreleases.io/project/github/simonw/llm/release/0.32a2

↩Use the new model option

-o chat_completions 1to force the CLI to fall back to the older /v1/chat/completions code path, restoring compatibility with Ollama, vLLM, and Groq. -

r/OpenAIDev thread on Responses API — https://www.reddit.com/r/OpenAIDev/comments/1jtz1wi/openai_responses_api_issue/

↩Early adopters reported the apparent loss of system-instruction context when using previous_response_id, and inconsistent tool-calling behavior that was not present in the original Chat Completions interface.

-

OpenAI Cookbook — Reasoning Items — https://developers.openai.com/cookbook/examples/responses_api/reasoning_items

↩By opting into include=[‘reasoning.encrypted_content’], developers receive a proprietary encrypted blob that can be passed back so models like o3 do not have to ‘think from scratch’ under Zero Data Retention.

-

Sean Goedecke — ‘The Responses API’ — https://www.seangoedecke.com/responses-api/

↩The Responses API is designed specifically to hide proprietary ‘thinking styles’ and implementation details from other labs while still providing a stateful experience.

-

Simon Willison — earlier CSP iframe escape test (Apr 2026) — https://simonwillison.net/2026/Apr/3/test-csp-iframe-escape/

↩Once a CSP is parsed from a meta tag, it is immutable; the script cannot remove, modify, or overwrite it, even if the iframe is navigated to a data: URI.

-

Vercel Sandbox Firewall docs — https://vercel.com/docs/vercel-sandbox/concepts/firewall

↩v0 utilizes a Sandbox Firewall with three modes: allow-all, deny-all, and user-defined … ‘credentials brokering’ injects secrets into egress traffic after it leaves the sandbox, ensuring the untrusted AI code never actually ‘sees’ the API keys it is using.

-

Anthropic — Claude Artifacts support docs — https://support.claude.com/en/articles/9487310-what-are-artifacts-and-how-do-i-use-them

↩Anthropic uses a Model Context Protocol (MCP) to broker access to external services … users must explicitly approve individual service connections, keeping the ‘sandbox’ closed except for authorized ‘doors’.

-

web.dev — Sandboxed iframes — https://web.dev/articles/sandboxed-iframes

↩If a sandbox is configured with allow-scripts but without allow-same-origin, the ‘null’ origin remains a persistent hurdle for any automated report-uri requests, which often fail because the reporting server’s CORS policy does not whitelist ‘null’.

-

Team Simmer — postMessage with cross-site iframes — https://www.teamsimmer.com/blog/how-do-i-use-the-postmessage-method-with-cross-site-iframes/

↩while the * wildcard is often used for sandboxed frames because they lack a targetable origin, it can expose report data to unintended recipients.

-

MDN — CSP frame-src reference — https://developer.mozilla.org/en-US/docs/Web/HTTP/Reference/Headers/Content-Security-Policy/frame-src

↩browsers typically only re-prompt users if new permissions are requested … attackers can exploit broad host permissions already in place to inject scripts or exfiltrate cookies without triggering a new warning.

-

Stack Overflow — SQLite multithreaded segfault thread — https://stackoverflow.com/questions/58917306/segmentation-fault-while-using-sqlite-in-a-multithreaded-code

↩use-after-free scenarios where the underlying C library attempts to access memory associated with a connection or prepared statement that has already been deallocated

-

Vellum.ai — GPT-5.5 capabilities review — https://www.vellum.ai/blog/everything-you-need-to-know-about-gpt-5-5

↩GPT-5.5 achieved a state-of-the-art score of 82.7% on Terminal-Bench 2.0, significantly outpacing Claude Opus 4.7 (69.4%) and Gemini 3.1 Pro (68.5%)

-

Towards AI — ‘I tested all 3 GPT-5.5 variants on 20 real tasks’ — https://pub.towardsai.net/i-tested-all-3-gpt-5-5-variants-on-20-real-tasks-the-200-pro-tier-lost-on-14-of-them-0d7a1fc97cef

↩ ↩2the ‘xhigh’ setting carries a heavy premium, costing approximately 2.18 times more than ‘high’ reasoning per task

-

Maxim AI — Ensuring AI agent reliability in production — https://www.getmaxim.ai/articles/ensuring-ai-agent-reliability-in-production-environments-strategies-and-solutions/

↩an agent with 90% accuracy on individual steps succeeds in a 10-step complex debugging task only 34% of the time

-

Datasette latest changelog — https://docs.datasette.io/en/latest/changelog.html

↩TokenRestrictions.abbreviated(datasette) utility method for creating ‘_r’ dictionaries

-

Datasette issue #1320 (permissions/SQL refactor) — https://github.com/simonw/datasette/issues/1320

↩TokenRestrictions function strictly as an allowlist layered on top of the actor’s existing permissions; they cannot grant a token a permission the underlying user does not already possess