OpenAI donates MRC, Google strips Gemma 4 heads, ServiceNow rolls back vLLM V1

OpenAI's MRC fabric, Gemma 4's speedup, and ServiceNow's vLLM V1 fix each move the reproducible result one layer away from what was announced.

OpenAI donates MRC, Google strips Gemma 4 heads, ServiceNow rolls back vLLM V1

TL;DR

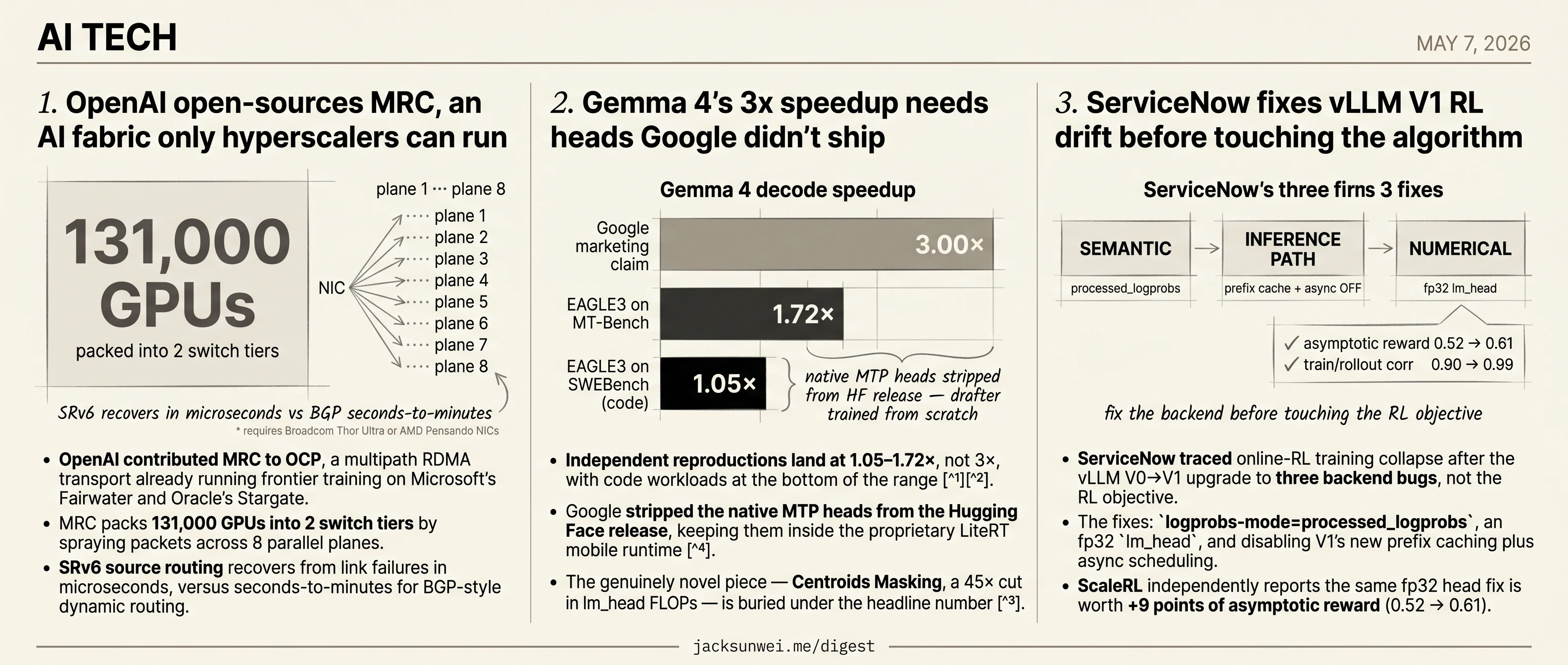

- OpenAI’s MRC fabric, contributed to OCP, requires Broadcom Thor Ultra or AMD Pensando NICs only hyperscalers operate.

- Gemma 4’s 3× speedup drops to 1.05–1.72× outside Google after MTP heads were stripped from the Hugging Face release.

- Centroids Masking, a 45× cut in lm_head FLOPs, is the genuinely novel Gemma 4 idea buried under the headline number.

- ServiceNow restored vLLM V1 RL parity by disabling V1’s prefix-cache and async-scheduling features and forcing an fp32

lm_head. - Simon Willison says his vibe-coding vs agentic-engineering line is dissolving as he stops reviewing each Claude Code edit.

Three tech releases land today, and each one’s headline result sits in a different layer the outside world doesn’t fully control. OpenAI contributed its MRC multipath RDMA fabric to the Open Compute Project — but running it requires Broadcom Thor Ultra or AMD Pensando NICs that only hyperscalers currently operate. Google’s Gemma 4 advertises a 3× speedup that independent reproductions land between 1.05× and 1.72×, after the multi-token-prediction heads were stripped from the Hugging Face checkpoint and kept inside the proprietary LiteRT runtime. ServiceNow restored RL training parity on vLLM V1 — by disabling V1’s headline prefix-cache and async-scheduling speedups and forcing an fp32 lm_head.

Three different layers — silicon, weights, runtime defaults — and three different reasons the announced number isn’t the number an outside team will see on first try. The Willison brief in the round-up tracks a related drift in a different register: the line he once drew between throwaway vibe coding and disciplined agentic engineering is dissolving as he stops line-reviewing what Claude Code writes.

OpenAI open-sources MRC, an AI fabric only hyperscalers can run

Source: openai-blog · published 2026-05-05

TL;DR

- OpenAI contributed MRC to OCP, a multipath RDMA transport already running frontier training on Microsoft’s Fairwater and Oracle’s Stargate.

- MRC packs 131,000 GPUs into 2 switch tiers by spraying packets across 8 parallel planes.

- SRv6 source routing recovers from link failures in microseconds, versus seconds-to-minutes for BGP-style dynamic routing.

- Broadcom Thor Ultra or AMD Pensando NICs required, gating MRC to hyperscalers despite the OCP contribution.

- Core ideas aren’t new — AWS’s SRD has sprayed packets across 64 ECMP paths in production Nitro NICs for years.

What MRC actually changes

Standard RoCEv2 pins a flow to a single path and leans on dynamic routing (BGP) to recover from failures. That works until you’re synchronously training across tens of thousands of GB200s, where one link flap stalls the whole job and a single hot path tail-latencies the collective. MRC unpicks both assumptions.

The sender splits an 800Gb/s NIC into eight 100Gb/s “planes” and sprays packets across hundreds of paths simultaneously. Each packet carries its destination memory address, so the receiver’s NIC reassembles out-of-order arrivals directly into GPU memory — no software reordering. When a packet drops, switches can trim the payload and forward just the header, giving the sender an explicit “this path is bad, retransmit elsewhere” signal instead of waiting for a timeout.

Routing is deterministic: SRv6 encodes the full switch-by-switch path in the IPv6 destination address, so failures don’t trigger control-plane recomputation. OpenAI reports rebooting four Tier-1 switches mid-training run with no job interruption, and absorbing multiple link flaps per minute with zero measurable impact on pretraining throughput.

flowchart LR

S[Sender NIC<br/>8x 100Gb planes] -->|spray| P1[Path 1]

S -->|spray| P2[Path 2]

S -->|spray| Pn[Path ...N]

P1 --> R[Receiver NIC<br/>HW reorder via<br/>embedded mem addr]

P2 --> R

Pn -. trimmed header .-> S

S -. retire path<br/>microseconds .-> Pn

Why now: Ethernet eats the AI back end

Dell’Oro pegs 2025 as the year Ethernet AI back-end revenue overtook InfiniBand 1, and MRC is the wedge driving that further. The strategic frame matters: OpenAI authored MRC with AMD, Broadcom, Intel, Microsoft and NVIDIA as collaborators, producing a transport that makes Ethernet competitive with InfiniBand on tail latency and failure recovery — exactly where InfiniBand has historically won. ServeTheHome captures the resulting market shape bluntly: NVIDIA itself doesn’t expect a single Ultra Ethernet Consortium (UEC) winner, and instead anticipates hyperscalers forking variants like MRC tuned to their workloads 2. Midokura frames MRC and UEC v1.0.2 (Jan 2026) as complementary rather than rival — MRC ships today, UEC builds the slower multivendor baseline 3.

The “open” asterisk

The OCP contribution makes the spec readable; it does not make MRC deployable. Data Center Knowledge notes that packet trimming, SRv6 forwarding, and out-of-order DMA placement all require high-end programmable NICs — currently just Broadcom’s Thor Ultra and AMD’s Pensando Pollara 400 — which limits near-term adoption to operators with hyperscaler-grade silicon budgets 4. Bizety goes further, arguing that SRv6 source routing imposes meaningful header overhead and pushes complexity onto the NIC, creating “a new form of silicon lock-in” dressed as openness 5.

The contribution is less a clean-sheet invention than a standardization of techniques AWS, Google (Falcon), and the UEC drafts already converged on.

That’s the honest read. AWS shipped packet-sprayed reliable datagrams over Nitro years ago 6; Google has Falcon; UEC is drafting its own. MRC’s real novelty is SRv6-as-control-plane and the production receipts from Fairwater and Stargate. For the four companies that can run it, that’s enough. For everyone else, OCP membership won’t conjure a Thor Ultra into the rack.

Gemma 4’s 3x speedup needs heads Google didn’t ship

Source: ars-technica-ai · published 2026-05-06

TL;DR

- Independent reproductions land at 1.05–1.72×, not 3×, with code workloads at the bottom of the range 78.

- Google stripped the native MTP heads from the Hugging Face release, keeping them inside the proprietary LiteRT mobile runtime 9.

- The genuinely novel piece — Centroids Masking, a 45× cut in lm_head FLOPs — is buried under the headline number 10.

- Multi-token prediction itself is two years and two prior implementations old (Meta 2024, DeepSeek-V3) 11.

The 3× number doesn’t survive contact with reproductions

Google’s marketing claim is that Gemma 4 inference runs up to 3× faster with no quality loss thanks to multi-token prediction (MTP) and speculative decoding. The “no quality loss” half is mathematically sound — the target model verifies every drafted token, so outputs are identical to greedy decoding. The “3×” half is where the story falls apart.

The most rigorous independent test, Thoughtworks’ EAGLE3 draft head trained against Gemma 4 31B, hit 1.72× on MT-Bench but collapsed to 1.05–1.14× on SWEBench — code tokens are less predictable, drafter rejection rates climb, and the speculative budget shrinks 7. A widely-circulated r/LocalLLaMA benchmark pairing the 31B target with the E2B (4.65B) drafter measured a 29% average speedup, peaking at 50% on coding tasks 8. That’s a real win, but it’s an order of magnitude below the headline.

The pattern is consistent: speculative decoding gains track how predictable your token stream is. Conversational chat is easy. Code, math, and structured output are not. Vendor benchmarks pick the easy end.

The MTP heads aren’t actually in the open release

The more uncomfortable detail Ars skips: Google omitted the native MTP heads from the Hugging Face safetensors, shipping them only inside the .litertlm binaries that feed Google’s proprietary LiteRT mobile runtime 9. Community forensics on r/LocalLLaMA started reverse-engineering INT8 weights out of the LiteRT graph; Thoughtworks gave up and trained an EAGLE3 drafter from scratch 7. Either way, reproducing Google’s number requires work that the Apache 2.0 release was supposed to make unnecessary.

For contrast, Qwen 3.5 ships its MTP heads in the primary release. The kindest reading of Google’s choice is engineering caution. The less kind reading is that “open weights” now means “open enough to evaluate, closed enough to keep the fast path on our runtime.”

Implementation fragility makes this worse. A parallel thread documented that for Qwen 3.6 MoE, tokenizer metadata mismatches silently dropped llama.cpp into “token translation mode,” producing a net slowdown 12. Gemma 4’s hybrid attention (50 sliding-window layers, 10 global) similarly broke vLLM and llama.cpp until dual-KV-cache patches landed 7. The headline number assumes a tuned stack the average user does not have.

What’s actually new, and what isn’t

Buried under the speedup pitch is a piece of real engineering: Centroids Masking groups the 262K-token vocabulary into ~4K clusters, cutting linear-head computation by roughly 45× with negligible accuracy impact 10. That’s the novel Gemma 4 contribution. It’s also the part the press release didn’t lead with.

The MTP technique itself is inherited. Meta’s Gloeckle et al. (2024) introduced parallel MTP heads for training efficiency. DeepSeek-V3 reworked them into a sequential causal chain and reported a ~1.8× decode speedup — explicitly crediting Meta in its tech report 11. Gemma 4 is the third lineage entry, not the origin.

Takeaway

If you’re deploying Gemma 4 today, budget for 1.3–1.7× in realistic workloads, expect serving-stack churn, and don’t count on the headline drafter unless you’re willing to train your own. The interesting paper is the one Google didn’t write up — Centroids Masking. The “3×” is a LiteRT demo with a press release attached.

ServiceNow fixes vLLM V1 RL drift before touching the algorithm

Source: huggingface-blog · published 2026-05-06

TL;DR

- ServiceNow traced online-RL training collapse after the vLLM V0→V1 upgrade to three backend bugs, not the RL objective.

- The fixes:

logprobs-mode=processed_logprobs, an fp32lm_head, and disabling V1’s new prefix caching plus async scheduling. - ScaleRL independently reports the same fp32 head fix is worth +9 points of asymptotic reward (0.52 → 0.61).

- MiniMax-M1 ties the same change to train/rollout logprob correlation rising from ~0.9 to ~0.99.

- The cost is real: parity means turning off V1’s headline throughput features, reopening the determinism-vs-speed debate.

The collapse, and where it wasn’t

When the PipelineRL team upgraded from vLLM 0.8.5 to 0.18.1, their GSPO training runs diverged from the V0 reference — reward curves drifted, clip rates spiked, policy ratios drifted away from 1.0. The instinctive response in 2025 RL research is to reach for an algorithmic patch: truncated importance sampling, masked IS, dynamic LR throttling. ServiceNow’s contribution is to argue, with parity plots, that this is the wrong move until the inference engine is returning the numbers the trainer thinks it’s getting.

They split the gap into three layers — semantic, inference-path, and numerical — and fixed each before touching the loss.

The three fixes

| Layer | V1 default | Fix | Why it matters |

|---|---|---|---|

| Semantic | Returns raw model logprobs | logprobs-mode=processed_logprobs | Trainer expects post-temperature/penalty logprobs; raw values offset the mean policy ratio |

| Inference path | Prefix caching + async scheduling on | Both off; pause_generation(mode="keep", clear_cache=False) during weight sync | Prevents reuse of pre-update KV state and mid-update request aborts |

| Numerical | lm_head in bf16 | Compute lm_head in fp32 | Tiny logit deltas at the final projection blow up in policy ratios |

After all three, the per-step deviation in the policy ratio (scaled by 10,000) tracks the V0 reference, and the corrected reward curve overlays the baseline.

This is everyone’s bug

The processed-logprobs trap isn’t a PipelineRL quirk. TRL’s GRPOTrainer hit the same mismatch on vLLM ≥0.10.2 and patched it identically 13; verl shipped an analogous default flip. The V0→V1 migration silently broke the logprob contract for every major open-source RL stack that read from vLLM, and “raw vs processed” is now the canonical first thing to check when ratios drift.

The fp32 lm_head story is even more striking. ScaleRL’s ablations isolate the fix as worth roughly nine points of asymptotic reward (0.52 → 0.61) and call it a “de-facto standard” 14. MiniMax-M1’s postmortem reports the same change lifted train/rollout token-probability correlation from ~0.9 to ~0.99 and eliminated long-CoT reward plateaus 15. Three unrelated codebases — PipelineRL, ScaleRL, MiniMax — converged on the same low-level fix. It’s table stakes now.

The price of parity

ServiceNow lands firmly on the determinism side of a live tradeoff. An independent practitioner analysis estimates that bitwise-parity mitigations like masked IS and dynamic LR throttling carry up to ~25% compute overhead 16, and ServiceNow pays for parity explicitly by disabling prefix caching and async scheduling — the two features V1 was sold on.

Whether you can re-enable them later is the open question, and the algorithmic layer is moving fast. GSPO’s sequence-level ratios already tolerate residual MoE routing and precision noise better than token-level GRPO 17. MiniMax’s CISPO soft-clips importance weights so rare reasoning tokens (“Wait”, “However”) still contribute gradient, reportedly halving steps-to-target 18. ServiceNow’s recipe is orthogonal to both — it’s the floor everyone now has to stand on before any of the clever stuff works.

Round-ups

Vibe coding and agentic engineering are getting closer than I’d like

Source: simon-willison

On Heavybit’s High Leverage podcast, Simon Willison admits the line he drew between throwaway ‘vibe coding’ and disciplined ‘agentic engineering’ is dissolving in his own workflow: he no longer reviews every line Claude Code writes for production, treating the agent like a black-box internal team whose output he trusts until it breaks.

Footnotes

-

Dell’Oro Group analysis — https://www.delloro.com/openais-mrc-initiative-reinforces-ethernets-expanding-role-in-ai-back-end-networks/

↩OpenAI’s MRC initiative reinforces Ethernet’s expanding role in AI back-end networks, where Ethernet sales surpassed InfiniBand in 2025.

-

ServeTheHome (Patrick Kennedy) — https://www.servethehome.com/nvidia-spectrum-x-ethernet-mrc-is-the-custom-rdma-transport-protocol-for-gigascale-ai/

↩MRC is the custom RDMA transport protocol for gigascale AI… NVIDIA does not see the industry collapsing onto a single UEC winner; instead hyperscalers will tune variants like MRC to their specific workloads.

-

Midokura: UET vs Falcon and Beyond — https://midokura.com/hardware-transports-for-ai-networking-uet-vs-falcon-and-beyond/

↩MRC is a production-hardened extension of RoCEv2 incorporating early UEC ideas, while UEC v1.0.2 (Jan 2026) remains the broader multivendor framework—the two are complementary, not rival, transports.

-

Data Center Knowledge — https://www.datacenterknowledge.com/networking/openai-pushes-new-ai-networking-protocol-as-gpu-clusters-scale

↩Although contributed to OCP, MRC’s advanced features—packet trimming, SRv6 source routing, out-of-order DMA—require high-end programmable NICs like Broadcom Thor Ultra or AMD Pensando Pollara, limiting near-term adoption to hyperscalers.

-

Bizety: AI Network Wars — https://bizety.com/2025/12/31/ai-network-wars-ultra-ethernet-vs-nvidias-dominance/

↩Critics argue source routing via SRv6 adds significant header overhead and shifts complexity to the NIC, potentially creating a new form of silicon lock-in where only specific vendors can support the full stack efficiently.

-

Ernest Chiang notes on AWS SRD — https://www.ernestchiang.com/en/notes/general/aws-srd-scalable-reliable-datagram/

↩SRD sprays packets across up to 64 parallel paths via ECMP and delegates reordering to Nitro hardware—an approach AWS shipped years before MRC formalized similar ideas.

-

Thoughtworks/HuggingFace blog (EAGLE3 for Gemma 4) — https://huggingface.co/blog/lujangusface/tw-eagle3-gemma4

↩ ↩2 ↩3 ↩4EAGLE3 draft head for Gemma 4 31B… achieved a 1.72x speedup on the MT-Bench conversational benchmark… speed gains dropped to 1.05–1.14x on the SWEBench coding benchmark, as code is inherently less predictable than natural language.

-

r/LocalLLaMA benchmark thread — https://www.reddit.com/r/LocalLLaMA/comments/1sjct6a/speculative_decoding_works_great_for_gemma_4_31b/

↩ ↩229% average speedup (peaking at 50% for coding tasks) when using the E2B (4.65B) model to draft for the 31B target

-

Maarten Grootendorst, ‘A Visual Guide to Gemma 4’ — https://newsletter.maartengrootendorst.com/p/a-visual-guide-to-gemma-4

↩ ↩2the native MTP heads were initially omitted from the Hugging Face release, remaining exclusive to Google’s LiteRT framework

-

Google AI Edge MTP overview (ai.google.dev) — https://ai.google.dev/gemma/docs/mtp/overview

↩ ↩2Centroids Masking groups the 262K-token vocabulary into ~4K clusters… reduces linear head computation by roughly 45x with negligible impact on accuracy

-

Medium — ‘Understanding MTP in DeepSeek-V3’ — https://medium.com/@bingqian/understanding-multi-token-prediction-mtp-in-deepseek-v3-ed634810c290

↩ ↩2DeepSeek explicitly credits Meta’s work (Gloeckle et al., 2024) in its technical reports as the foundational inspiration… DeepSeek-V3 uses auxiliary one-layer transformer modules that predict tokens in a causal chain

-

r/LocalLLaMA — ‘Speculative decoding silently broken for Qwen 3.6’ — https://www.reddit.com/r/LocalLLaMA/comments/1ss46dj/speculative_decoding_silently_broken_for_qwen36/

↩metadata mismatches in early GGUF files (such as conflicting add_bos_token flags) could force inference engines into ‘token translation mode,’ effectively nullifying all speed gains

-

TRL GitHub issue #4159 — https://github.com/huggingface/trl/issues/4159

↩TRL’s GRPOTrainer added logprobs_mode=“processed_logprobs” for vLLM ≥0.10.2; without it, raw logprobs combined with non-unit temperature produce a train-inference mismatch that destabilizes policy ratios.

-

ScaleRL paper (arxiv 2510.13786) — https://arxiv.org/html/2510.13786v1

↩Including the FP32 precision fix at the LM head improved asymptotic reward from 0.52 to 0.61, establishing it as a de-facto standard for stable large-scale RL training.

-

MiniMax-M1 technical report (arxiv 2506.13585) — https://arxiv.org/abs/2506.13585

↩Upgrading the LM head to FP32 precision raised correlation between training and rollout token probabilities from ~0.9x to ~0.99x, eliminating reward plateaus in long-CoT RL.

-

0fd.org — ‘When Speed Kills Stability’ — http://0fd.org/2025/11/14/when-speed-kills-stability-demystifying-rl-collapse-from-the-training-inference-mismatch/

↩Mitigations such as Masked Importance Sampling and dynamic LR scheduling can add up to 25% computational overhead, prompting debate over whether to prioritize raw inference speed or bitwise consistency.

-

Andrey Lukyanenko — GSPO paper review — https://andlukyane.com/blog/paper-review-gspo

↩GSPO clips significantly more tokens than GRPO yet yields more effective gradients, and is naturally tolerant of the precision discrepancies and routing fluctuations inherent in MoE models — eliminating engineering hacks like Routing Replay.

-

EmergentMind — CISPO algorithm summary — https://www.emergentmind.com/topics/cispo-algorithm

↩CISPO clips only the magnitude of the importance sampling weight while retaining the underlying gradient update, so rare ‘reflective’ tokens (e.g. ‘Wait’, ‘However’) still contribute — reaching target performance in roughly half the steps of GRPO.