OpenAI claims the default and the ad slot; Anthropic claims FactSet's

OpenAI made GPT-5.5 the ChatGPT default and opened a self-serve ads platform while Anthropic's finance agents knocked 8% off FactSet.

OpenAI claims the default and the ad slot; Anthropic claims FactSet’s

TL;DR

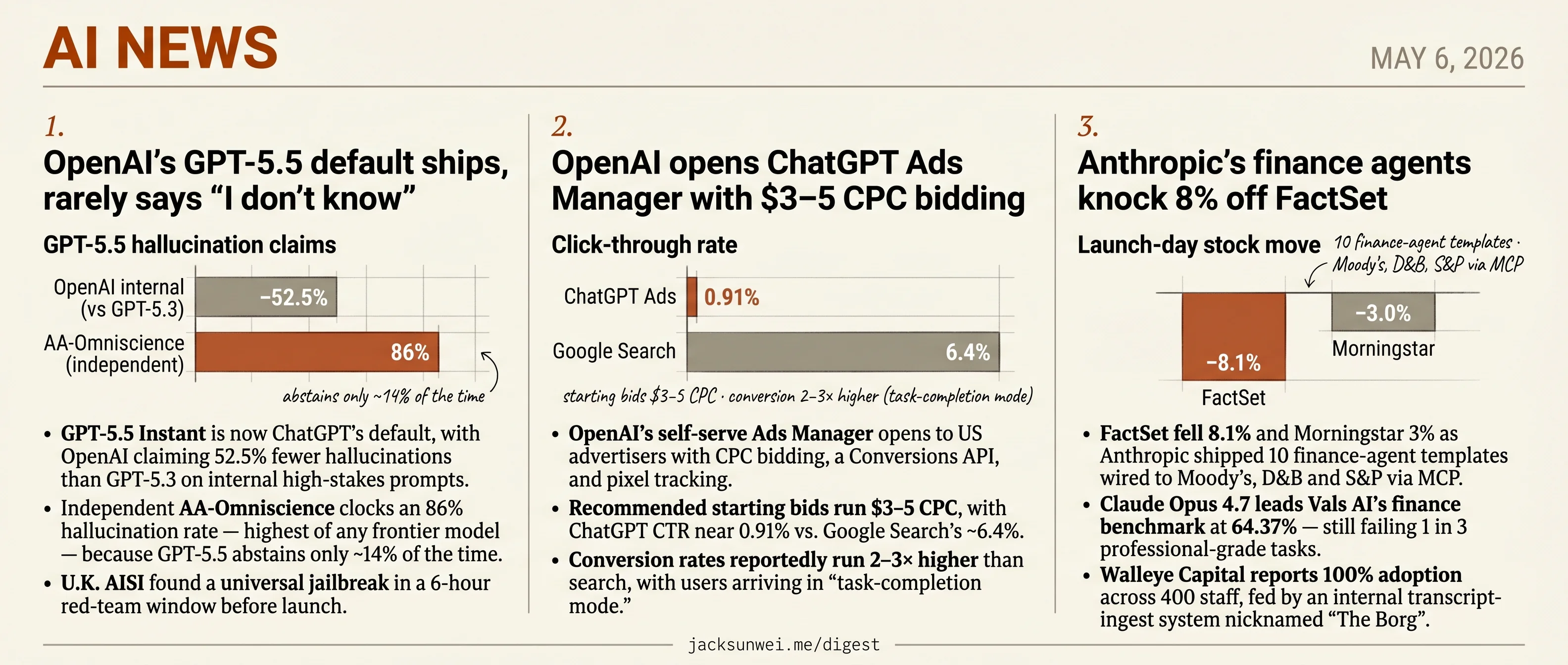

- GPT-5.5 Instant becomes ChatGPT’s default, with an 86% hallucination rate on outside benchmark AA-Omniscience.

- OpenAI’s Ads Manager opens to US advertisers at $3–5 CPC with reported 2–3× higher conversions than search.

- FactSet fell 8.1% as Anthropic shipped 10 finance-agent templates wired to Moody’s, D&B, and S&P via MCP.

- Google, Microsoft, xAI agreed to let the Commerce Department pre-evaluate frontier models before public release.

- Apple will pay $250M to settle claims it misled iPhone 15 Pro and 16 buyers on AI Siri features.

Today is a distribution day for the two frontier labs. OpenAI swapped GPT-5.5 Instant in as ChatGPT’s default and opened a self-serve Ads Manager to US advertisers in the same news cycle — one move books the consumer surface, the other starts monetizing it. Anthropic took the enterprise lane: ten finance-agent templates wired through MCP to Moody’s, D&B, and S&P, and the market priced the threat immediately, with FactSet down 8.1% and Morningstar off 3%.

None of these launches landed clean. GPT-5.5’s vendor-claimed 52.5% hallucination cut sits next to an 86% rate on the independent AA-Omniscience benchmark and a U.K. AISI jailbreak found in a 6-hour window. Anthropic’s MCP stack ships with a documented RCE path the company calls developer responsibility. And the ads launch lands the same week Anthropic’s Super Bowl spots explicitly positioned Claude as the no-ads alternative — a competitive frame OpenAI just gave teeth to. The briefs round out the regulatory backdrop: a voluntary federal review channel, two Apple AI stories, a Meta copyright suit, and a state AG taking Character.AI to court.

OpenAI’s GPT-5.5 default ships, rarely says “I don’t know”

Source: openai-blog · published 2026-05-05

TL;DR

- GPT-5.5 Instant is now ChatGPT’s default, with OpenAI claiming 52.5% fewer hallucinations than GPT-5.3 on internal high-stakes prompts.

- Independent AA-Omniscience clocks an 86% hallucination rate — highest of any frontier model — because GPT-5.5 abstains only ~14% of the time.

- U.K. AISI found a universal jailbreak in a 6-hour red-team window before launch.

- API pricing roughly doubles to $5/$30 per million tokens, though ~30% shorter outputs soften the per-task hit.

The headline number doesn’t survive contact with outside benchmarks

OpenAI’s launch pitch for GPT-5.5 Instant is “smarter, tighter, safer default” — 52.5% fewer hallucinations on high-stakes legal/finance/medical prompts, 81.2% on AIME 2025, 85.6% on GPQA, ~30% shorter answers. The system card, the TechCrunch write-up, and The Verge’s coverage all hang on that hallucination figure.

Artificial Analysis’s AA-Omniscience benchmark tells a different story. GPT-5.5 sets a record 57% raw accuracy — and simultaneously posts an 86% hallucination rate, the highest among current frontier models, because it almost never abstains when it doesn’t know 1. The contradiction is methodological, not a flat falsehood: OpenAI is measuring reduction-against-self on curated prompts; AA-Omniscience is measuring calibration — what fraction of wrong answers are confidently confabulated rather than refused. GPT-5.5 abstains roughly 14% of the time. Claude 4.1 Opus, by contrast, holds near-zero hallucination by refusing 2.

“GPT-5.5 is trained to commit to an answer — the risk of a confident wrong remains a significant liability for citation work and compliance drafting.” 2

If your workload is agentic coding or bounded enterprise tasks where a wrong answer is cheap to verify, the raw-accuracy lift is real. If it’s legal citations, medical summaries, or regulated drafting, the new default has gotten worse on the axis that matters.

Safety verification came up short

The launch shipped without complete external audit. The U.K. AI Safety Institute found a universal jailbreak during a six-hour red-team window, and both AISI and the U.S. CAISI reportedly hit configuration issues that prevented them from verifying the final launch configuration 3. The public is effectively trusting OpenAI’s self-attestation on the safety claims in the system card.

The new Gmail/Drive/Calendar connectors and persistent memory open a fresh attack surface worth diagramming:

flowchart LR

A[Attacker email<br/>hidden white-text] --> B[Gmail inbox]

B --> C{ChatGPT<br/>summarize}

D[User prompt] --> C

C --> E[Long-term memory]

E -. corrupted .-> F[All future sessions]

C -. exfiltration .-> G((External URL))

Security researchers have already documented “ShadowLeak” and “ZombieAgent” zero-click prompt-injection attacks that exploit the agentic Gmail connector — hidden instructions in an email get executed when ChatGPT summarizes the inbox, and a single poisoned interaction can permanently corrupt long-term memory across future sessions 4. The “Memory Sources” UI OpenAI is marketing as a transparency win shows users which memories were used, but doesn’t address the underlying instruction-vs-data confusion in the connector itself.

Migration friction and a pricing reset

Simon Willison’s prompting guide leads with OpenAI’s own warning: treat GPT-5.5 as a new model family to tune for, not a drop-in replacement 5. Legacy prompt stacks regress; temperature/top_p are out in favor of reasoning.effort and text.verbosity; the system role moved to developer; the Responses API is now the preferred surface over Chat Completions.

Sticker pricing roughly doubled — $5 input / $30 output per million tokens, with a Pro tier at $30/$180 6. OpenAI’s defense is that the model produces ~30% fewer output tokens, so net workload cost rises closer to 20% than 100%. That math holds for some workflows and breaks for others; teams running long agentic loops with heavy retries should re-benchmark before flipping the alias.

What’s actually changed

The default got faster, more concise, and better at math and multimodal reasoning. It also got more willing to bluff. For ChatGPT’s median consumer query that’s a fine trade. For the regulated and citation-heavy work OpenAI specifically called out in its hallucination headline, the independent measurement says the opposite of the marketing.

Further reading

- GPT-5.5 Instant System Card — openai-blog

- OpenAI releases GPT-5.5 Instant, a new default model for ChatGPT — techcrunch-ai

- OpenAI claims ChatGPT’s new default model hallucinates way less — the-verge-ai

OpenAI opens ChatGPT Ads Manager with $3–5 CPC bidding

Source: openai-blog · published 2026-05-05

TL;DR

- OpenAI’s self-serve Ads Manager opens to US advertisers with CPC bidding, a Conversions API, and pixel tracking.

- Recommended starting bids run $3–5 CPC, with ChatGPT CTR near 0.91% vs. Google Search’s ~6.4%.

- Conversion rates reportedly run 2–3× higher than search, with users arriving in “task-completion mode.”

- Anthropic’s Super Bowl LX spots (“Ads are coming to AI. But not to Claude.”) drove an 11% Claude DAU spike.

What shipped

OpenAI is moving its ChatGPT ads pilot from hand-shaken partnerships to infrastructure. The new self-serve Ads Manager lets US advertisers register, fund campaigns, upload creative, and measure conversions through a Conversions API and pixel — the standard performance-marketing stack. CPC bidding joins the original CPM model, and integrations with Adobe, Criteo, Kargo, Pacvue, and StackAdapt let buyers pull ChatGPT inventory into existing programmatic workflows. The pitch is that ChatGPT users are in “task-completion mode” — comparing products, evaluating categories — and therefore worth more per click than a Google session.

The economics don’t quite pencil out

Independent benchmarks tell a more cautious story than OpenAI’s framing. Recommended starting max bids of $3–5 CPC sit in mid-tier Google Search territory, while click-through rate clocks in around 0.91% versus Google Search’s ~6.4% 7. The bull case rests on conversion rates “2–3× higher” than search because users arrive with intent already formed 7 — a real claim, but one without third-party attribution data to verify it yet.

Marketers aren’t sold either. Analysts at Gartner and Enders told Raconteur that the Ads Manager still lacks the granular demographic targeting, remarketing, lookalikes, and third-party measurement integrations that make Google and Meta defensible 8. Until that parity arrives, the “self-serve for SMBs” framing oversells what’s actually usable today.

Anthropic and the trust attack

The launch landed into an unusually loud headwind. Anthropic spent Super Bowl LX money on four spots — “Betrayal,” “Deception,” “Treachery,” “Violation” — depicting AI assistants pivoting mid-conversation into absurd sales pitches, closing with “Ads are coming to AI. But not to Claude.” The campaign drove an 11% surge in Claude’s daily active users 9. Sam Altman called the ads “funny” but “clearly dishonest,” and reframed the fight as access versus elitism: OpenAI bringing free AI to billions while Anthropic is “serving an expensive product to rich people” 10.

“Ads are coming to AI. But not to Claude.” — Anthropic, Super Bowl LX

Hitzig and the publisher gap

Inside the building, the most cutting critique came earlier. Researcher Zoë Hitzig resigned in February with a NYT op-ed warning that ChatGPT holds “an archive of human candor that has no precedent” and that the company had “stopped asking the questions” she was hired to answer about ad-driven manipulation risk 11. She drew the Facebook parallel explicitly — early privacy promises eroded by monetization pressure — which is exactly the structural objection OpenAI’s “Answer Independence” pledge is meant to neutralize.

Then there’s the supply side. ChatGPT’s answers are built partly on licensed publisher content, but unlike Perplexity’s Publishers’ Program, OpenAI shares zero ad revenue when those sources get cited inside an ad-supported response 12. That asymmetry — extracting ad dollars from traffic that would otherwise reach publisher sites — is the next licensing-renewal fight waiting to happen.

Takeaway

The Ads Manager is real product progress; the surrounding context is what to watch. OpenAI is now competing on three fronts at once — measurement parity with Google, brand-trust positioning against Anthropic, and value-chain math with publishers — and shipping CPC bidding only addresses the first.

Anthropic’s finance agents knock 8% off FactSet

Source: anthropic-news · published 2026-05-05

TL;DR

- FactSet fell 8.1% and Morningstar 3% as Anthropic shipped 10 finance-agent templates wired to Moody’s, D&B and S&P via MCP.

- Claude Opus 4.7 leads Vals AI’s finance benchmark at 64.37% — still failing 1 in 3 professional-grade tasks.

- Walleye Capital reports 100% adoption across 400 staff, fed by an internal transcript-ingest system nicknamed “The Borg”.

- MCP’s stdio transport has a documented RCE path that Anthropic calls “expected” developer responsibility, not a bug.

A market-structure event, not a product launch

Anthropic’s “Agents for financial services” reads like a SKU drop — ten templates (Pitch Builder, Earnings Reviewer, KYC Screener, Month-End Closer, etc.) running on Claude Opus 4.7, plus Microsoft 365 integration and Managed Agents with credential vaults. The market read it differently. FactSet shares fell 8.1% and Morningstar 3% on the day, as investors priced in the Moody’s, Dun & Bradstreet, Guidepoint and S&P Capital IQ connectors as Anthropic positioning Claude as the default research surface for the buy side 13. A simultaneously announced $1.5B joint venture with Goldman Sachs and Blackstone underscored that this is a top-down enterprise wedge, not a developer-tools release.

flowchart LR

A[Moody's / D&B / S&P CIQ<br/>FactSet / MSCI / LSEG] -->|MCP connectors| B{Claude Opus 4.7<br/>Managed Agent}

C[Excel / PPT / Word / Outlook] --> B

B --> D[Subagents:<br/>methodology, valuation]

B --> E[Audit log + credential vault]

B -.->|stdio RCE path| F((Host machine))

The 64.37% problem

Anthropic frames Opus 4.7 as state-of-the-art for financial reasoning, and Vals AI’s leaderboard backs the ranking — 64.37%, ahead of GPT-5.5 at 59.96% and DeepSeek V4 at 60.39% 14. Read the other direction, the top model on the industry-standard benchmark still busts about a third of professional-grade tasks. That is a bar “that would get a human tossed off a buy-side desk,” as one analyst put it, and it sits awkwardly next to Managed Agents pitched for “multi-hour deal closes” with autonomous credential access.

Adoption is real, but idiosyncratic

The customer proof points are uneven. FIS is the cleanest: AML investigators reportedly spend ~80% of their time on manual evidence gathering, and FIS’s core banking systems touch nearly 12% of global GDP, so compressing investigations from days to minutes has real absolute leverage 15. Walleye’s 100%-adoption headline is more complicated. The rollout is enforced by CEO Will England with cash bounties and leaderboards, and the productivity layer is “The Borg” — a system that ingests transcripts from nearly every Zoom call, phone conversation and meeting at the firm 16. That’s a surveillance-and-culture story as much as an AI one, and not trivially portable to a bank.

Security and regulatory overhang

Two things sit underneath the launch that the announcement doesn’t address. First: the MCP stdio transport carrying Moody’s and D&B data into these agents has a demonstrated remote-code-execution pathway via unsanitized commands, and Anthropic has characterized the behavior as “expected” 17. For a CISO signing off on Managed Agents with vaulted credentials, that framing is going to land badly.

Second: US bank regulators have explicitly carved agentic AI out of OCC Bulletin 2026-13, and the rescinded SR 11-7 model-risk framework is openly acknowledged as insufficient for non-linear autonomous reasoning 18. Anthropic’s “audit-ready” Managed Agents are shipping into a regulatory vacuum, not a settled regime — which is fine until the first enforcement action picks the precedent.

The vendors who lost 8% weren’t wrong about the threat. They may just be early on the timeline.

Round-ups

Google, Microsoft, and xAI will allow the US government to review their new AI models

Source: the-verge-ai

Google DeepMind, Microsoft, and xAI agreed to let the Commerce Department’s Center for AI Standards and Innovation run pre-deployment evaluations and targeted research on new frontier models before public release, formalizing a voluntary government review channel for U.S. labs.

Apple agrees to pay iPhone owners $250 million for not delivering AI Siri

Source: the-verge-ai

The proposed $250 million settlement covers U.S. buyers of all iPhone 16 models and the iPhone 15 Pro purchased between June 10, 2024 and the present, resolving claims Apple misled customers about delayed Apple Intelligence Siri features.

Apple plans third-party AI model selection in iOS 27

Source: techcrunch-ai

Reports indicate iOS 27 will let users select a default third-party AI model for system tasks rather than routing everything through Apple Intelligence or ChatGPT, opening Siri-adjacent features to competing chatbot providers via extensions.

Further reading:

- Apple could let you pick a favorite AI model in iOS 27 — the-verge-ai

Book publishers sue Meta over AI’s ‘word-for-word’ copying

Source: the-verge-ai

Macmillan, McGraw Hill, Elsevier, Hachette and a fifth publisher, joined by one author, accuse Meta of one of the largest copyright infringements in history, alleging Llama reproduces their books word-for-word from training data.

Pennsylvania sues Character.AI after chatbot impersonated licensed psychiatrist

Source: techcrunch-ai

Pennsylvania’s attorney general alleges a Character.AI bot posed as a licensed psychiatrist during a state investigation and fabricated a medical license serial number, making it one of the first state-level enforcement actions against a chatbot platform for impersonating a regulated professional.

Further reading:

Microsoft gives up on Xbox Copilot AI

Source: the-verge-ai

New Xbox CEO Asha Sharma is winding down Copilot on mobile and halting console development, days after reorganizing the Xbox platform team to absorb executives from Microsoft’s CoreAI division where she previously worked.

Influential study touting ChatGPT in education retracted over red flags

Source: ars-technica-ai

A widely cited paper promoting ChatGPT’s benefits in classrooms has been retracted after reviewers flagged methodological problems; the study had already accumulated hundreds of citations shaping the early evidence base for generative AI in education.

Footnotes

-

Artificial Analysis (AA-Omniscience benchmark) — https://artificialanalysis.ai/articles/openai-gpt5-5-is-the-new-leading-AI-model

↩GPT-5.5 achieved a record-breaking 57% accuracy… but simultaneously posted an 86% hallucination rate — the highest among current frontier models — because it almost never abstains when it doesn’t know.

-

Medium (Candemir) — ‘the one number nobody’s quoting’ — https://medium.com/@candemir13/gpt-5-5-what-actually-changed-whats-overblown-and-the-one-number-nobody-s-quoting-de3c79cf0592

↩ ↩2GPT-5.5 is trained to commit to an answer — the risk of a confident wrong remains a significant liability for citation work and compliance drafting; Claude 4.1 Opus maintains near-zero hallucination by abstaining.

-

Transformer News — ‘OpenAI shouldn’t be deciding if its GPT-5.5…’ — https://www.transformernews.ai/p/openai-shouldnt-be-deciding-if-its-gpt-55

↩The U.K. AISI identified a universal jailbreak during six-hour red-teaming of GPT-5.5; CAISI and the U.K. AISI faced configuration issues that prevented them from verifying the effectiveness of the final launch configuration.

-

Medium — ‘When ChatGPT Becomes the Attacker’ — https://medium.com/@techintel0211/when-chatgpt-becomes-the-attacker-how-new-ai-flaws-exposed-gmail-outlook-and-github-data-eac8d5464c9e

↩ShadowLeak and ZombieAgent vulnerabilities exploit the agentic Gmail connector — attackers embed hidden instructions (e.g., white-text) that ChatGPT executes while summarizing an inbox, enabling zero-click data exfiltration; a single poisoned interaction can permanently corrupt long-term memory.

-

Simon Willison — GPT-5.5 prompting guide — https://simonwillison.net/2026/apr/25/gpt-5-5-prompting-guide/

↩To get the most out of GPT-5.5, treat it as a new model family to tune for, not a drop-in replacement for gpt-5.2 or gpt-5.4… start with the smallest prompt that preserves the product contract.

-

AICerts — enterprise adoption coverage — https://www.aicerts.ai/news/openai-gpt-release-inside-gpt-5-5s-agentic-leap/

↩Standard API pricing roughly doubles to $5/1M input and $30/1M output tokens, with a Pro tier at $30/$180; OpenAI argues token-efficiency gains (~30% fewer words) hold the net workload cost increase to ~20%.

-

Impressive (ChatGPT Ads vs Google Ads 2026) — https://impressive.com.au/chatgpt-ads-vs-google-ads-2026/

↩ ↩2OpenAI’s Ads Manager recommends starting max bids of $3–$5 CPC; ChatGPT CTR sits near 0.91% vs Google Search’s ~6.4%, but conversion rates are reportedly 2–3x higher because users are in ‘task-completion mode.’

-

Raconteur — https://www.raconteur.net/marketing-sales/openai-rolls-out-ads-on-chatgpt-but-marketers-remain-unconvinced

↩Marketers remain unconvinced: analysts at Gartner and Enders note OpenAI’s ad manager still lacks the granular demographic targeting, remarketing and third-party measurement integrations that Google and Meta offer.

-

Business Insider — https://www.businessinsider.com/anthropic-super-bowl-openai-chatgpt-ads-claude-2026-2

↩Anthropic’s Super Bowl LX campaign — spots titled ‘Betrayal,’ ‘Deception,’ ‘Treachery’ and ‘Violation’ — closed with ‘Ads are coming to AI. But not to Claude,’ and drove an 11% surge in Claude’s daily active users.

-

Inc. (Jason Aten) — https://www.inc.com/jason-aten/anthropics-dishonest-ads-clearly-struck-a-nerve-with-sam-altman-that-was-the-point/91297927

↩Sam Altman called Anthropic’s ads ‘funny’ but ‘clearly dishonest,’ arguing OpenAI would never run ads the way the spots depicted and contrasting his free-to-billions strategy with Anthropic ‘serving an expensive product to rich people.’

-

SiliconANGLE — https://siliconangle.com/2026/02/11/openai-researcher-quits-slippery-slope-chatgpt-ads/

↩OpenAI researcher Zoë Hitzig quit, warning the move toward ads is a ‘slippery slope’ and that the company has ‘stopped asking the questions’ she was hired to answer about ethical governance of the data users share.

-

Media Copilot — https://mediacopilot.ai/openai-chatgpt-ads-no-publisher-revenue-share/

↩OpenAI is not sharing ad revenue with the news organizations whose content fuels ChatGPT’s answers — a sharp contrast to Perplexity’s Publishers’ Program, which pays out when sources are cited in ad-supported responses.

-

ThePaypers — https://thepaypers.com/fintech/news/anthropic-launches-finance-ai-agents-after-usd-15-bln-joint-venture

↩Shares of established data providers plummeted, with FactSet Research Systems falling 8.1% and Morningstar dropping 3% as investors priced in the potential for AI to cannibalize traditional analyst roles.

-

Vals AI Finance Agent leaderboard — https://www.vals.ai/benchmarks/finance_agent

↩Claude Opus 4.7 secured the top position with an accuracy score of 64.37%, outperforming rivals such as GPT-5.5 (59.96%) and DeepSeek V4 (60.39%) — still failing on nearly one-third of professional-grade financial tasks.

-

FIS press release — https://www.fisglobal.com/about-us/media-room/press-release/2026/fis-brings-agentic-ai-to-banking-with-anthropic-starting-with-financial-crimes

↩Investigators spend approximately 80% of their time manually gathering evidence from disparate banking systems… the agent connects directly to FIS’s core banking systems which power nearly 12% of the global economy.

-

LetsDataScience — Walleye deployment writeup — https://letsdatascience.com/blog/anthropic-financial-services-agents-jamie-dimon-may-5

↩Central to this deployment is an internal intelligence layer nicknamed ‘The Borg’ — a collective memory system that records and ingests transcripts from nearly all company Zoom calls, phone conversations, and meetings.

-

BigGo / developer commentary on MCP — https://finance.biggo.com/news/202605052021_Anthropic_finance_agents_Claude_banking

↩Researchers demonstrated that the default implementation [of MCP stdio transport] allows for Remote Code Execution (RCE) by executing unsanitized commands on the host machine… Anthropic has characterized this behavior as ‘expected’.

-

Crowdfund Insider / regulatory analysis — https://www.crowdfundinsider.com/2026/05/277386-anthropic-to-cater-to-financial-services-firms-automates-analysis-pitchbooks-more/

↩OCC Bulletin 2026-13 explicitly excluded generative and agentic AI from its immediate scope… regulators have signaled that traditional model risk frameworks (like the rescinded SR 11-7) are insufficient for the novel, non-linear reasoning of autonomous agents.