OpenAI's UDP voice relay, antirez ships Redis ARGREP, Gemini adds webhooks

Three infrastructure releases — OpenAI's UDP voice relay, antirez's Redis ARGREP, Gemini API webhooks — all land below the model layer.

OpenAI’s UDP voice relay, antirez ships Redis ARGREP, Gemini adds webhooks

TL;DR

- OpenAI’s Realtime transport swaps SFUs for stateless UDP relays serving 900M WAU.

- Trickle ICE and STUN/TURN are missing from the relay, locking out UDP-firewalled clients.

- antirez’s PR 15162 lands a sparse Redis array with

ARGREPregex over RAM-resident data. - Gemini’s webhook removes only the status poll, not the Cloud Storage result fetch.

- Batch jobs sit PENDING up to 72 hours, capping the latency the callback actually removes.

Three shipments today, all in the plumbing rather than the model. OpenAI’s Realtime stack trades SFUs for a stateless UDP relay that routes 900M WAU off a single ICE ufrag, encoding the destination directly in the protocol field. Gemini’s API picks up Standard Webhooks for batch, Deep Research, and long video jobs, killing the status poll if not the Cloud Storage result fetch. antirez’s Redis PR 15162 lands a sparse three-level array with server-side ARGREP, pushing regex into the database itself.

None of today’s tech wins are about model capability — they’re about the layer underneath, where latency, locking, and round-trips actually live. antirez’s four-month build leaned on multi-model cross-review from Claude, GPT-5, and Gemini, which he insists on calling amplification, not autonomy — a reminder that LLMs are now showing up most usefully as collaborators on the infrastructure work, not the headline of it.

OpenAI’s voice stack swaps SFUs for stateless UDP relays

Source: openai-blog · published 2026-05-04

TL;DR

- OpenAI’s Realtime transport ditches SFUs for a stateless relay + stateful transceiver, serving 900M WAU.

- Relay routes the first packet via ICE ufrag, encoding destination in the protocol field — no DB lookup.

- Critics flag missing trickle ICE and no integrated STUN/TURN — users behind UDP-blocking firewalls are locked out.

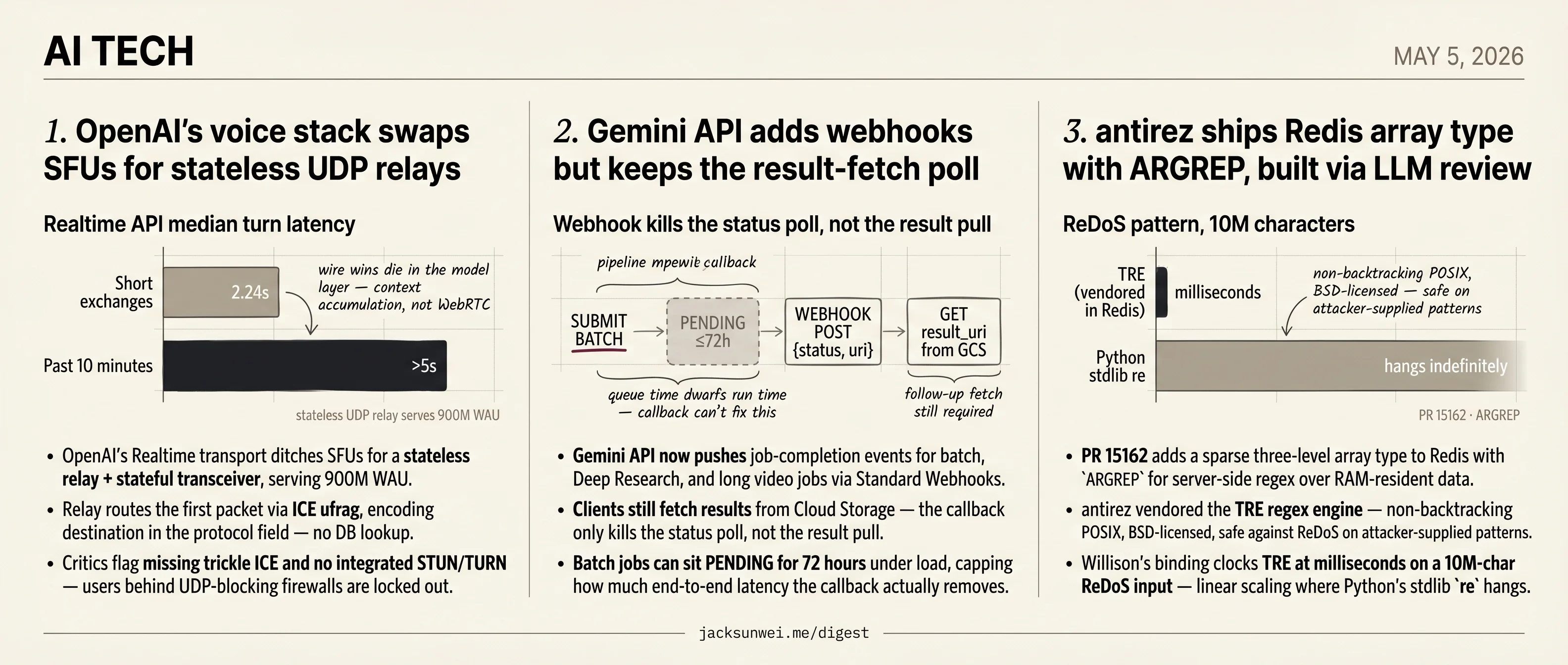

- End-to-end latency is bounded by the model: median turn latency climbs from 2.24s to over 5s past 10 minutes.

What OpenAI actually changed

The headline trick is routing without state. A standard WebRTC deployment either runs an SFU (great for fan-out, overkill for 1:1) or hands every session a dedicated public port (a Kubernetes nightmare at OpenAI’s scale). OpenAI’s relay does neither: it terminates a small, fixed UDP surface, peeks at the incoming packet’s ICE username fragment, and forwards to the right transceiver pod. Because the server-side ufrag is generated with routing metadata baked in, the relay never decrypts media, never holds session state, and never blocks on a lookup. The transceiver — a separate stateful service — handles ICE, DTLS, SRTP, and the bridge into OpenAI’s internal inference protocol.

flowchart LR

U[Client browser/mobile] -->|Cloudflare geo-steering| R[Stateless UDP relay]

R -->|ufrag-routed first packet| T[Stateful transceiver pod]

T -->|DTLS/SRTP terminated| I[Inference backend]

R -. fixed small UDP surface .- K[(K8s pod scaling)]

The Go implementation leans on SO_REUSEPORT plus runtime.LockOSThread to pin UDP-reading goroutines to specific cores. That’s a well-trodden pattern: an independent benchmark of the same approach scaled a software router from 1.3 Gbit/s on one core to 7.5 Gbit/s on ten — near-linear 1. The catch, which the blog glosses over, is that pinned goroutines are punishing if they ever block; practitioners measure roughly 10× more context switches when a LockOSThread loop hits a channel or syscall it can’t satisfy immediately 2. The relay’s hot path has to be a tight poll-and-forward with zero blocking work, full stop.

Where the architecture leaks

The post is silent on connectivity fallback, and that’s the biggest omission. Hacker News critics surfaced by independent coverage point out the Realtime stack ships without trickle ICE and without integrated STUN/TURN servers — so anyone behind a UDP-blocking firewall or restrictive Wi-Fi has no path in 3. Requiring full ICE gathering before session start also chips away at the latency wins the architecture exists to deliver 3. And the stateless ingress design means developer backends can’t forcibly kill a rogue session, while ephemeral keys are sniffable and reusable 3.

The shape of the design is recognizable. Cloudflare Calls uses anycast to put the first hop within ~10ms of most users globally 4, and OpenAI’s “nearest cluster via Cloudflare steering” follows the same playbook — but optimized for 1:1 model sessions rather than Cloudflare’s many-viewer fan-out, which is why an SFU was the wrong tool here.

The latency the user actually feels

Wire-level wins don’t survive contact with the model. Independent benchmarks put time-to-first-voice on the Realtime API at 450–900ms and TTFB at 250–500ms on stable networks 5 — already well above what the transport alone implies. Worse, a developer running production traffic reported median turn latency rising from 2.24s in short exchanges to over 5s past the 10-minute mark 6. That’s not a WebRTC problem; that’s context accumulation, VAD tuning, and model-side compute. OpenAI’s relay is a real piece of engineering, but the perceived “awkward pause” the blog opens with lives mostly in the layers above it.

Gemini API adds webhooks but keeps the result-fetch poll

Source: google-ai-blog · published 2026-05-04

TL;DR

- Gemini API now pushes job-completion events for batch, Deep Research, and long video jobs via Standard Webhooks.

- Clients still fetch results from Cloud Storage — the callback only kills the status poll, not the result pull.

- Batch jobs can sit PENDING for 72 hours under load, capping how much end-to-end latency the callback actually removes.

- Security model splits static project-wide HMAC-SHA256 secrets from dynamic per-request JWKS/RS256 verification against Google’s well-known endpoint.

What shipped

Google added event-driven webhooks to the Gemini API, letting developers register a URL that receives an HTTP POST when a long-running job — Batch API runs, Deep Research, long video generation — finishes. The implementation follows the Svix-led Standard Webhooks specification: every request carries webhook-signature, webhook-id, and webhook-timestamp headers, delivery is at-least-once with retries up to 24 hours, and authentication splits into two modes. Static, project-level webhooks use a one-time HMAC-SHA256 signing secret; dynamic, per-request webhooks are verified asymmetrically against Google’s JWKS endpoint at generativelanguage.googleapis.com/.well-known/jwks.json 78. The cookbook pins google-genai>=1.73.1 and tells verifiers to drop payloads older than five minutes to defeat replay 8.

The “eliminate polling” framing is half true

The announcement pitches webhooks as the end of polling. In practice, the payload is a status snapshot plus a Cloud Storage URI — consumers still make a follow-up call to pull results 8. That removes the status poll, not the result fetch:

sequenceDiagram

participant App

participant Gemini as Gemini Batch API

participant GCS as Cloud Storage

App->>Gemini: submit batch + webhook_config

Note over Gemini: PENDING (up to 72h under load)

Gemini-->>App: POST {status, result_uri}

App->>GCS: GET result_uri

GCS-->>App: payload

The bigger asterisk sits in Google’s own docs: under load, batch jobs can stay in a PENDING queue for up to 72 hours before expiring 9. A webhook that fires “the instant a job completes” matters less when queue time dwarfs run time, and no callback architecture fixes that.

Catch-up, not breakthrough

Read against the serverless-inference market, this is parity work. Replicate productized exactly this pattern years ago by outsourcing delivery to Svix and emitting queued/starting/completed events; Modal took a Python-native sync/async route instead 10. Anthropic’s Batch API still makes you poll /v1/messages/batches/{id}, so Google does pull ahead there. The choice of Standard Webhooks — the same spec OpenAI adopted for its batch and Deep Research callbacks — points at convergence on Svix’s conventions across the frontier labs.

There’s also a redundancy problem inside Google’s own stack: Vertex AI customers already wire long-running jobs to Eventarc and Pub/Sub, which fan out to Cloud Functions and integrate with GCP IAM and observability 11. The new API-level webhooks are easier to prototype but live outside that fabric, forcing a choice between two Google-blessed eventing models.

Trust context

This is the same Gemini API surface that, earlier in the year, silently inherited GCP keys Google had previously labeled “not secrets” — one developer watched a bill jump from $180 to over $82,000 in 48 hours after enabling Gemini on an existing project 12. That history sharpens scrutiny of the new defaults: a single static HMAC secret per project is convenient but blast-radius-heavy, and the dynamic JWKS path is the safer pattern teams should reach for first. The webhook launch is a real ergonomic win. It is not the polling-killer the post-title implies.

antirez ships Redis array type with ARGREP, built via LLM review

Source: simon-willison · published 2026-05-04

TL;DR

- PR 15162 adds a sparse three-level array type to Redis with

ARGREPfor server-side regex over RAM-resident data. - antirez vendored the TRE regex engine — non-backtracking POSIX, BSD-licensed, safe against ReDoS on attacker-supplied patterns.

- Willison’s binding clocks TRE at milliseconds on a 10M-char ReDoS input — linear scaling where Python’s stdlib

rehangs. - The four-month build leaned on multi-model cross-review (Claude, GPT-5, Gemini), which antirez calls amplification, not autonomy.

What actually shipped

Salvatore Sanfilippo’s PR 15162 adds a first-class Array data type to Redis, and the design is more interesting than the 18-command surface suggests. Under the hood it’s a three-level sparse directory — a “super directory” of sliced dense directories pointing to array slices — so ARSET myarr 999999 foo doesn’t allocate the intervening million slots, while dense regions still get near-O(1) access 13. Simon Willison wrapped the experimental branch in a WASM-compiled browser playground so you can poke at it without building from source.

The motivating workload is explicit: AI-agent memory. Millions of Markdown fragments held in RAM, filtered server-side via ARGREP, which exposes EXACT, MATCH, GLOB, and RE modes against a vendored regex engine 13. That last mode is where the design choice gets load-bearing.

Why TRE, and why it matters

ARGREP takes user-supplied patterns. A backtracking regex engine in that position is a trivial denial-of-service vector — one crafted pattern hangs the server. antirez’s answer was to vendor TRE, a small pure-C POSIX engine that uses a non-backtracking algorithm and recently relicensed to 2-clause BSD, making it legally clean inside Redis 8’s tri-license 13.

Willison’s companion post is the empirical gut-check. He built a ctypes binding and ran TRE against a classic ReDoS pattern over 10 million characters: TRE finishes in milliseconds with linear scaling, while Python’s stdlib re effectively never returns 14. That independently validates the most safety-critical engineering call in the PR.

flowchart LR

A[AI agent] -->|user-supplied pattern| B[ARGREP myarr]

B --> C{TRE engine<br/>non-backtracking}

C --> D[Sparse 3-level array<br/>RAM-resident fragments]

D --> E[Matched results]

The agentic-engineering claim — and its dissenters

The methodology story is what’s getting argued about. antirez frames the four-month build as proof that an expert plus an adversarial multi-model loop — Claude Opus, GPT-5.x, and Gemini cross-reviewing each other’s diffs — produces deeper work in the same calendar time, not faster work. He’s emphatic that LLMs are “good amplifiers and bad one-man-band workers” and that “for high quality system programming tasks you have to still be fully involved,” explicitly rejecting vibe coding 15.

The wider community is skeptical that this generalizes. Casey Muratori compares AI-assisted coding to “having someone else play the piano for you,” arguing the babysitting tax against rigorous systems requirements often cancels the productivity gain 16. Quantitative critiques pile on: a 211M-line analysis found AI-assisted code carries roughly 50% more duplication than refactored equivalents, and independent benchmarks suggest up to half of generated code introduces vulnerabilities 17. An antirez-caliber reviewer absorbs those risks. A median team probably doesn’t.

The Valkey fault-line widens

There’s a licensing kicker. Redis 8 ships under AGPLv3 / SSPL / RSALv2; the Valkey fork remains BSD-3-Clause. That asymmetry means Valkey legally cannot absorb PR 15162, while Redis can still pull anything Valkey ships — a “one-way code flow” the Valkey maintainers have acknowledged 18. Valkey has been investing in multi-threaded I/O and native JSON instead, sidestepping the “computational data structure” direction antirez is steering Redis toward. The array type isn’t just a feature; it’s a divergence marker.

Further reading

- TRE Python binding — ReDoS robustness demo — simon-willison

Round-ups

Granite 4.1 3B SVG Pelican Gallery

Source: simon-willison

Simon Willison ran his “pelican riding a bicycle” SVG prompt against all 21 Unsloth GGUF quantizations of IBM’s new Apache 2.0-licensed Granite 4.1 3B model, spanning 1.2GB to 6.34GB. Verdict: no discernible quality-versus-size pattern, and every output is uniformly terrible.

Footnotes

-

Medium — High Performance Network Programming — https://medium.com/high-performance-network-programming/performance-optimisation-using-so-reuseport-c0fe4f2d3f88

↩throughput increased from 1.3 Gbit/s on a single core to 7.5 Gbit/s on 10 cores by simply parallelizing data planes with SO_REUSEPORT

-

OneUptime engineering blog — https://oneuptime.com/blog/post/2026-01-30-udp-optimization-strategies/view

↩If a pinned goroutine blocks frequently on channels or system calls, it can lead to a 10x increase in context switches, as the runtime cannot easily reuse that dedicated thread for other tasks.

-

AwesomeAgents.ai analysis — https://awesomeagents.ai/news/openai-voice-ai-webrtc-kubernetes/

↩ ↩2 ↩3Critics on Hacker News point out that the architecture lacks support for trickle ICE and does not provide integrated STUN/TURN servers… users on enterprise networks or public Wi-Fi that block UDP traffic may find the service completely unreachable.

-

Cloudflare blog — Calls Anycast WebRTC — https://blog.cloudflare.com/cloudflare-calls-anycast-webrtc/

↩Cloudflare Calls disrupts this by using Anycast routing… ensures the ‘first hop’ for any user is always the geographically closest data center, reducing initial latency to under 10ms for most of the world’s population.

-

eesel.ai — Realtime API vs Whisper benchmark — https://www.eesel.ai/blog/realtime-api-vs-whisper-vs-tts-api

↩time-to-first-voice (TTFV) typically falls between 450ms and 900ms on stable networks, while time-to-first-byte (TTFB) is often around 250ms to 500ms

-

Reddit r/PromptEngineering — Realtime API field report — https://www.reddit.com/r/PromptEngineering/comments/1svq3br/what_i_learned_from_running_openai_realtime_api/

↩median turn latency rose from 2.24 seconds in short exchanges to over 5 seconds in calls lasting longer than ten minutes

-

MarkTechPost (independent coverage) — https://www.marktechpost.com/2026/05/05/google-adds-event-driven-webhooks-to-the-gemini-api-eliminating-the-need-for-polling-in-long-running-ai-jobs/

↩Static webhooks are project-wide and use HMAC-SHA256 with a one-time signing secret; dynamic webhooks override per-request and are verified asymmetrically against Google’s JWKS endpoint.

-

google-gemini/cookbook Webhooks.ipynb — https://github.com/google-gemini/cookbook/blob/main/quickstarts/Webhooks.ipynb

↩ ↩2 ↩3Requires google-genai>=1.73.1; dynamic webhooks must be verified by extracting the JWT from webhook-signature and validating RS256 against https://generativelanguage.googleapis.com/.well-known/jwks.json, and payloads older than 5 minutes should be rejected.

-

ai.google.dev Batch/Webhooks docs — https://ai.google.dev/gemini-api/docs/webhooks

↩Under load, batch jobs can sit in a PENDING queue for up to 72 hours before expiring — meaning the low-latency webhook only fires once the job actually starts, not when it was submitted.

-

Nightwatcher AI (Replicate vs Modal comparison) — https://nightwatcherai.com/blog/replicate-vs-modal-comparison

↩Replicate offloads webhook reliability to Svix and emits queued/starting/completed events — the async-by-default pattern Google is now catching up to, while Modal still leans on a Python-native sync/async SDK.

-

YouTube walkthrough (Vertex AI eventing) — https://www.youtube.com/watch?v=MRDK8gAzDI8

↩Vertex AI users already wire long-running jobs to Eventarc and Pub/Sub, which can fan out to Cloud Functions or webhooks — a more GCP-native path than the new Gemini API webhooks.

-

The Hacker News (Truffle Security disclosure) — https://thehackernews.com/2026/02/thousands-of-public-google-cloud-api.html

↩Enabling Gemini on existing GCP projects silently granted Gemini access to keys Google had previously told developers were ‘not secrets’ — one developer saw bills jump from $180 to over $82,000 in 48 hours.

-

Pasquale Pillitteri — Redis Array technical writeup — https://pasqualepillitteri.it/en/news/1902/redis-array-antirez-ai-agents-knowledge-base

↩ ↩2 ↩3The new Array uses a three-level sparse representation — a ‘super directory’ of sliced dense directories that point to array slices — so writing index 0 and index 999,999 doesn’t blow up memory, while ARGREP runs server-side regex over RAM-resident data via the vendored TRE library.

-

Simon Willison — TRE Python binding (ReDoS demo) — https://simonwillison.net/2026/May/4/tre-python-binding/

↩Against a classic ReDoS-vulnerable pattern over 10 million characters, TRE completes in milliseconds with linear scaling, while Python’s stdlib re module hangs effectively indefinitely on the same input.

-

Pasquale Pillitteri — quoting antirez’s news/164 — https://pasqualepillitteri.it/en/news/1902/redis-array-antirez-ai-agents-knowledge-base

↩Sanfilippo describes LLMs as ‘good amplifiers and bad one-man-band workers’ and insists ‘for high quality system programming tasks you have to still be fully involved,’ rejecting ‘vibe coding’ in favor of what he calls Automatic Programming.

-

YouTube — Casey Muratori on AI-assisted coding — https://www.youtube.com/watch?v=suZ2Gt6i8do

↩Muratori compares AI-assisted coding to ‘having someone else play the piano for you’ and argues the cost of babysitting the model against rigorous systems requirements often negates the promised productivity gains.

-

FailingFast.io — AI coding benchmarks — https://failingfast.io/ai-coding-guide/benchmarks/

↩A 2024 analysis of 211 million lines of code found AI-assisted tools led to a roughly 50% increase in code duplication compared to refactoring, and independent benchmarks indicate up to half of AI-generated code may introduce vulnerabilities.

-

Pasquale Pillitteri — Valkey/licensing context — https://pasqualepillitteri.it/en/news/1902/redis-array-antirez-ai-agents-knowledge-base

↩Because Redis 8 ships under AGPLv3 / SSPL / RSALv2 while Valkey remains BSD-3-Clause, Valkey cannot legally absorb PR 15162; the reverse direction stays open, producing what maintainers describe as a ‘one-way code flow.‘